-

#1

Hi,

Sorry for my English, I'm French.

I have Proxmox 5.3.8 on a NUC with a 32 GB SSD and 4 GB of RAM.

I tested my RAM with a memory test and it is OK.

I use Proxmox for a single VM, Jeedom.

That works fine, but when I ping a Google server every hour to test my internet connection, Proxmox crashes.

I also tested with Pi-hole, and after one day Proxmox crashed, so I think it is related to requests over Ethernet?

Which log should I look at to find the problem?

I can use SSH if needed.

Thanks for your help.

-

#2

Hi,

Normally you should see something in the syslog:

/var/log/syslog

But I would also enable the persistent journald log.
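A minimal sketch of enabling the persistent journal, assuming journald still uses its default `Storage=auto` setting (with that setting it writes to disk once /var/log/journal exists). The function name and its optional root-prefix argument are illustrative only and are not part of Proxmox.

```shell
#!/bin/sh
# Create the directory journald uses for persistent storage.
# $1 is an optional root prefix (hypothetical, for dry runs); default is the real root.
enable_persistent_journal() {
    root="${1:-}"
    mkdir -p "${root}/var/log/journal" || return 1
    # Only restart the service when working on the real root as the root user.
    if [ -z "$root" ] && [ "$(id -u)" -eq 0 ] && command -v systemctl >/dev/null 2>&1; then
        systemctl restart systemd-journald
    fi
}

# Usage on the host (as root):
#   enable_persistent_journal
#   journalctl --list-boots      # boots recorded since the journal became persistent
#   journalctl -b -1 -e          # end of the log from the previous boot
```

After the next crash, the log of the boot that crashed should then still be readable with `journalctl -b -1`.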

-

#3

Hi,

Sorry, but I am new to this…

It is not working. This is what I typed:

I am logged in as root.

Thanks

Code:

root@proxmox:~# /var/log/syslog

-bash: /var/log/syslog: Permission denied

root@proxmox:~# mkdir /var/log/journal

root@proxmox:~# /var/log/syslog

-bash: /var/log/syslog: Permission denied

root@proxmox:~#

tim

Proxmox Retired Staff

-

#4

Try:

# less /var/log/syslog

To debug anything you will need some basic Linux knowledge. If you are interested in the topic, I bet there is a French guide out there as well.

-

#5

Thanks, it works, but there is no history.

This morning Proxmox crashed and had no Ethernet access, so I cut the power at the socket and powered it back on,

so the logs begin at this morning's power off/on.

Thanks

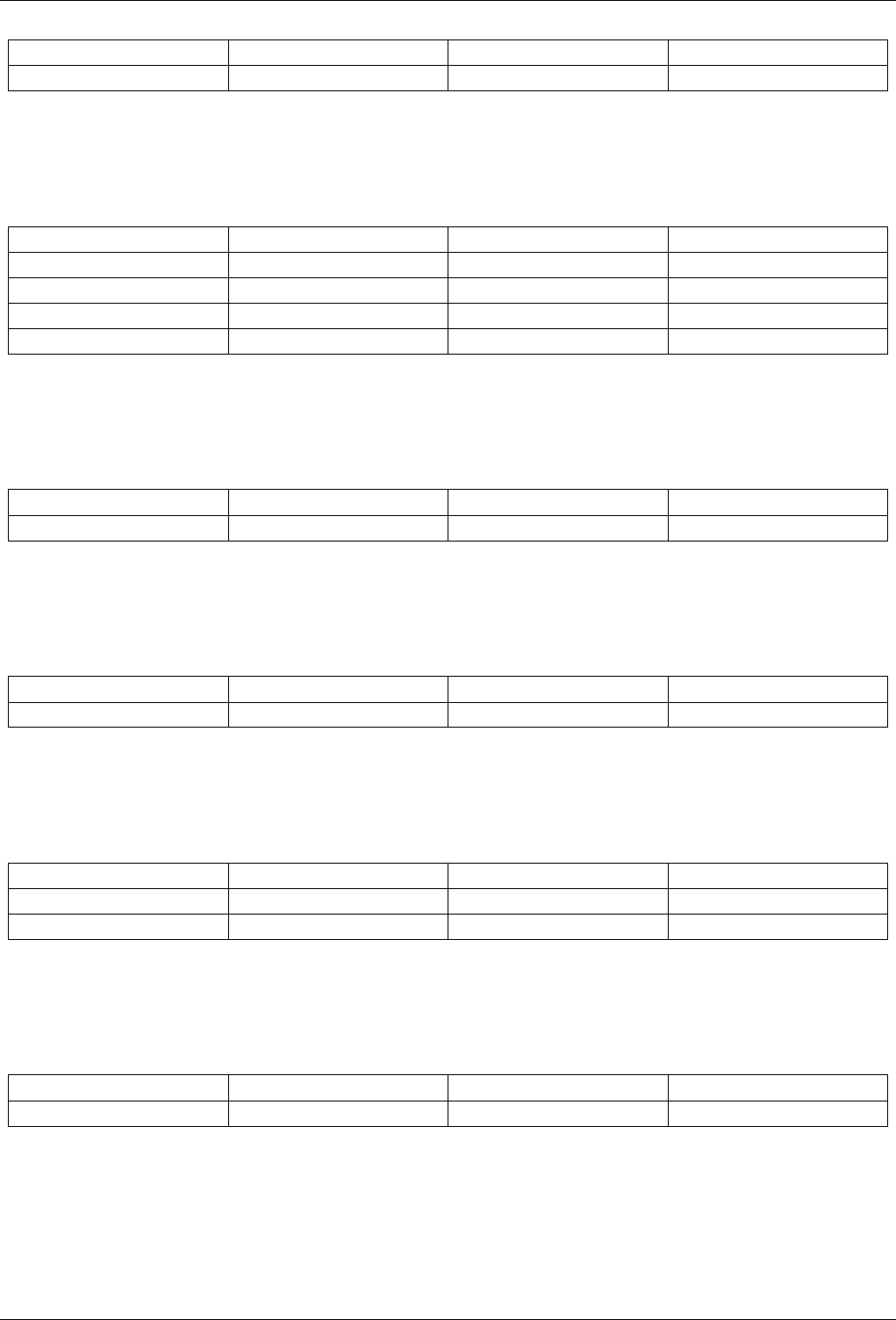

Mar 25 06:25:02 proxmox liblogging-stdlog: [origin software="rsyslogd" swVersion="8.24.0" x-pid="817" x-info="http://www.rsyslog.com"] rsyslogd was HUPed

Mar 25 06:25:02 proxmox liblogging-stdlog: [origin software="rsyslogd" swVersion="8.24.0" x-pid="817" x-info="http://www.rsyslog.com"] rsyslogd was HUPed

Mar 25 06:26:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:26:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:27:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:27:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:28:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:28:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:29:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:29:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:30:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:30:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:31:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:31:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:32:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:32:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:32:22 proxmox postfix/qmgr[1224]: E512B25949: from=<>, size=13921, nrcpt=1 (queue active)

Mar 25 06:33:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:33:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:33:02 proxmox postfix/smtp[23976]: E512B25949: to=<XXXXXX@gmail.com>, relay=none, delay=275711, delays=275671/0.01/40/0, dsn=4.4.3, status=deferred (Host or domain name not found. Name service error for name=gmail.com type=MX: Host not found, try again)

Mar 25 06:34:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:34:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:35:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:35:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:36:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:36:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:37:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:37:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:38:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:38:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:39:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:39:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:40:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:40:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:41:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:41:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:41:46 proxmox systemd[1]: Starting Daily apt upgrade and clean activities…

Mar 25 06:41:46 proxmox systemd[1]: Started Daily apt upgrade and clean activities.

Mar 25 06:41:46 proxmox systemd[1]: apt-daily-upgrade.timer: Adding 40min 1.878996s random time.

Mar 25 06:41:46 proxmox systemd[1]: apt-daily-upgrade.timer: Adding 21min 27.514893s random time.

Mar 25 06:42:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:42:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:43:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:43:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:44:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:44:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:45:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:45:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:46:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:46:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:47:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:47:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:48:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:48:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:49:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:49:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:50:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:50:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:51:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

Mar 25 06:51:01 proxmox systemd[1]: Started Proxmox VE replication runner.

Mar 25 06:52:00 proxmox systemd[1]: Starting Proxmox VE replication runner…

:

-

#6

Bumping this for help. As for the log history, do you have any ideas for monitoring?

Thanks a lot

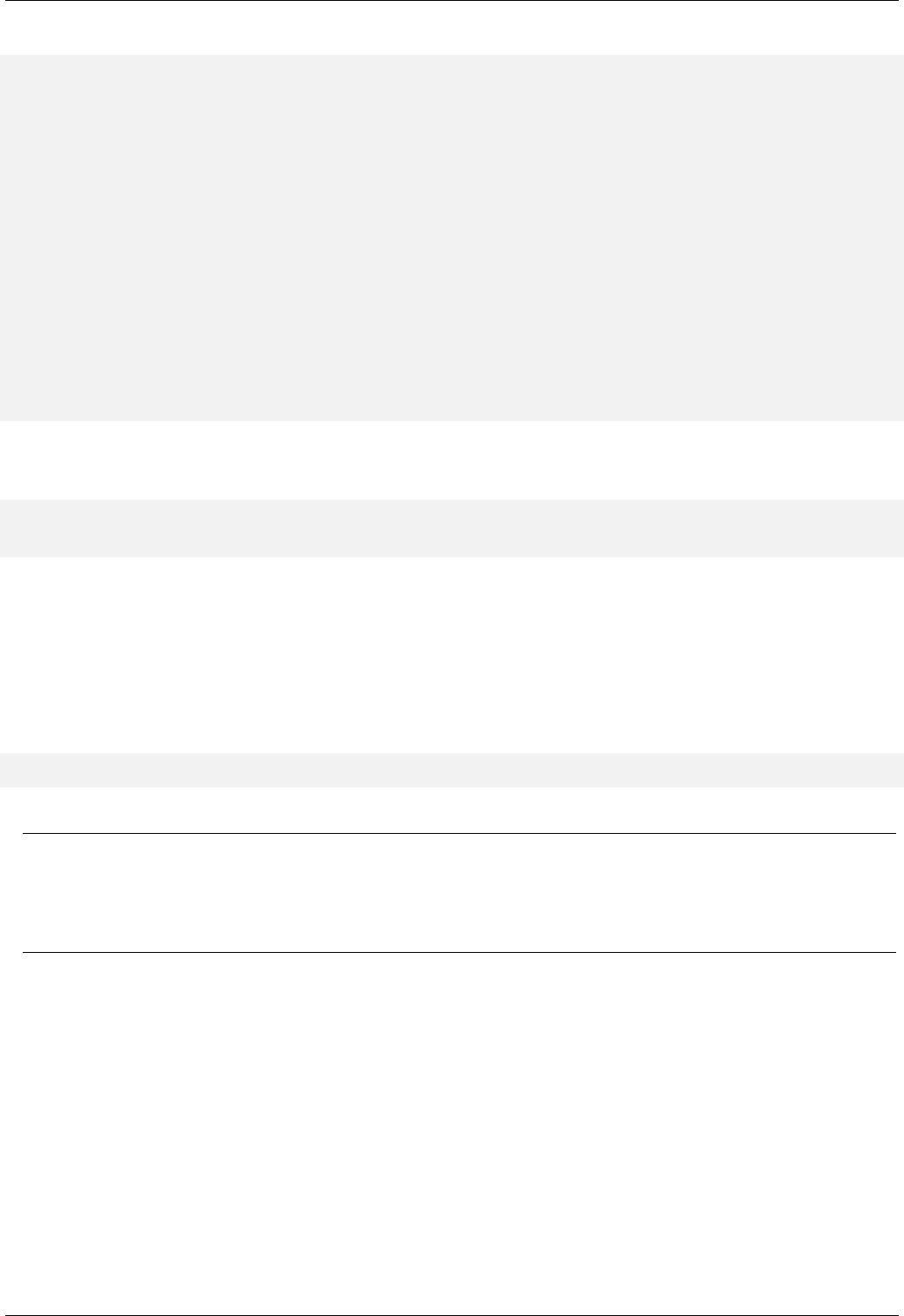

Introduction

- Sometimes you cannot get a trace log of a crashed kernel because it is never written to a file on any filesystem. This guide is meant to help you capture a kernel crash log.

- The easiest way to achieve this is to use a remote system over the network. This example uses two Proxmox VE 4.X hosts: one (server1) is the host to debug and the other (server2) is the host that catches the log.

- Server1 IP: 10.10.10.1

- Server2 IP: 10.10.10.2

Server1 configuration

Insert the following line to GRUB_CMDLINE_LINUX_DEFAULT in /etc/default/grub

netconsole=<port>@<your ip>/eth0,<port>@<remote logging ip>/<mac address of logging pc> loglevel=7

The whole line looks like this.

GRUB_CMDLINE_LINUX_DEFAULT="quiet netconsole=5555@10.10.10.1/eth0,5555@10.10.10.2/0c:c4:7a:44:1e:fe loglevel=7"

- Check https://www.kernel.org/doc/Documentation/networking/netconsole.txt for more information

Note: it is a good idea to disable this configuration once you are done debugging.

To find the MAC address, run the following on the logging server or laptop:

ip a
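As a small sketch of how the parameter is assembled, the helper below builds the `netconsole=` string from its six parts. The function name is hypothetical; the values are the ones from this example. The commented commands assume a GRUB-booted host, where the configuration has to be regenerated and the machine rebooted before the new kernel command line is used.

```shell
#!/bin/sh
# Build the netconsole= kernel parameter from its parts.
# Arguments: local port, local IP, local interface, remote port, remote IP, remote MAC.
netconsole_param() {
    printf 'netconsole=%s@%s/%s,%s@%s/%s' "$1" "$2" "$3" "$4" "$5" "$6"
}

netconsole_param 5555 10.10.10.1 eth0 5555 10.10.10.2 0c:c4:7a:44:1e:fe
echo

# After editing /etc/default/grub on server1 (as root), regenerate the GRUB
# configuration and reboot so the new kernel command line takes effect:
#   update-grub
#   reboot
# After the reboot, confirm the parameter is active:
#   grep -o 'netconsole=[^ ]*' /proc/cmdline
```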

Server2 configuration

Now you can either set up an rsyslog service to log the other server, or receive the log with nc directly on a console on a remote device.

Set up a rsyslog

- Create a new file /etc/rsyslog.d/01-netconsole-collector.conf with the following content:

# Start UDP server on port 5555

$ModLoad imudp

$UDPServerRun 5555

# Define templates

$template NetconsoleFile,"/var/log/netconsole/%fromhost-ip%.log"

$template NetconsoleFormat,"%rawmsg%"

# Accept endline characters (unfortunately these options are global)

$EscapeControlCharactersOnReceive off

$DropTrailingLFOnReception off

# Store collected logs using templates without local ones

:fromhost-ip, !isequal, "127.0.0.1" ?NetconsoleFile;NetconsoleFormat

# Discard logs that match the rule above

& ~

- Restart rsyslog

systemctl restart rsyslog

- Note: it is a good idea to disable this configuration once you are done debugging, because $EscapeControlCharactersOnReceive and $DropTrailingLFOnReception are global options and they change the default behaviour of rsyslog.

Output on a remote console

nc -l -p 5555 -u

Examination

- We can check that everything works by intentionally causing a kernel crash (be careful, it is going to be a real crash). Type the following command on server1:

echo c > /proc/sysrq-trigger

- Server1 will crash and you should get a crash log in /var/log/netconsole/10.10.10.1.log on server2

Over the past few years I have worked very closely with Proxmox clusters: many clients need their own infrastructure where they can develop their project. That is why I can describe the most common mistakes and problems that you may run into as well. In addition, we will of course set up a three-node cluster from scratch.

A Proxmox cluster can consist of two or more servers. The maximum number of nodes in a cluster is 32. Our own cluster will consist of three nodes on multicast (in this article I will also describe how to bring up a cluster on unicast, which matters if you base your cluster infrastructure on Hetzner or OVH, for example). In short, multicast allows data to be sent to several nodes at once. With multicast we do not have to think about the number of nodes in the cluster (within the limit above).

The cluster itself is built on an internal network (it is important that the IP addresses are in the same subnet). Hetzner and OVH let you join nodes in different data centers into a cluster using Virtual Switch (Hetzner) and vRack (OVH); we will also talk about Virtual Switch in this article. If your hosting provider does not offer a similar technology, you can use OVS (Open vSwitch), which Proxmox supports natively, or a VPN. In that case, however, I recommend using unicast with a small number of nodes: clusters on this kind of network infrastructure often simply "fall apart" and have to be restored. That is why I try to work with OVH and Hetzner, where I have seen fewer such incidents. But first of all, study the hosting provider you are going to use: whether it has an alternative technology, what solutions it offers, whether it supports multicast, and so on.

Installing Proxmox

Proxmox can be installed in two ways: with the ISO installer or through the shell. We choose the second way, so install Debian on the server.

Let's move on to installing Proxmox on each server. The installation is very simple and is described in the official documentation.

Add the Proxmox repository and its key:

echo "deb http://download.proxmox.com/debian/pve stretch pve-no-subscription" > /etc/apt/sources.list.d/pve-install-repo.list

wget http://download.proxmox.com/debian/proxmox-ve-release-5.x.gpg -O /etc/apt/trusted.gpg.d/proxmox-ve-release-5.x.gpg

chmod +r /etc/apt/trusted.gpg.d/proxmox-ve-release-5.x.gpg # optional, if you have a changed default umask

Update the repositories and the system itself:

apt update && apt dist-upgrade

After a successful upgrade, install the required Proxmox packages:

apt install proxmox-ve postfix open-iscsi

Note: during installation Postfix and grub will be configured, and one of them may fail with an error. This may be because the hostname does not resolve by name. Edit the hosts entries and run apt-get update.

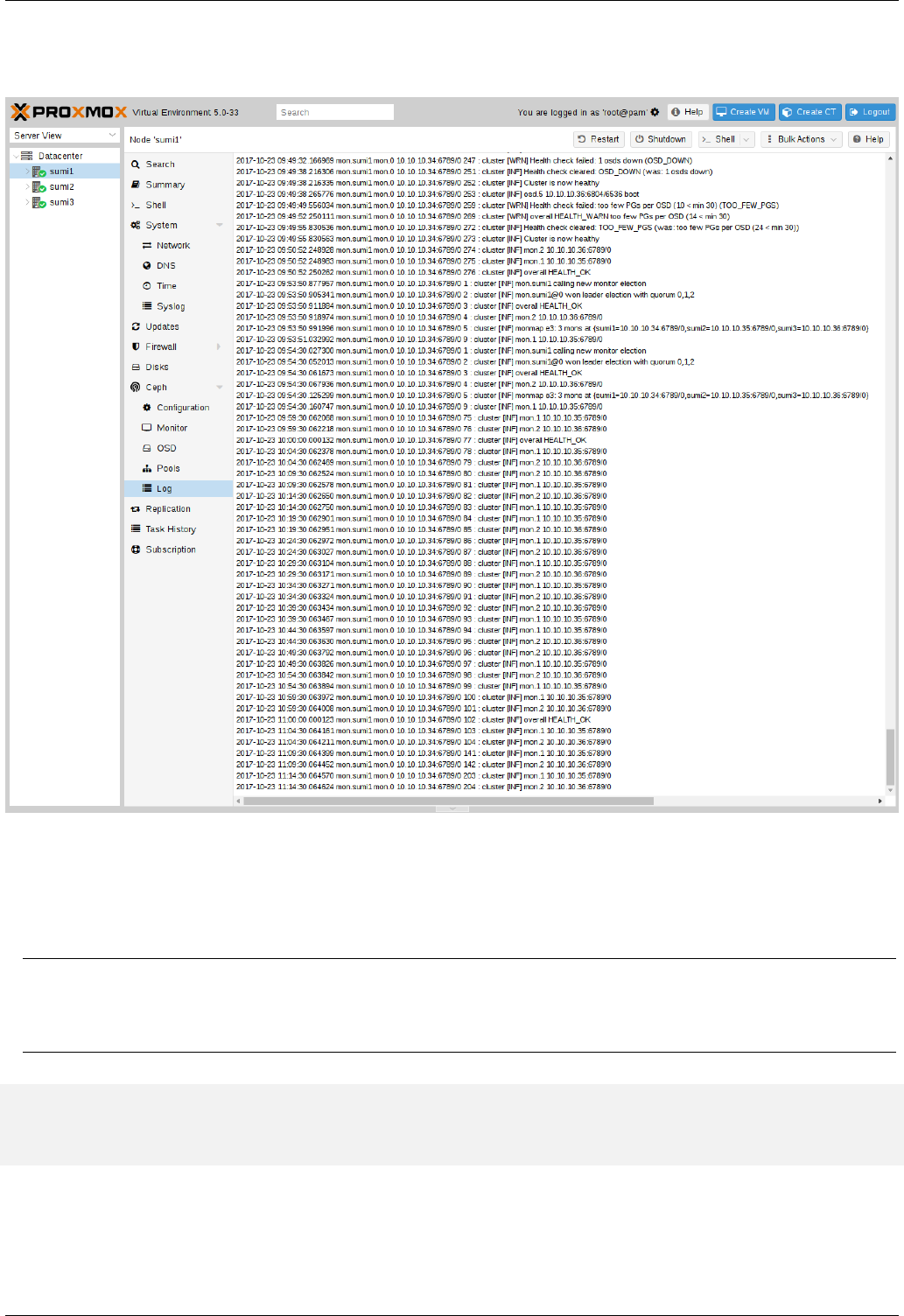

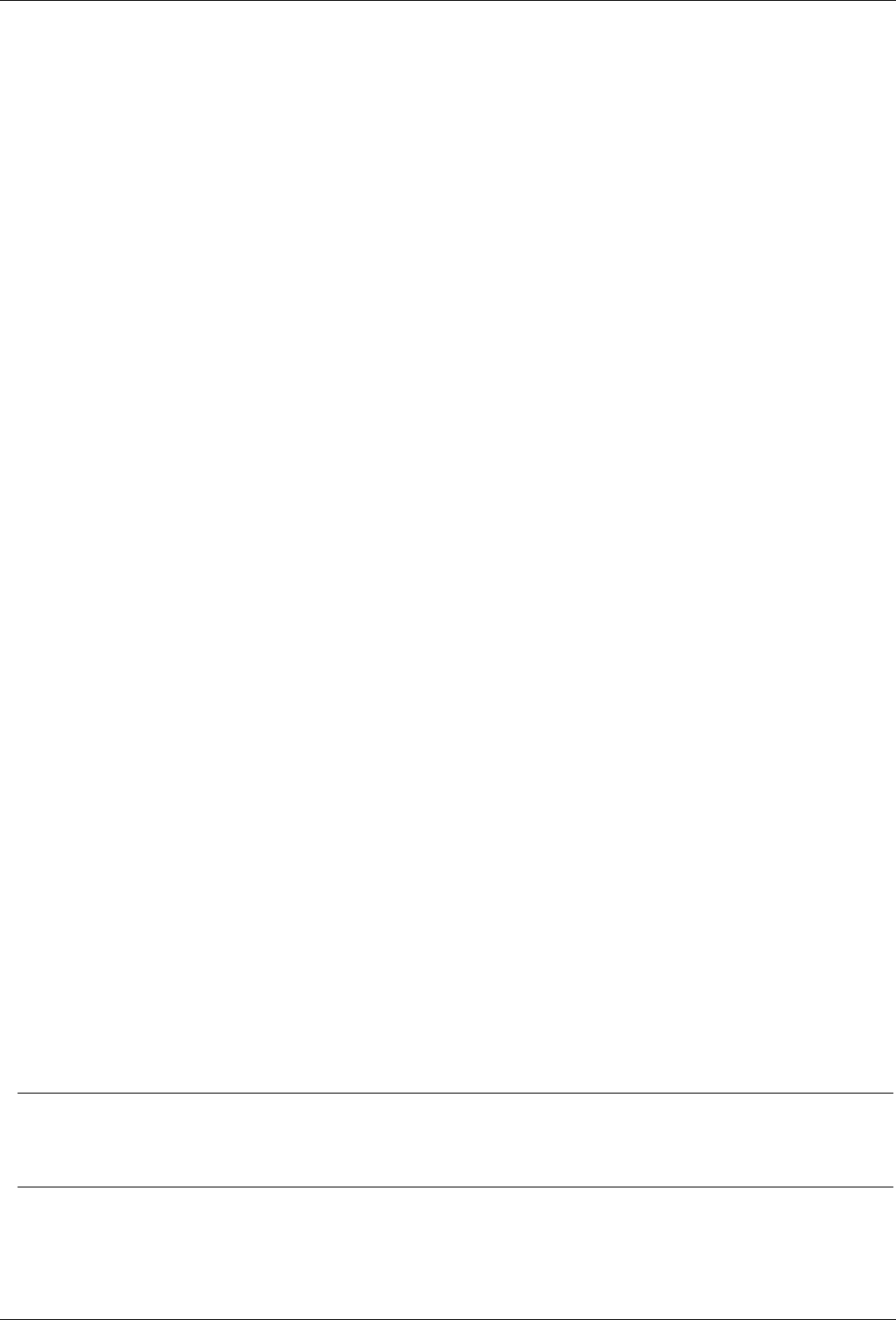

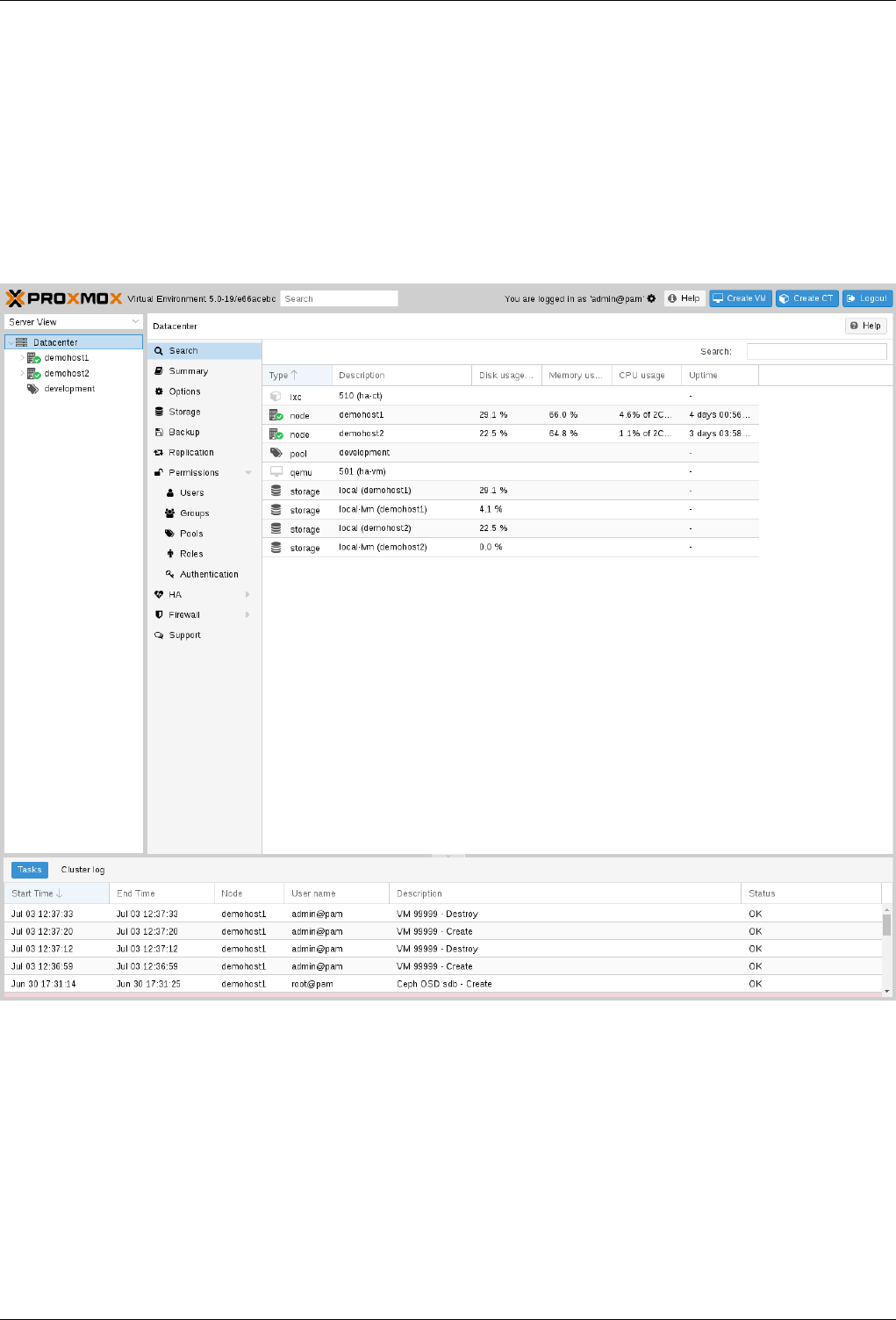

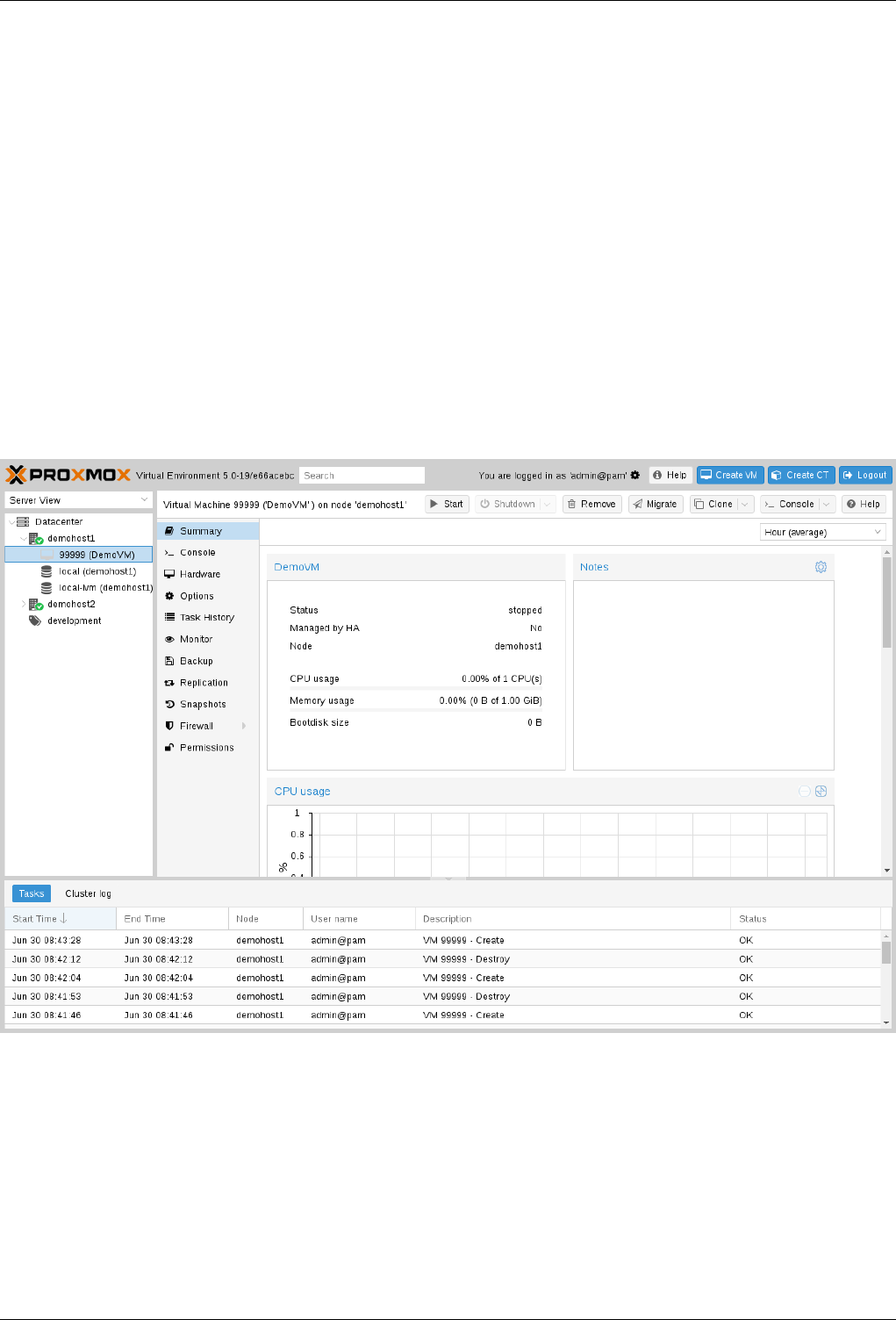

From this point on we can log in to the Proxmox web interface at https://<external-ip-address>:8006 (you will run into an untrusted certificate warning when connecting).

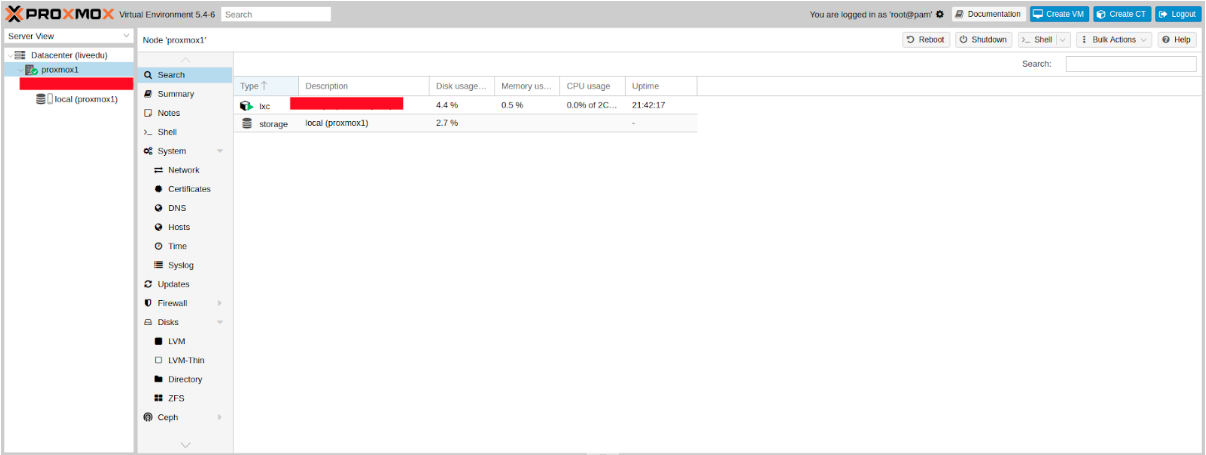

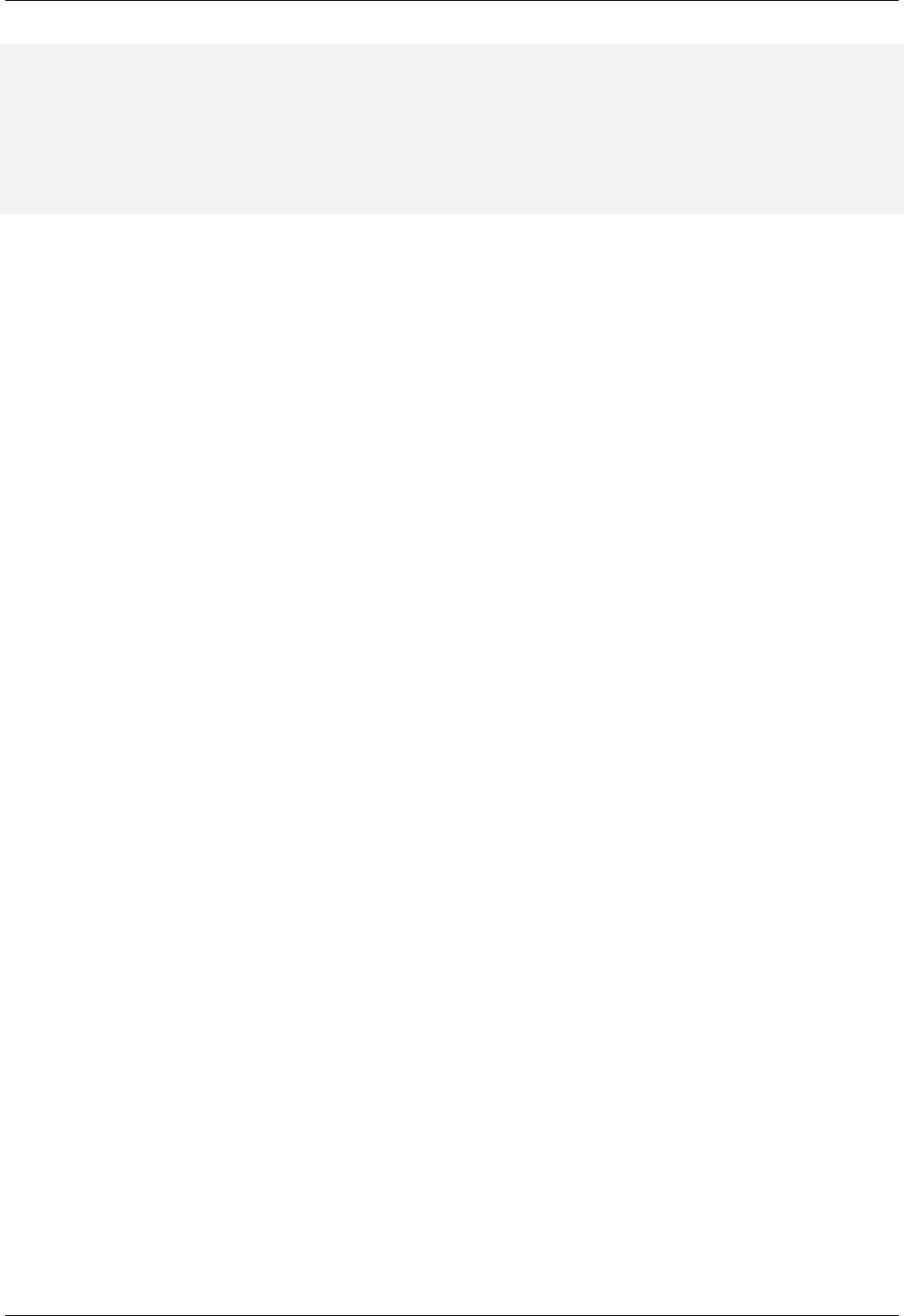

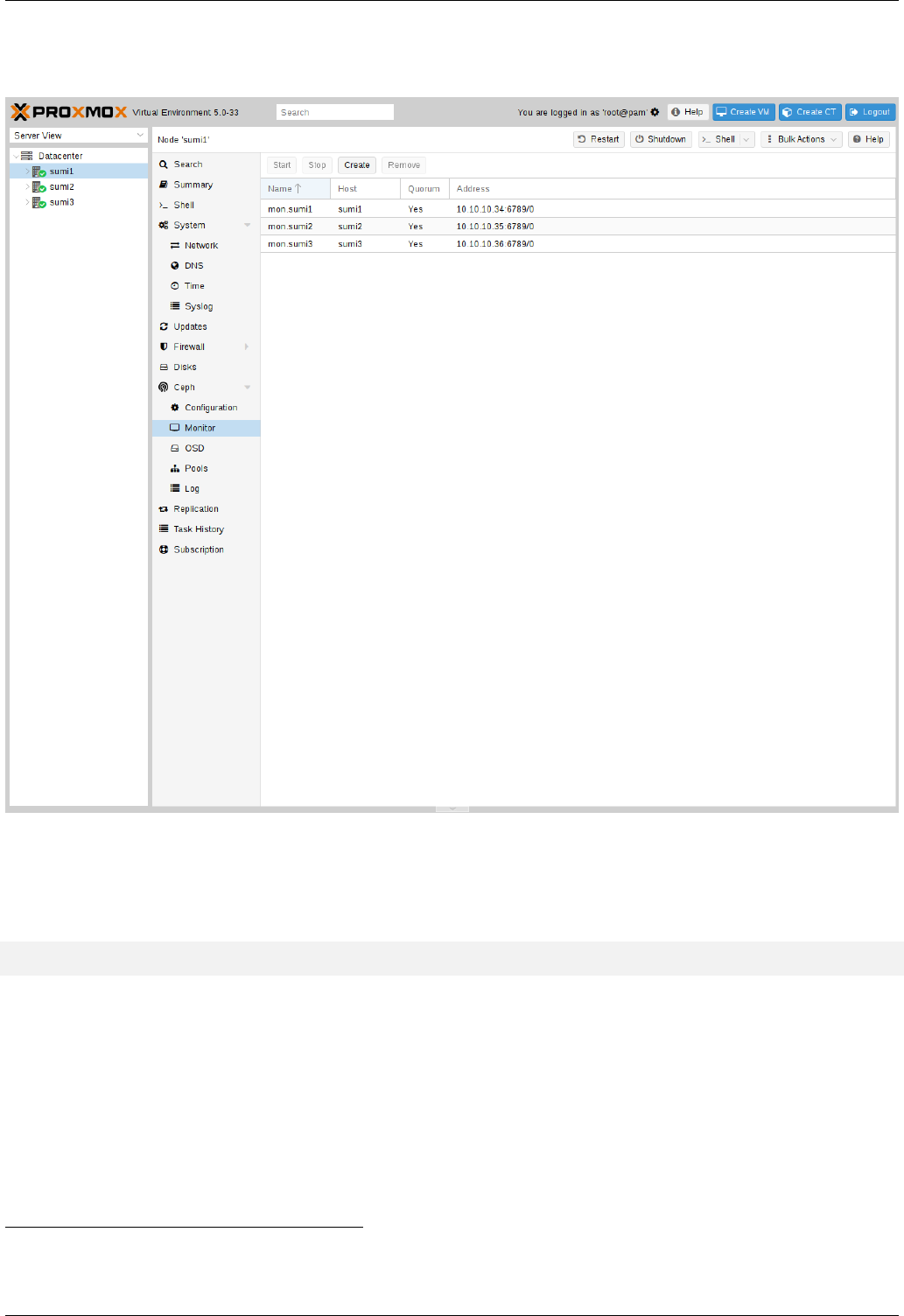

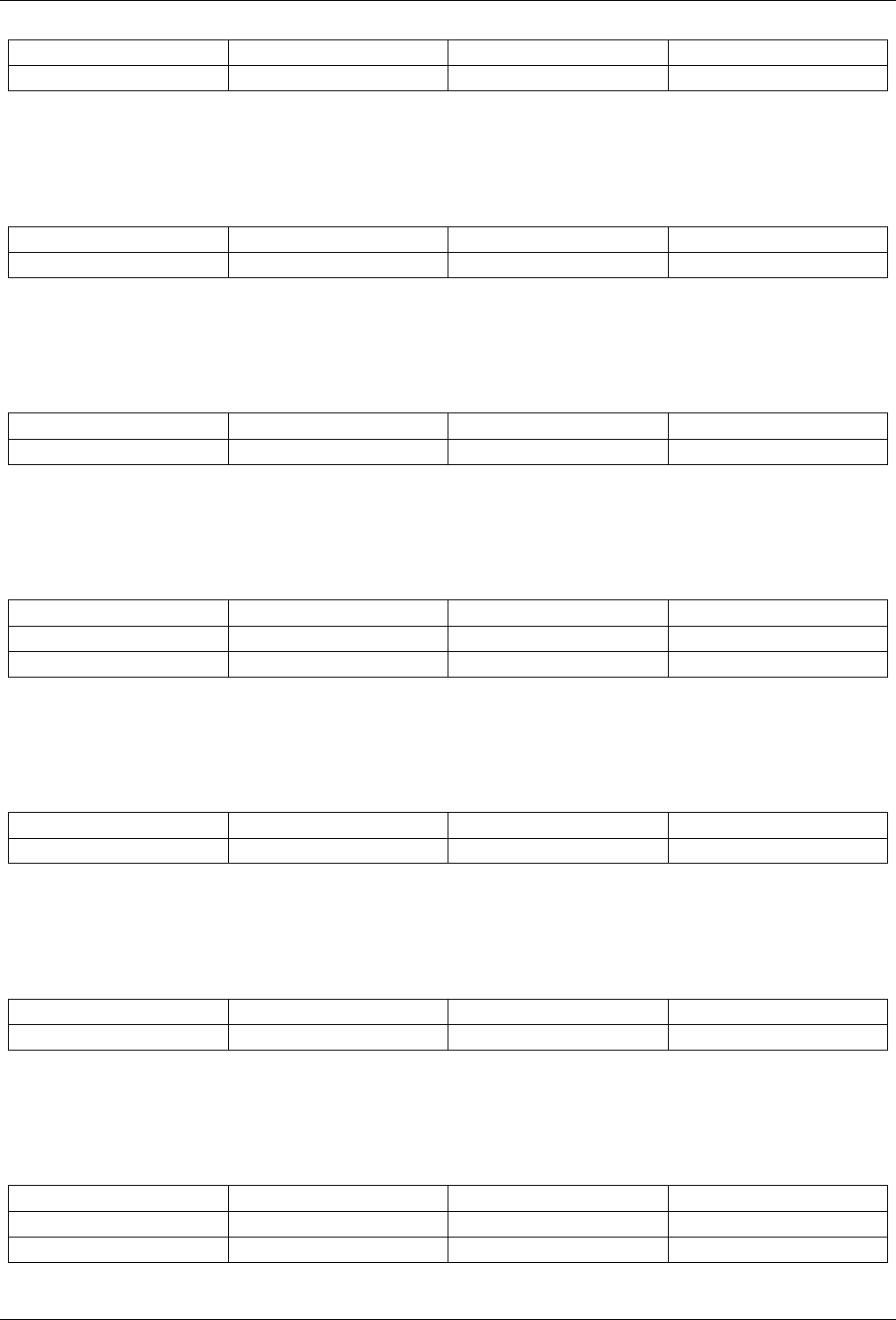

Figure 1. Web interface of a Proxmox node

Installing Nginx and a Let's Encrypt certificate

I do not much like the situation with the certificate and the IP address, so I suggest installing Nginx and setting up a Let's Encrypt certificate. I will not describe the Nginx installation; I will only leave the files that matter for the Let's Encrypt certificate:

/etc/nginx/snippets/letsencrypt.conf

location ^~ /.well-known/acme-challenge/ {

allow all;

root /var/lib/letsencrypt/;

default_type "text/plain";

try_files $uri =404;

}

Command to issue the SSL certificate:

certbot certonly --agree-tos --email sos@livelinux.info --webroot -w /var/lib/letsencrypt/ -d proxmox1.domain.name

Site configuration in NGINX

upstream proxmox1.domain.name {

server 127.0.0.1:8006;

}

server {

listen 80;

server_name proxmox1.domain.name;

include snippets/letsencrypt.conf;

return 301 https://$host$request_uri;

}

server {

listen 443 ssl;

server_name proxmox1.domain.name;

access_log /var/log/nginx/proxmox1.domain.name.access.log;

error_log /var/log/nginx/proxmox1.domain.name.error.log;

include snippets/letsencrypt.conf;

ssl_certificate /etc/letsencrypt/live/proxmox1.domain.name/fullchain.pem;

ssl_certificate_key /etc/letsencrypt/live/proxmox1.domain.name/privkey.pem;

location / {

proxy_pass https://proxmox1.domain.name;

proxy_next_upstream error timeout invalid_header http_500 http_502 http_503 http_504;

proxy_redirect off;

proxy_buffering off;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

}

}

Do not forget to set the SSL certificate to renew automatically via cron once it is installed:

0 */12 * * * test -x /usr/bin/certbot -a \! -d /run/systemd/system && perl -e 'sleep int(rand(3600))' && certbot -q renew --renew-hook "systemctl reload nginx"

The `\! -d /run/systemd/system` test skips the job on hosts booted with systemd, which includes Proxmox; drop that condition if you want this cron line to perform the renewal itself.

Great! Now we can reach our domain over HTTPS.

Note: to disable the subscription notice window, run this command:

sed -i.bak "s/data.status !== 'Active'/false/g" /usr/share/javascript/proxmox-widget-toolkit/proxmoxlib.js && systemctl restart pveproxy.service

Network settings

Before joining the cluster, let's configure the network interfaces on the hypervisor. Note that the configuration of the other nodes differs only in IP addresses and server names, so I will not repeat it.

Let's create a network bridge for the internal network so that our virtual machines (in my case an LXC container, for convenience) are, first, connected to the hypervisor's internal network and can talk to each other. Second, a little later we will add a bridge for the external network so that virtual machines can have their own external IP address. Accordingly, for now the containers will sit behind NAT.

You can work with the Proxmox network configuration in two ways: through the web interface or through the configuration file /etc/network/interfaces. With the first option you will need to reboot the server (or you can simply rename interfaces.new to interfaces and restart the networking service through systemd). If you are just starting the setup and there are no virtual machines or LXC containers yet, it is advisable to reboot the hypervisor after changes.

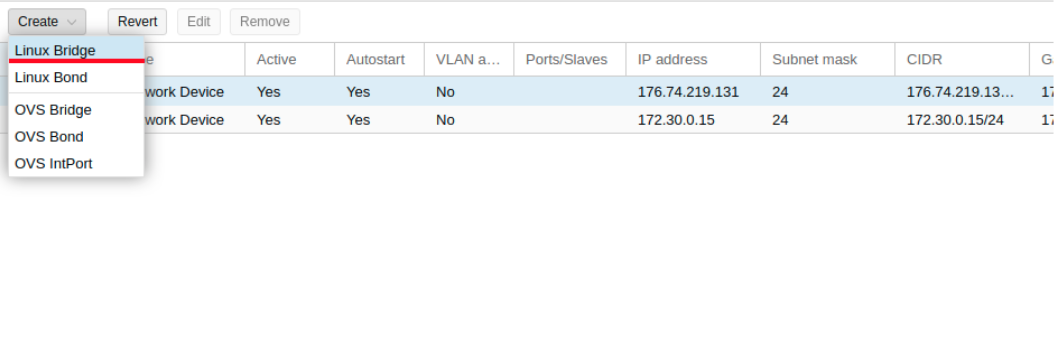

Теперь создадим сетевой мост под названием vmbr1 во вкладке network в веб-панели Proxmox.

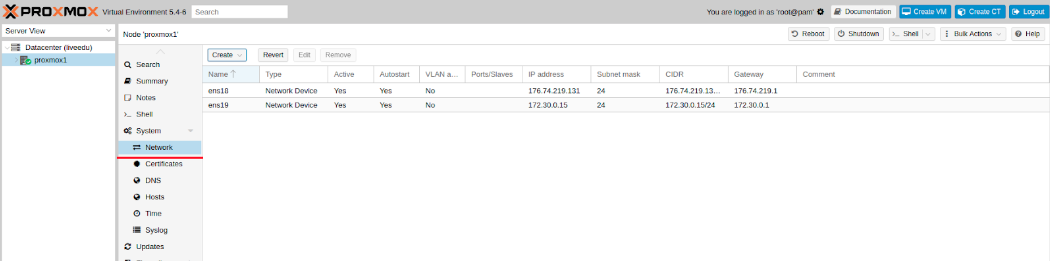

Изображение 2. Сетевые интерфейсы ноды proxmox1

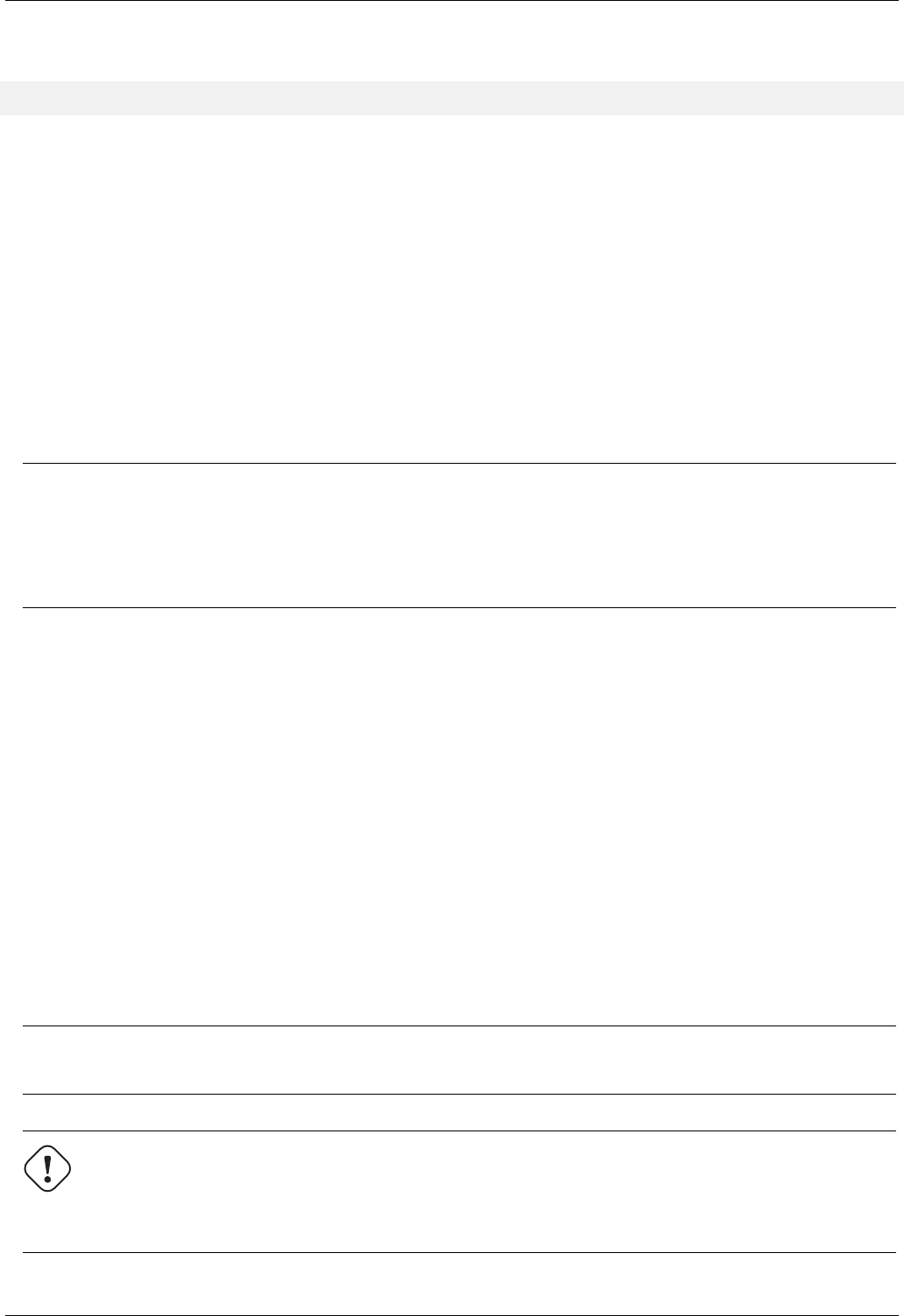

Изображение 3. Создание сетевого моста

Изображение 4. Настройка сетевой конфигурации vmbr1

Настройка предельно простая — vmbr1 нам нужен для того, чтобы инстансы получали доступ в Интернет.

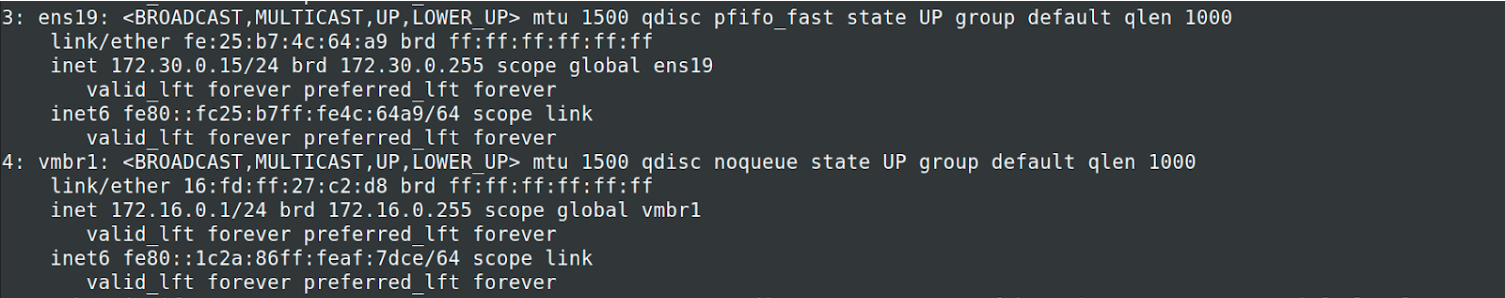

Now reboot the hypervisor and check that the interface was created:

Figure 5. The vmbr1 network interface in the output of ip a

Note: I already have an interface ens19. This is the interface on the internal network, and the cluster will be built on it.

Repeat these steps on the other two hypervisors, then proceed to the next step: preparing the cluster.

Another important step now is to enable packet forwarding; without it instances will not get access to the external network. Open the file sysctl.conf, change the value of net.ipv4.ip_forward to 1, and then run the following command:

sysctl -p

In the output you should see the net.ipv4.ip_forward directive (if you have not changed it before).
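A sketch of making that edit from the shell instead of an editor, assuming GNU sed as shipped with Debian. The helper name `set_sysctl_key` is hypothetical; it rewrites an existing (possibly commented-out) line for the key, or appends the setting if the key is not present.

```shell
#!/bin/sh
# Set KEY=VALUE in a sysctl-style file: rewrite an existing (possibly commented)
# line for the key, or append the setting if the key is absent.
set_sysctl_key() {
    file="$1"; key="$2"; value="$3"
    # Escape dots so the key is matched literally in the regular expressions.
    pattern=$(printf '%s' "$key" | sed 's/\./\\./g')
    if grep -Eq "^[#[:space:]]*${pattern}[[:space:]]*=" "$file"; then
        sed -i -E "s|^[#[:space:]]*${pattern}[[:space:]]*=.*|${key}=${value}|" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Usage on the hypervisor (as root):
#   set_sysctl_key /etc/sysctl.conf net.ipv4.ip_forward 1
#   sysctl -p
#   sysctl net.ipv4.ip_forward
```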

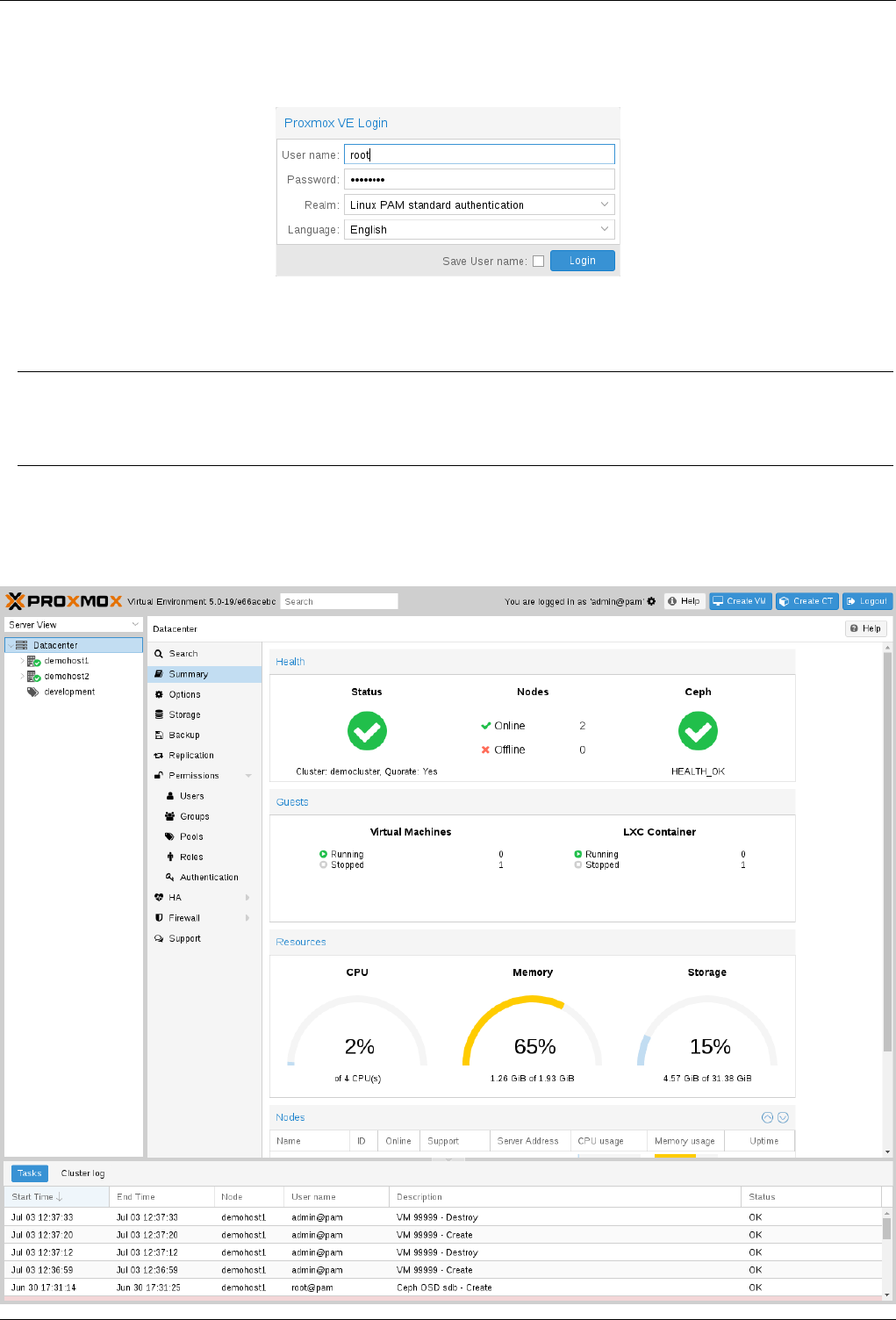

Configuring the Proxmox cluster

Now let's move on to the cluster itself. Each node must resolve itself and the other nodes over the internal network. To do this, change the hosts entries as follows (each node must have an entry for the others):

172.30.0.15 proxmox1.livelinux.info proxmox1

172.30.0.16 proxmox2.livelinux.info proxmox2

172.30.0.17 proxmox3.livelinux.info proxmox3

Также требуется добавить публичные ключи каждой ноды к остальным — это требуется для создания кластера.

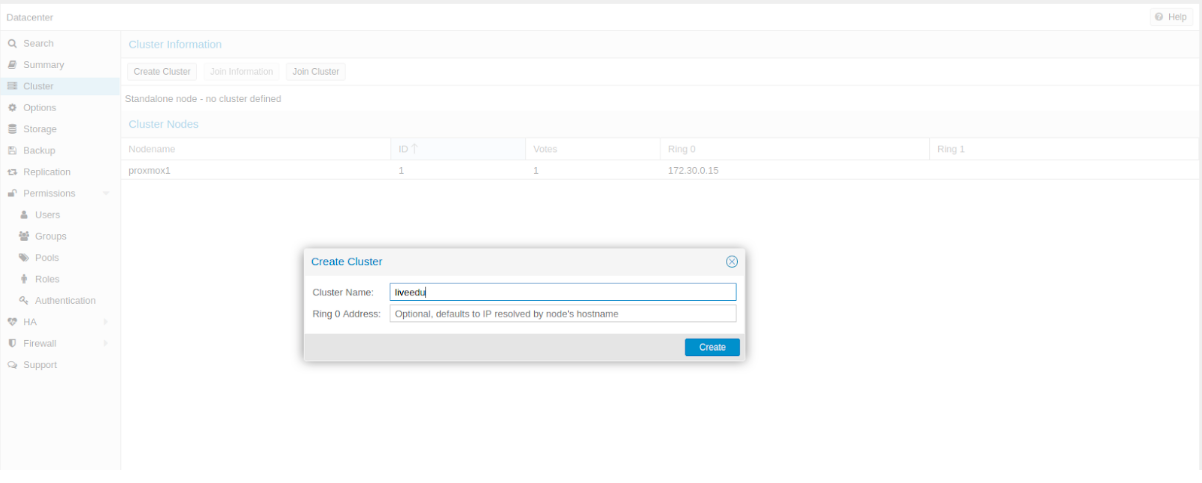

Создадим кластер через веб-панель:

Изображение 6. Создание кластера через веб-интерфейс

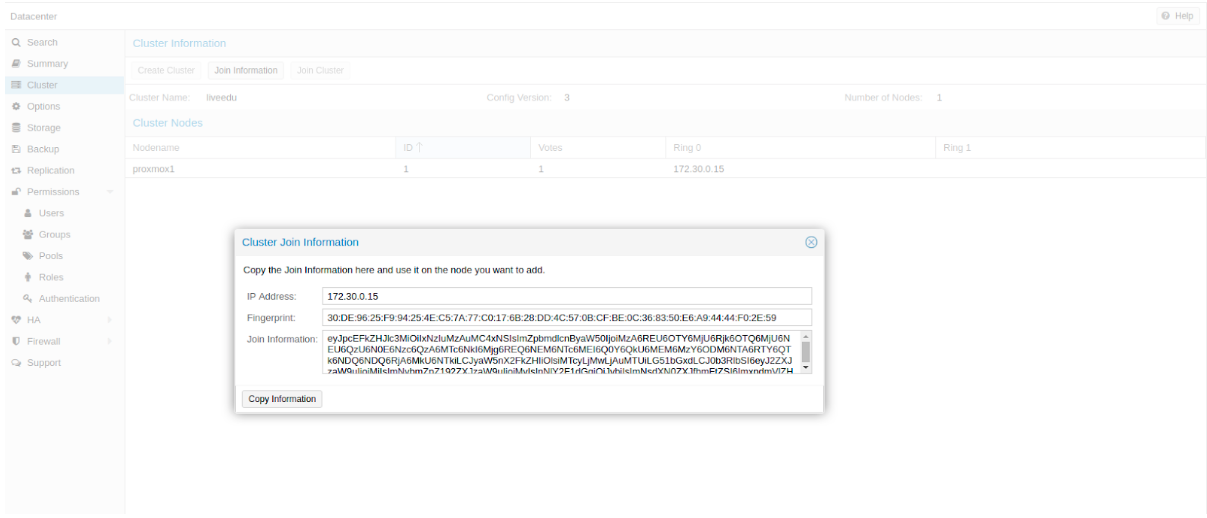

После создания кластера нам необходимо получить информацию о нем. Переходим в ту же вкладку кластера и нажимаем кнопку “Join Information”:

Изображение 7. Информация о созданном кластере

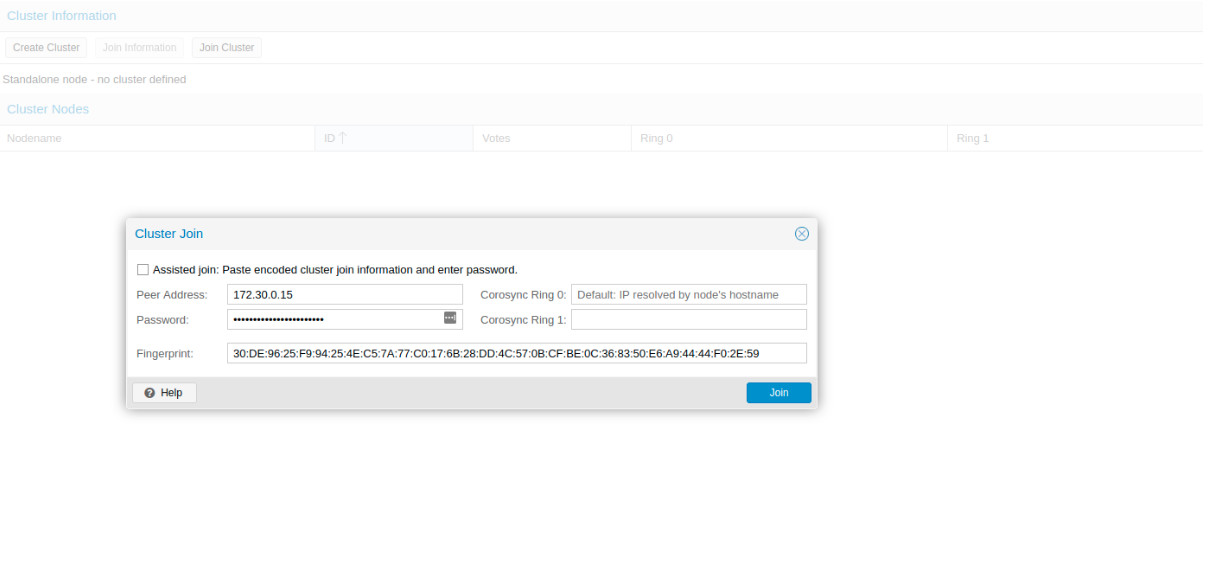

Данная информация пригодится нам во время присоединения второй и третьей ноды в кластер. Подключаемся к второй ноде и во вкладке Cluster нажимаем кнопку “Join Cluster”:

Изображение 8. Подключение к кластеру ноды

Разберем подробнее параметры для подключения:

- Peer Address: IP адрес первого сервера (к тому, к которому мы подключаемся)

- Password: пароль первого сервера

- Fingerprint: данное значение мы получаем из информации о кластере

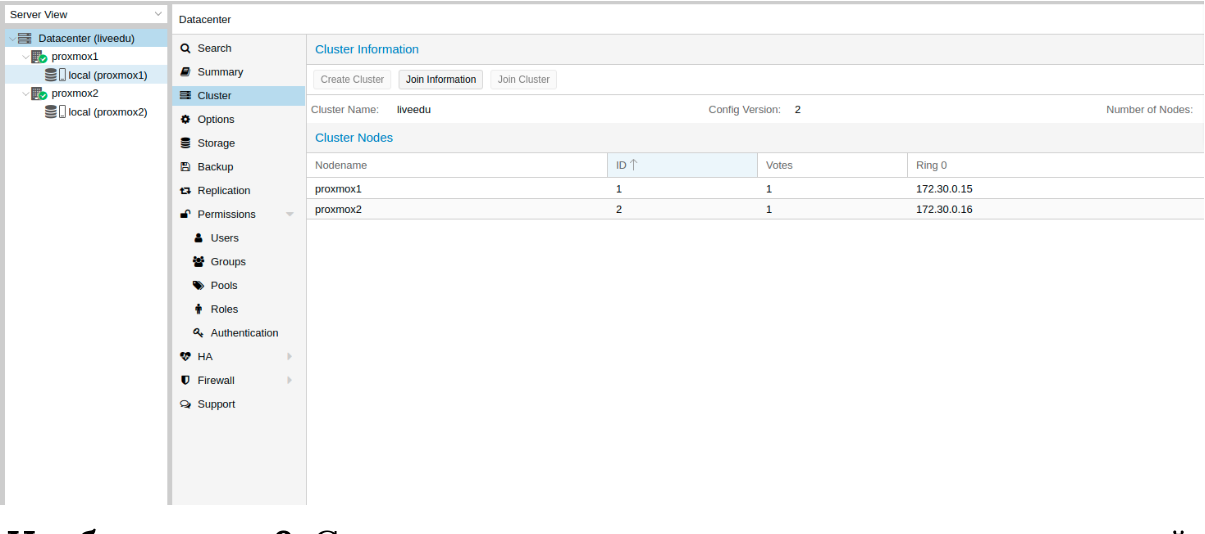

Figure 9. Cluster state after joining the second node

The second node has been joined successfully! However, this is not always the case. If you perform the steps incorrectly or network problems occur, joining the cluster will fail and the cluster itself will "fall apart". The best solution is to detach the node from the cluster, delete all information about the cluster on it, then reboot the server and check the previous steps. How do you safely remove a node from the cluster? First, delete it from the cluster on the first server:

pvecm delnode proxmox2

After that the node will be detached from the cluster. Now go to the broken node and stop the following services on it:

systemctl stop pvestatd.service

systemctl stop pvedaemon.service

systemctl stop pve-cluster.service

systemctl stop corosync

The Proxmox cluster stores information about itself in an SQLite database, which also needs to be cleaned:

sqlite3 /var/lib/pve-cluster/config.db

delete from tree where name = 'corosync.conf';

.quit

The corosync data has been deleted. Now delete the remaining files; to do this, start the cluster file system in standalone (local) mode:

pmxcfs -l

rm /etc/pve/corosync.conf

rm /etc/corosync/*

rm /var/lib/corosync/*

rm -rf /etc/pve/nodes/*

Reboot the server (this is optional, but we play it safe: in the end all services must be running and working correctly, so we reboot to avoid missing anything). After it comes back up we get an empty node without any information about the previous cluster and can start joining again.

Installing and configuring ZFS

ZFS is a file system that can be used together with Proxmox. With it you can replicate data to another hypervisor, migrate a virtual machine or LXC container, access an LXC container from the host system, and so on. Installing it is fairly simple; let's walk through it. My servers have three SSDs available, which we will combine into a RAID array.

Add the repositories:

nano /etc/apt/sources.list.d/stretch-backports.list

deb http://deb.debian.org/debian stretch-backports main contrib

deb-src http://deb.debian.org/debian stretch-backports main contrib

nano /etc/apt/preferences.d/90_zfs

Package: libnvpair1linux libuutil1linux libzfs2linux libzpool2linux spl-dkms zfs-dkms zfs-test zfsutils-linux zfsutils-linux-dev zfs-zed

Pin: release n=stretch-backports

Pin-Priority: 990

Update the package list:

apt update

Install the required dependencies:

apt install --yes dpkg-dev linux-headers-$(uname -r) linux-image-amd64

Install ZFS itself:

apt-get install zfs-dkms zfsutils-linux

If you later get the error "fusermount: fuse device not found, try 'modprobe fuse' first", run the following command:

modprobe fuse

Now let's move on to the configuration. First we need to format the SSDs and set them up with parted:

Configuring /dev/sda

parted /dev/sda

(parted) print

Model: ATA SAMSUNG MZ7LM480 (scsi)

Disk /dev/sda: 480GB

Sector size (logical/physical): 512B/512B

Partition Table: msdos

Disk Flags:

Number Start End Size Type File system Flags

1 1049kB 4296MB 4295MB primary raid

2 4296MB 4833MB 537MB primary raid

3 4833MB 37,0GB 32,2GB primary raid

(parted) mkpart

Partition type? primary/extended? primary

File system type? [ext2]? zfs

Start? 33GB

End? 480GB

Warning: You requested a partition from 33,0GB to 480GB (sectors 64453125..937500000).

The closest location we can manage is 37,0GB to 480GB (sectors 72353792..937703087).

Is this still acceptable to you?

Yes/No? yes

The same steps must be performed for the other disks. Once all disks are prepared, proceed to the next step:

zpool create -f -o ashift=12 rpool /dev/sda4 /dev/sdb4 /dev/sdc4

We choose ashift=12 for performance reasons. This is the recommendation of zfsonlinux itself; you can read more in their wiki: github.com/zfsonlinux/zfs/wiki/faq#performance-considerations

Apply some settings to ZFS:

zfs set atime=off rpool

zfs set compression=lz4 rpool

zfs set dedup=off rpool

zfs set snapdir=visible rpool

zfs set primarycache=all rpool

zfs set aclinherit=passthrough rpool

zfs inherit acltype rpool

zfs get -r acltype rpool

zfs get all rpool | grep compressratio

Now we need to calculate a few variables to work out zfs_arc_max. I do it as follows:

mem=`free --giga | grep Mem | awk '{print $2}'`

partofmem=$(($mem/10))

# Assumption: the original omits this line; convert the result from GB to bytes, the unit zfs_arc_max expects

setzfscache=$(($partofmem*1024*1024*1024))

echo $setzfscache > /sys/module/zfs/parameters/zfs_arc_max

grep c_max /proc/spl/kstat/zfs/arcstats

zfs create rpool/data

cat > /etc/modprobe.d/zfs.conf << EOL

options zfs zfs_arc_max=$setzfscache

EOL

At this point the pool has been created, and we have also created the data dataset (the "subpool") inside it. You can check the state of your pool with zpool status. This must be done on all hypervisors before proceeding to the next step.
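The arithmetic behind zfs_arc_max can be checked in isolation. This sketch takes one tenth of the memory size in whole GB and converts it to bytes; the function name is hypothetical and the input of 64 is only an example, not a value from the article.

```shell
#!/bin/sh
# Return one tenth of the given memory size (in whole GB) as a byte count,
# which is the unit zfs_arc_max is expressed in.
arc_max_bytes() {
    mem_gb="$1"
    echo $(( mem_gb / 10 * 1024 * 1024 * 1024 ))
}

arc_max_bytes 64   # integer division gives 6, so this prints 6 GiB in bytes
```

Because the division is integer division, a host with less than 10 GB of RAM yields 0, and a zfs_arc_max of 0 leaves ZFS on its built-in default limit rather than applying a cap.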

Now add ZFS to Proxmox. Go to the datacenter settings (the datacenter, not an individual node), open the "Storage" section, click "Add" and choose "ZFS". You will see the following parameters:

ID: the storage name. I named it local-zfs

ZFS Pool: we created rpool/data, so we add it here.

Nodes: select all available nodes

This makes the pool built from our selected disks available to Proxmox. A new storage named local-zfs should appear on every hypervisor, after which you can migrate your virtual machines from local storage to ZFS.

Replicating instances to a neighboring hypervisor

A Proxmox cluster can replicate data from one hypervisor to another, which makes it possible to switch an instance from one server to another. The data will be current as of the last synchronization; its interval can be set when creating the replication (the default is 15 minutes). There are two ways to migrate an instance to another Proxmox node: manual and automatic. Let's look at the manual option first, and at the end I will provide a Python script that creates a virtual machine on an available hypervisor when one of the hypervisors is unavailable.

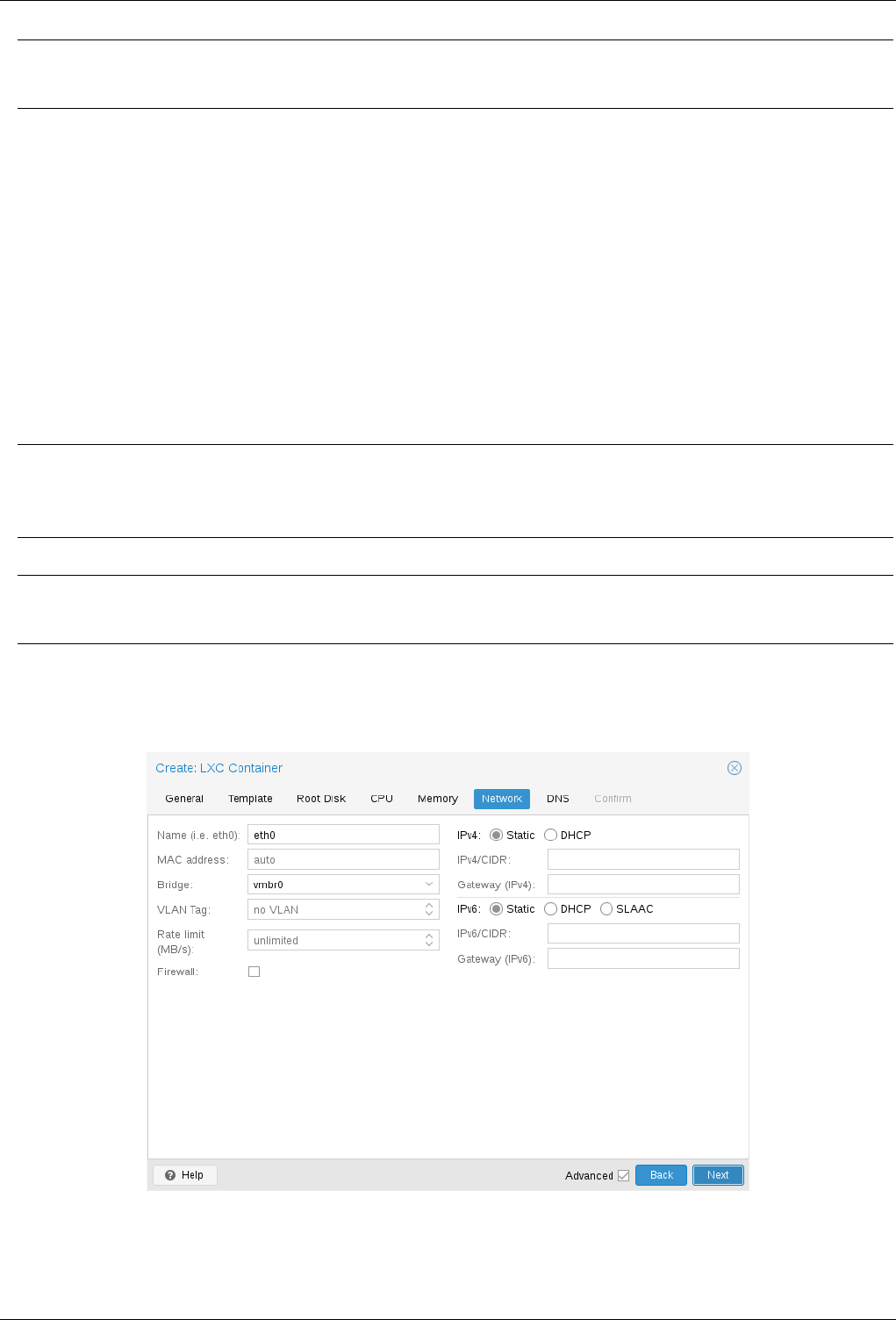

To create a replication, go to the Proxmox web panel and create a virtual machine or LXC container. In the previous sections we configured the vmbr1 bridge with NAT, which lets us reach the external network. I will create an LXC container with MySQL, Nginx and PHP-FPM and a test site to check that replication works. Step-by-step instructions follow.

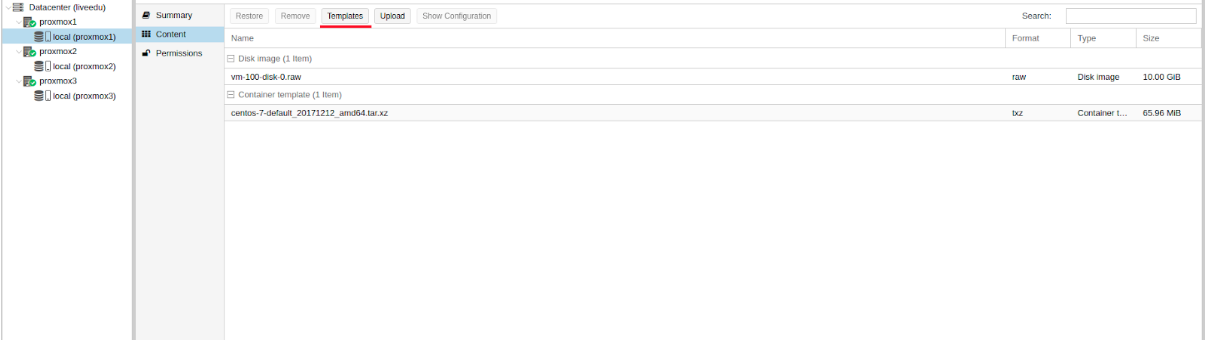

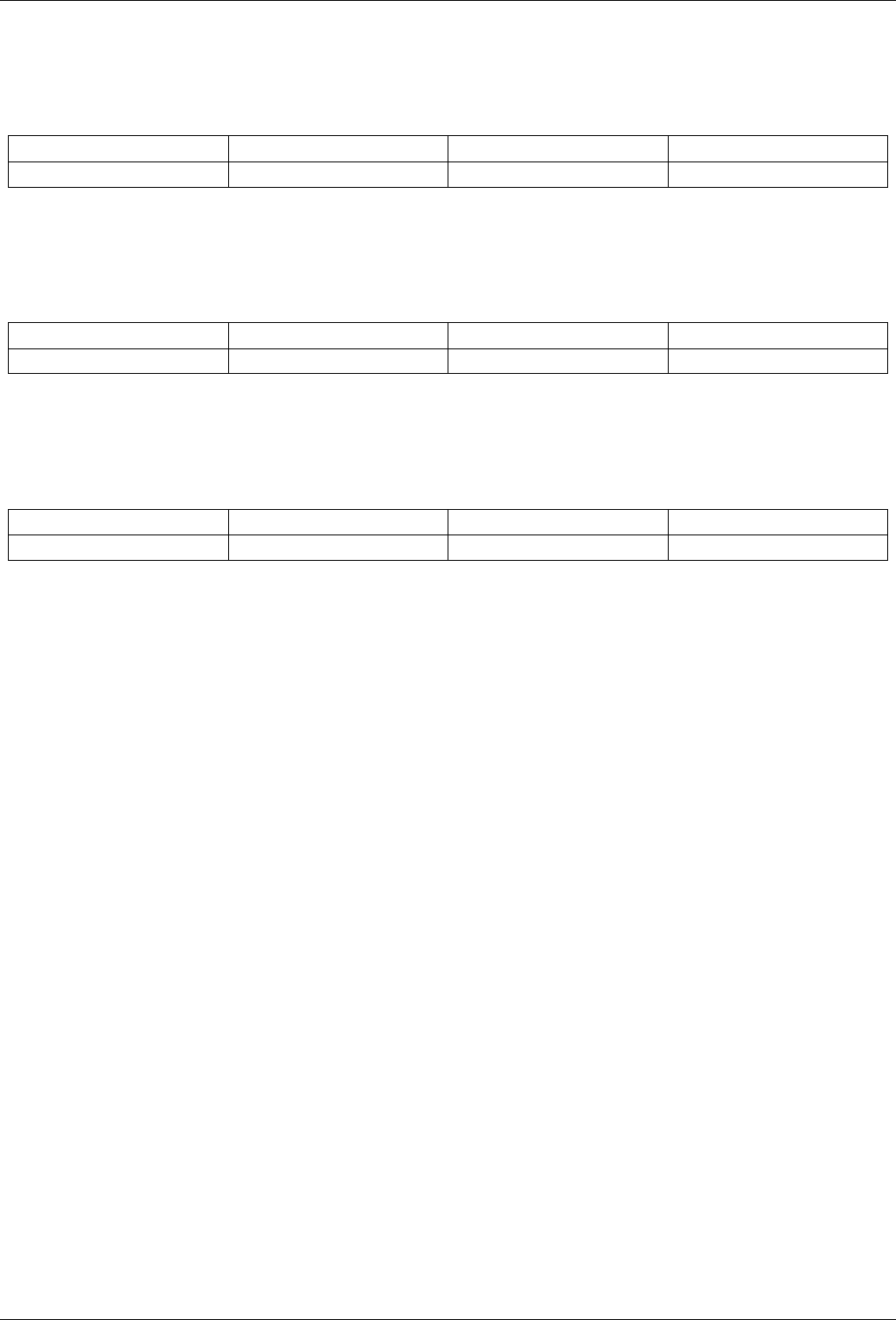

Загружаем подходящий темплейт (переходим в storage —> Content —> Templates), пример на скриншоте:

Изображение 10. Local storage с шаблонами и образами ВМ

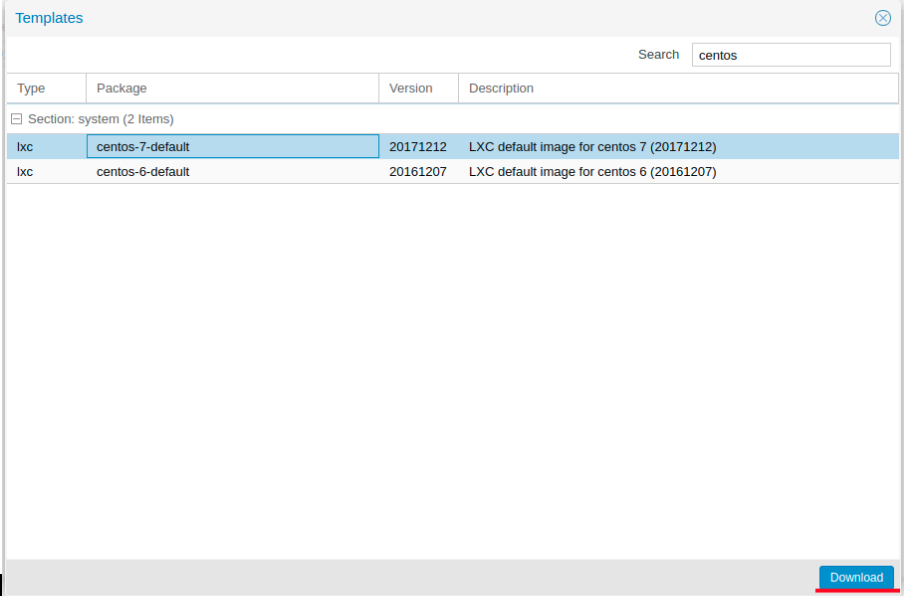

Нажимаем кнопку “Templates” и загружаем необходимый нам шаблон LXC контейнера:

Изображение 11. Выбор и загрузка шаблона

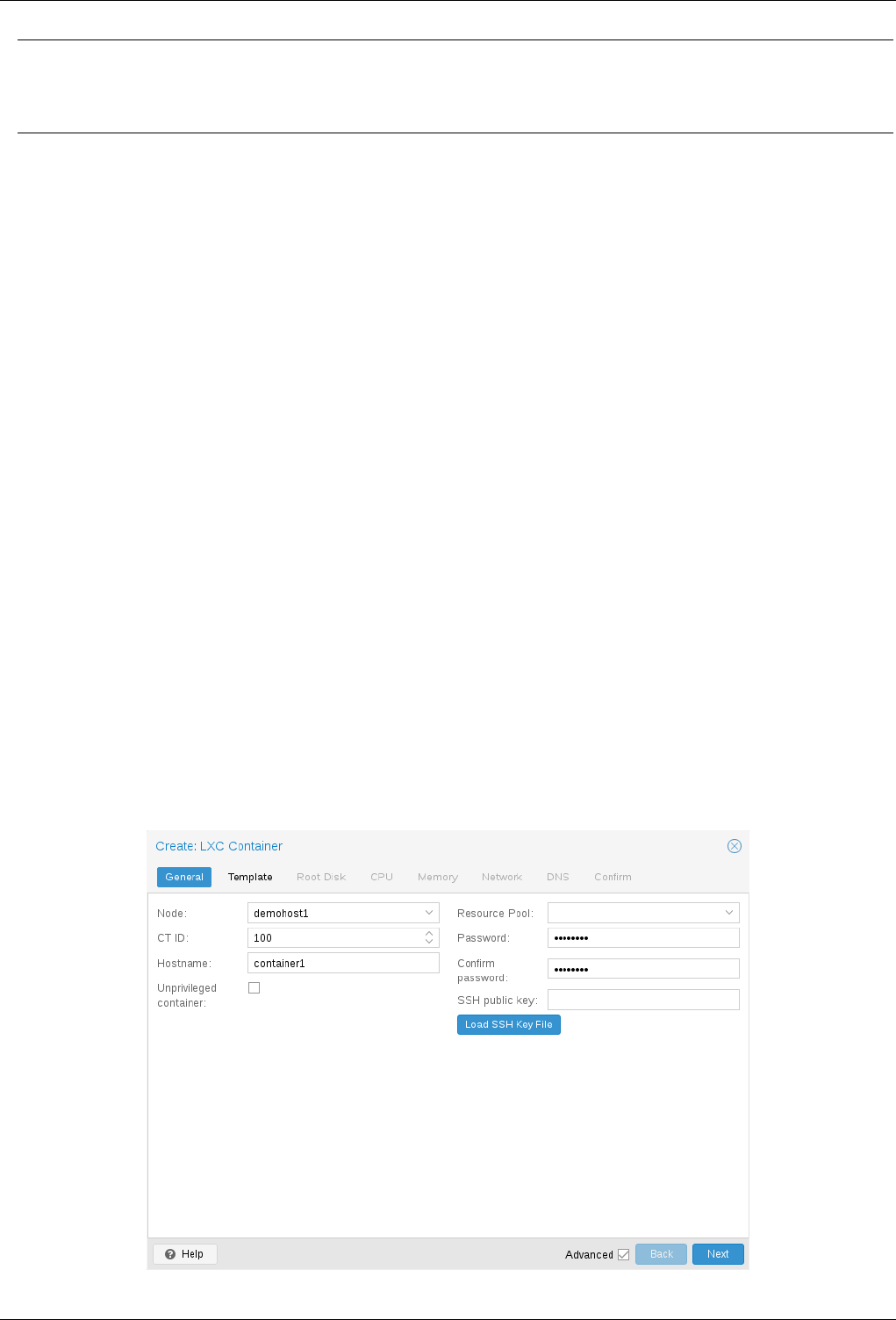

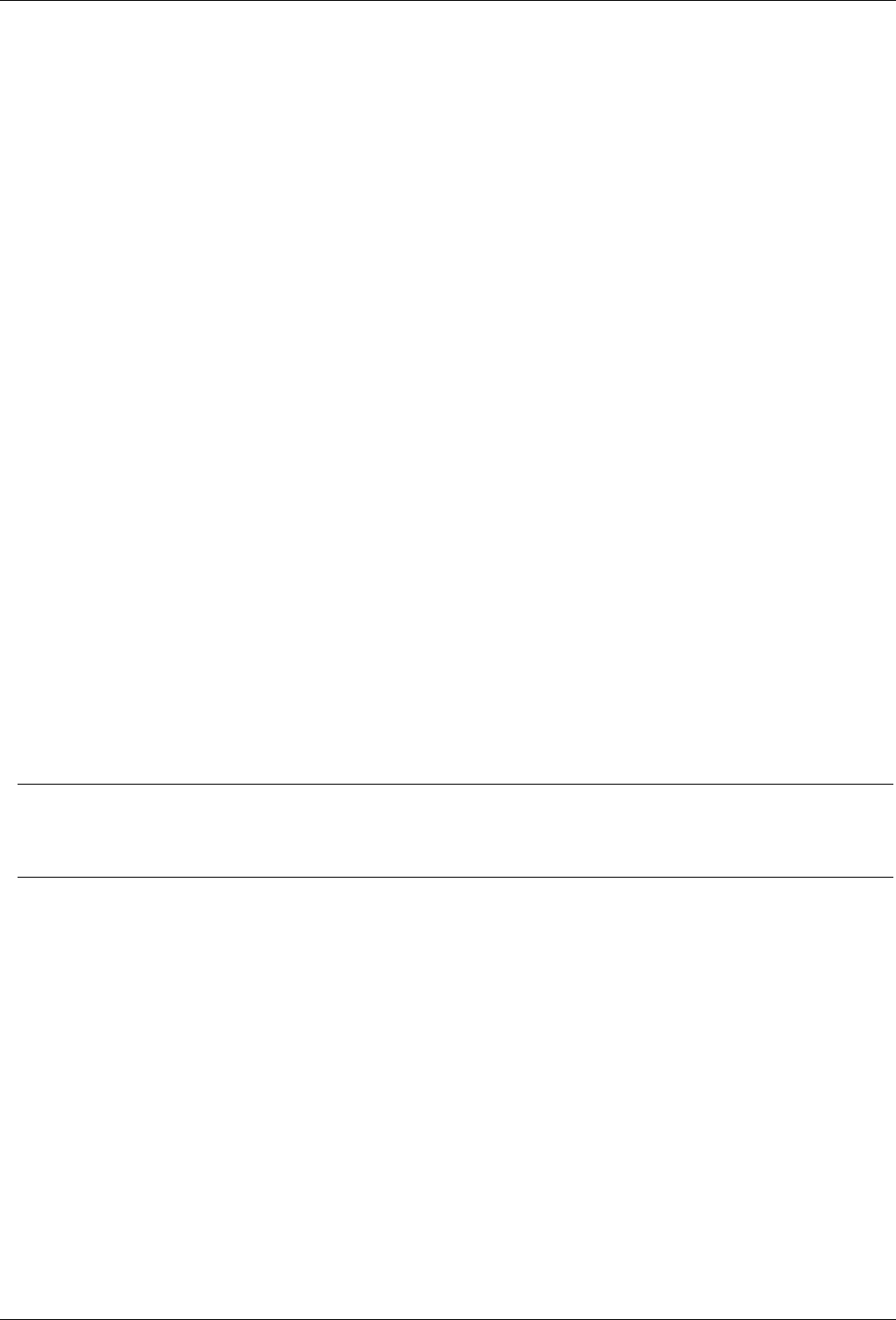

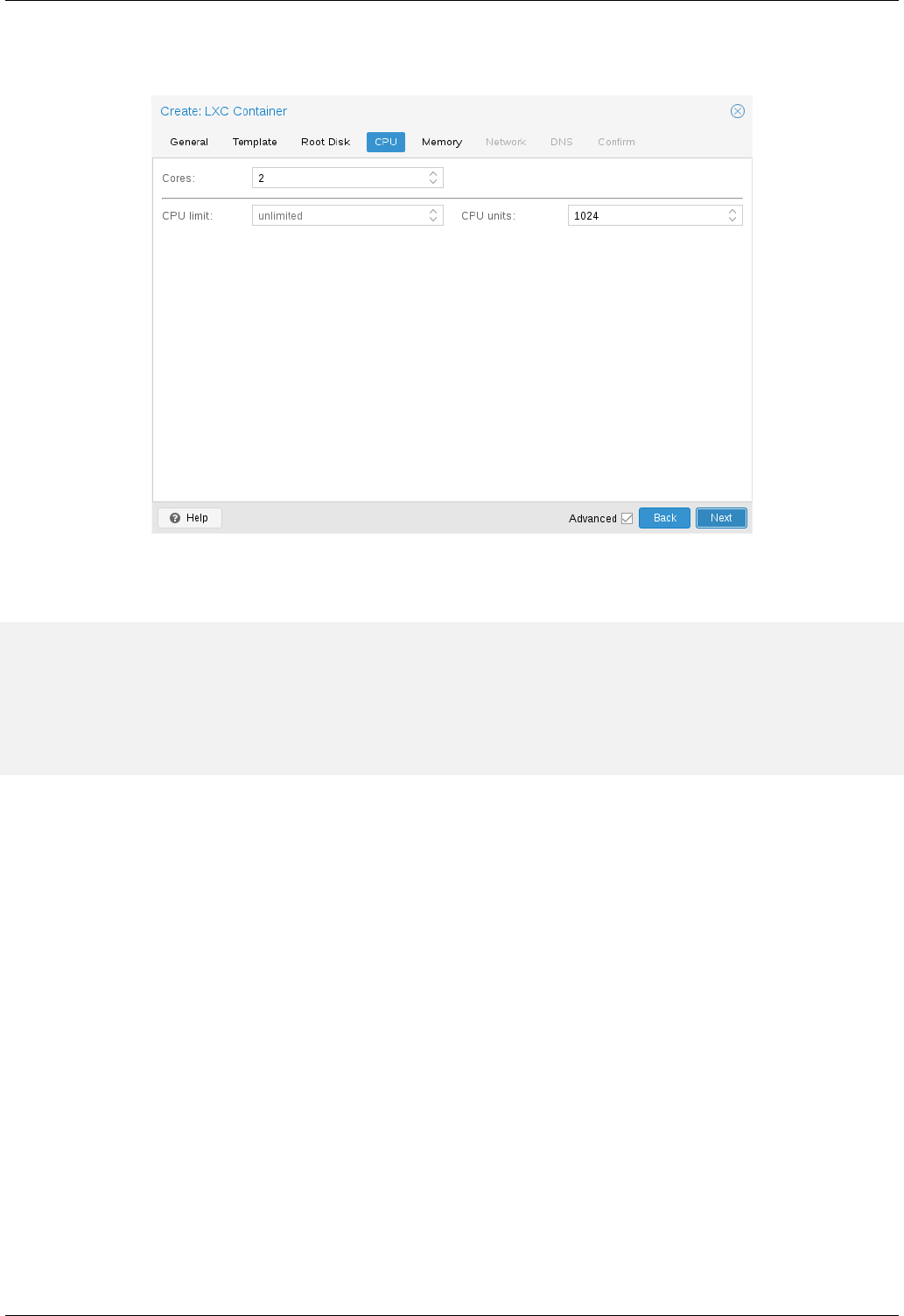

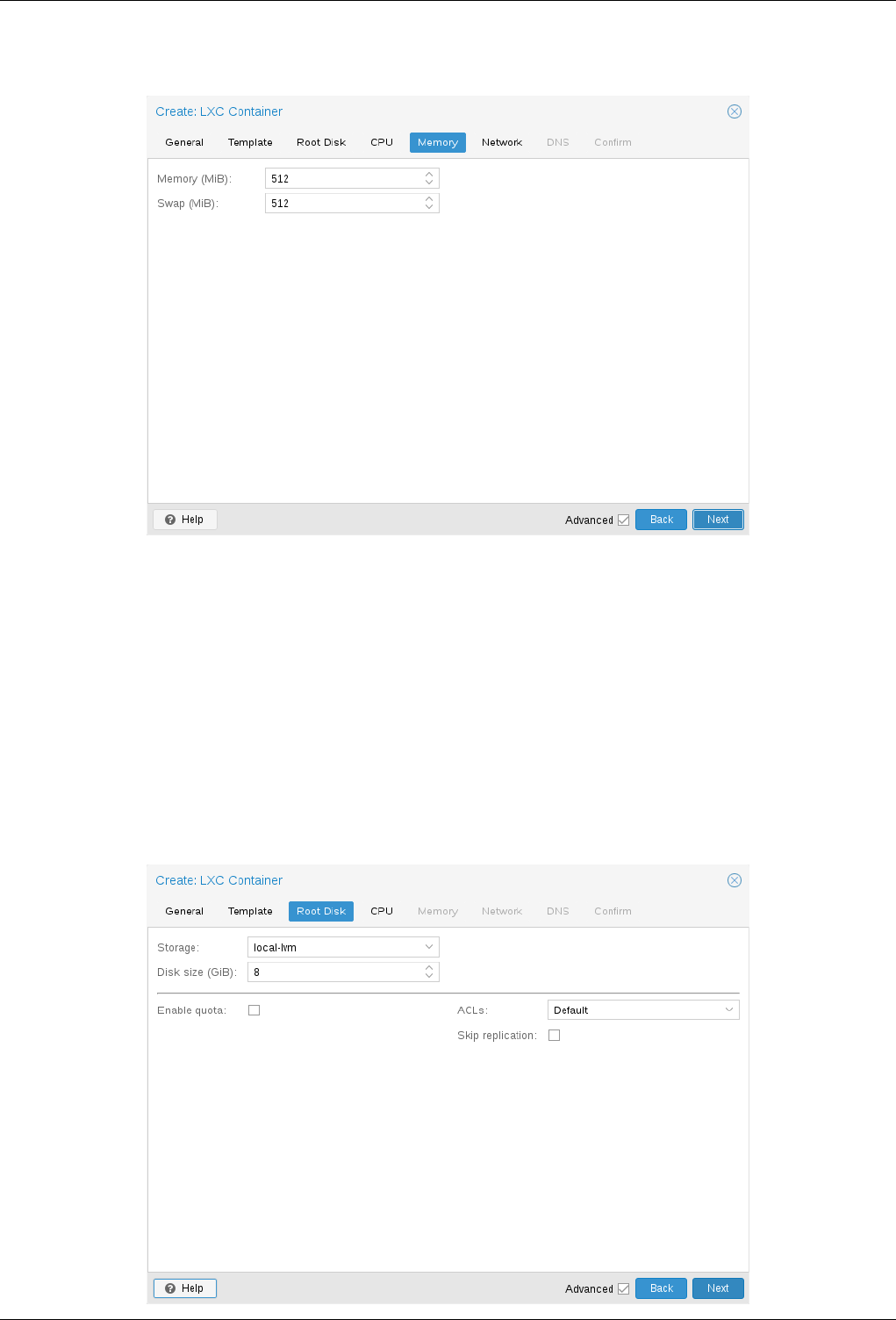

Now we can use it when creating new LXC containers. Select the first hypervisor and click "Create CT" in the upper right corner: the panel for creating a new instance appears. The installation steps are fairly simple, so I will only show the configuration file of this LXC container:

arch: amd64

cores: 3

memory: 2048

nameserver: 8.8.8.8

net0: name=eth0,bridge=vmbr1,firewall=1,gw=172.16.0.1,hwaddr=D6:60:C5:39:98:A0,ip=172.16.0.2/24,type=veth

ostype: centos

rootfs: local:100/vm-100-disk-1.raw,size=10G

swap: 512

unprivileged:

Контейнер успешно создан. К LXC контейнерам можно подключаться через команду pct enter , я также перед установкой добавил SSH ключ гипервизора, чтобы подключаться напрямую через SSH (в PCT есть небольшие проблемы с отображением терминала). Я подготовил сервер и установил туда все необходимые серверные приложения, теперь можно перейти к созданию репликации.

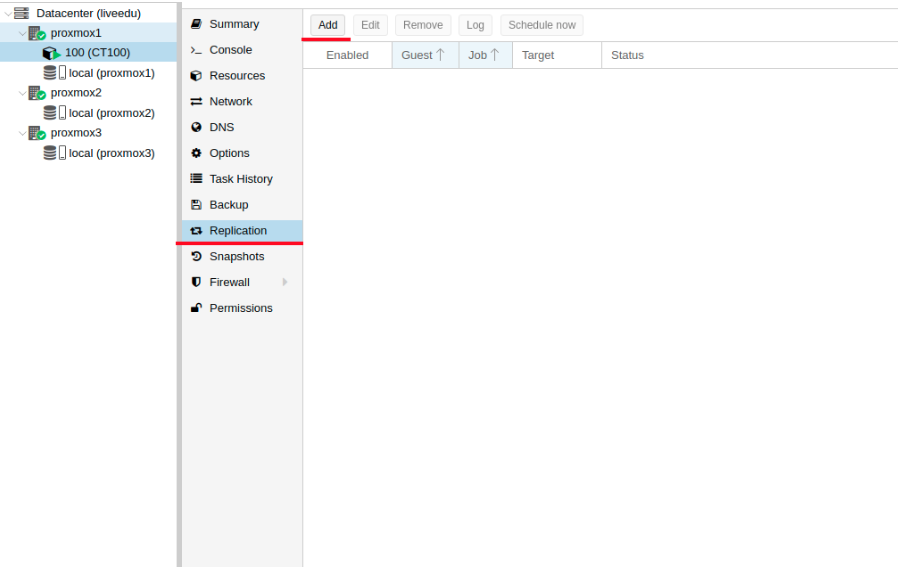

Кликаем на LXC контейнер и переходим во вкладку “Replication”, где создаем параметр репликации с помощью кнопки “Add”:

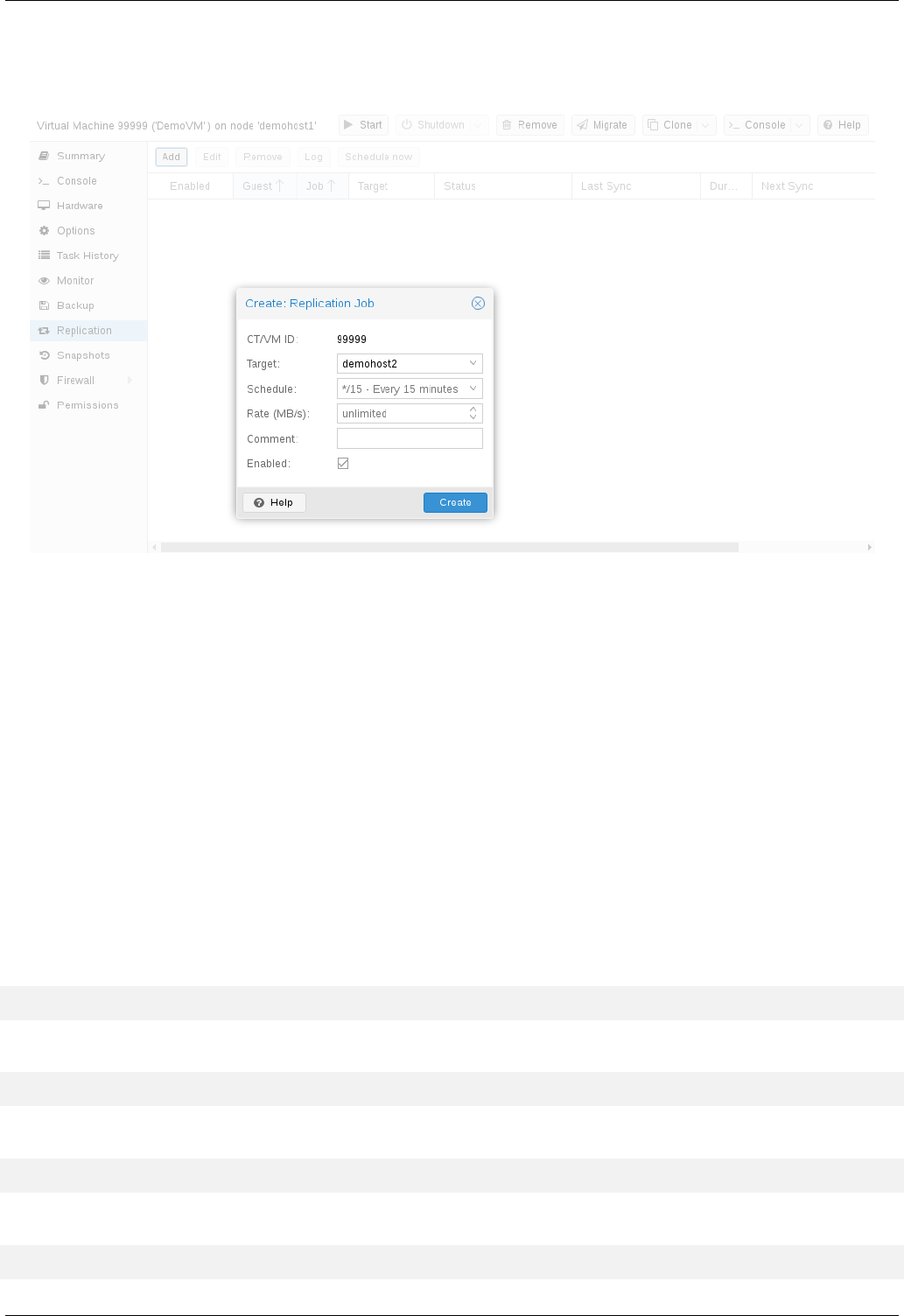

Изображение 12. Создание репликации в интерфейсе Proxmox

Изображение 13. Окно создания Replication job

Я создал задачу реплицировать контейнер на вторую ноду, как видно на следующем скриншоте репликация прошла успешно — обращайте внимание на поле “Status”, она оповещает о статусе репликации, также стоит обращать внимание на поле “Duration”, чтобы знать, сколько длится репликация данных.

Изображение 14. Список синхронизаций ВМ

Теперь попробуем смигрировать машину на вторую ноду с помощью кнопки “Migrate”

Начнется миграция контейнера, лог можно просмотреть в списке задач — там будет наша миграция. После этого контейнер будет перемещен на вторую ноду.

The "Host Key Verification Failed" error

This problem can sometimes occur when setting up a cluster. It prevents migrating machines and creating replications, which negates the advantages of a cluster. To fix this error, delete the known_hosts file and connect to the conflicting node over SSH:

/usr/bin/ssh -o 'HostKeyAlias=proxmox2' root@172.30.0.16

Accept the host key and try this command; it should connect you to the server:

/usr/bin/ssh -o 'BatchMode=yes' -o 'HostKeyAlias=proxmox2' root@172.30.0.16

Network configuration specifics on Hetzner

Go to the Robot panel and click "Virtual Switches". On the next page you will see the panel for creating and managing Virtual Switch interfaces: first you need to create one, and then "connect" dedicated servers to it. Use the search to add the servers you want to connect. They do not need to be rebooted; you only have to wait up to 10-15 minutes until the connection to the Virtual Switch becomes active.

After adding the servers to the Virtual Switch through the web panel, connect to the servers and open the network interface configuration files, where we create a new network interface:

auto enp4s0.4000

iface enp4s0.4000 inet static

address 10.1.0.11/24

mtu 1400

vlan-raw-device enp4s0

Let's look at what this is. In essence it is a VLAN attached to the single physical interface named enp4s0 (yours may differ), with the VLAN number specified; this is the number of the Virtual Switch you created in the Hetzner Robot web panel. You can use any address, as long as it is a private one.

Note that enp4s0 should be configured as usual; it should hold the external IP address that was assigned to your physical server. Repeat these steps on the other hypervisors, then restart the networking service on them and ping the neighboring node by its Virtual Switch IP address. If the ping succeeds, you have established a connection between the servers over the Virtual Switch.

I am also attaching the sysctl.conf configuration file; you will need it if you have problems with packet forwarding and other network parameters:

net.ipv6.conf.all.disable_ipv6=0

net.ipv6.conf.default.disable_ipv6 = 0

net.ipv6.conf.all.forwarding=1

net.ipv4.conf.all.rp_filter=1

net.ipv4.tcp_syncookies=1

net.ipv4.ip_forward=1

net.ipv4.conf.all.send_redirects=0

Adding an IPv4 subnet in Hetzner

Before starting, you need to order a subnet from Hetzner; you can do this through the Robot panel.

Create a network bridge with an address from this subnet. Example configuration:

auto vmbr2

iface vmbr2 inet static

address ip-address

netmask 29

bridge-ports none

bridge-stp off

bridge-fd 0

Now go to the virtual machine's settings in Proxmox and create a new network interface attached to the vmbr2 bridge. I use an LXC container, whose configuration can be changed directly in Proxmox. Final configuration for Debian:

auto eth0

iface eth0 inet static

address ip-address

netmask 26

gateway bridge-address

Note: I specified a /26 mask, not /29; this is required for networking to work on the virtual machine.

Adding an IPv4 address in Hetzner

The situation with a single IP address is different: Hetzner usually gives us an additional address from the server's subnet. This means that instead of vmbr2 we need to use vmbr0, but we do not have it yet. The point is that vmbr0 must hold the IP address of the bare-metal server (that is, use the address that the physical network interface enp2s0 used). The address has to be moved to vmbr0; the following configuration will do (I recommend ordering a KVM console so that you can restore networking if something goes wrong):

auto enp2s0

iface enp2s0 inet manual

auto vmbr0

iface vmbr0 inet static

address ip-address

netmask 255.255.255.192

gateway ip-gateway

bridge-ports enp2s0

bridge-stp off

bridge-fd 0

Reboot the server if possible (if not, restart the networking service), then check the network interfaces with ip a:

2: enp2s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master vmbr0 state UP group default qlen 1000

link/ether 44:8a:5b:2c:30:c2 brd ff:ff:ff:ff:ff:ff

Как здесь видно, enp2s0 подключен к vmbr0 и не имеет IP адрес, так как он был переназначен на vmbr0.

Теперь в настройках виртуальной машины добавляем сетевой интерфейс, который будет подключен к vmbr0. В качестве gateway укажите адрес, прикрепленный к vmbr0.

В завершении

Надеюсь, что данная статья пригодится вам, когда вы будете настраивать Proxmox кластер в Hetzner. Если позволит время, то я расширю статью и добавлю инструкцию для OVH — там тоже не все очевидно, как кажется на первый взгляд. Материал получился достаточно объемным, если найдете ошибки, то, пожалуйста, напишите в комментарии, я их исправлю. Всем спасибо за уделенное внимание.

Автор: Илья Андреев, под редакцией Алексея Жадан и команды «Лайв Линукс»

If the Proxmox web interface stops responding while a task is running, you can view the task's status and log with the pvesh utility.

In general, it is done like this:

pvesh get nodes/<NODE>/tasks/<UPID>/log

pvesh get nodes/<NODE>/tasks/<UPID>/status

The node name can be looked up like this:

pvesh get nodes

To find the UPID, list the tasks like this:

pvesh get nodes/proxmox/tasks/

The resulting commands will look something like this:

pvesh get nodes/proxmox/tasks/UPID:proxmox:000265EB:112BC1FC:60480914:aptupdate::root@pam:/log

pvesh get nodes/proxmox/tasks/UPID:proxmox:000265EB:112BC1FC:60480914:aptupdate::root@pam:/status
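The two commands above differ only in the final path component, so the path can be assembled from the node name and the UPID. The following is a minimal sketch; the helper names task_path and upid_node are hypothetical, and reading the node name from the second colon-separated field of the UPID is an assumption based on the example UPID shown above.

```shell
# task_path NODE UPID WHAT
# Print the pvesh API path for a task's "log" or "status".
# Hypothetical helper; it only builds the string and does not call pvesh.
task_path() {
    case "$3" in
        log|status) echo "nodes/$1/tasks/$2/$3" ;;
        *) echo "expected 'log' or 'status', got: $3" >&2; return 1 ;;
    esac
}

# upid_node UPID
# Print the node name embedded in a UPID (assumed to be field 2,
# as in UPID:proxmox:000265EB:...).
upid_node() {
    printf '%s\n' "$1" | cut -d: -f2
}
```

On a Proxmox node this could be used as `pvesh get "$(task_path "$(upid_node "$UPID")" "$UPID" status)"`, where UPID holds a value copied from the task list.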

- proxmox/proxmox_get_task_status_from_cli.txt

- Last modified: 2021/03/10 08:57

- by admin


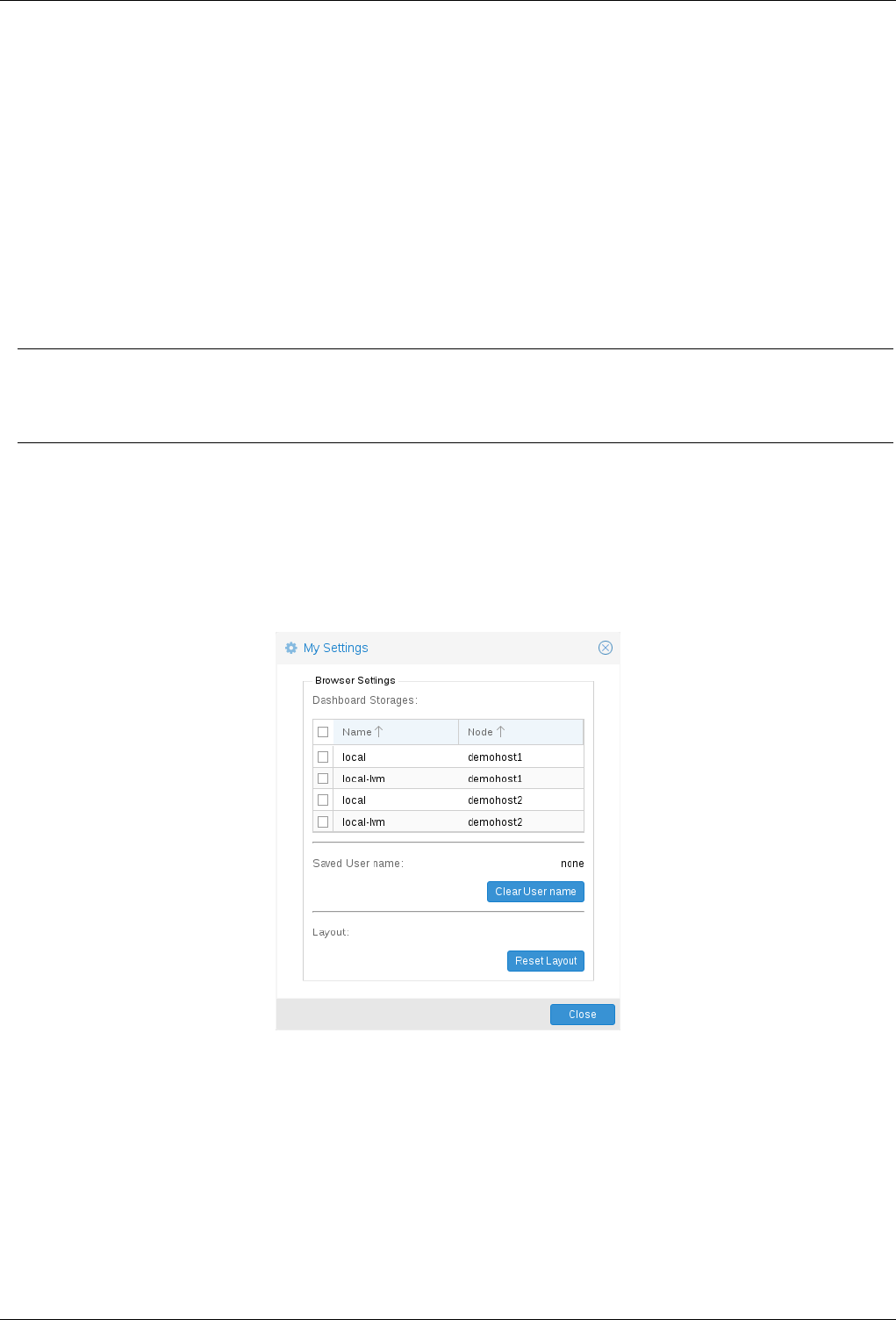

PROXMOX VE ADMINISTRATION GUIDE

RELEASE 5.2

August 8, 2018

Proxmox Server Solutions Gmbh

www.proxmox.com

Proxmox VE Administration Guide ii

Copyright ©2017 Proxmox Server Solutions Gmbh

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.3 or any later version published by the Free Software Foundation; with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts.

A copy of the license is included in the section entitled "GNU Free Documentation License".


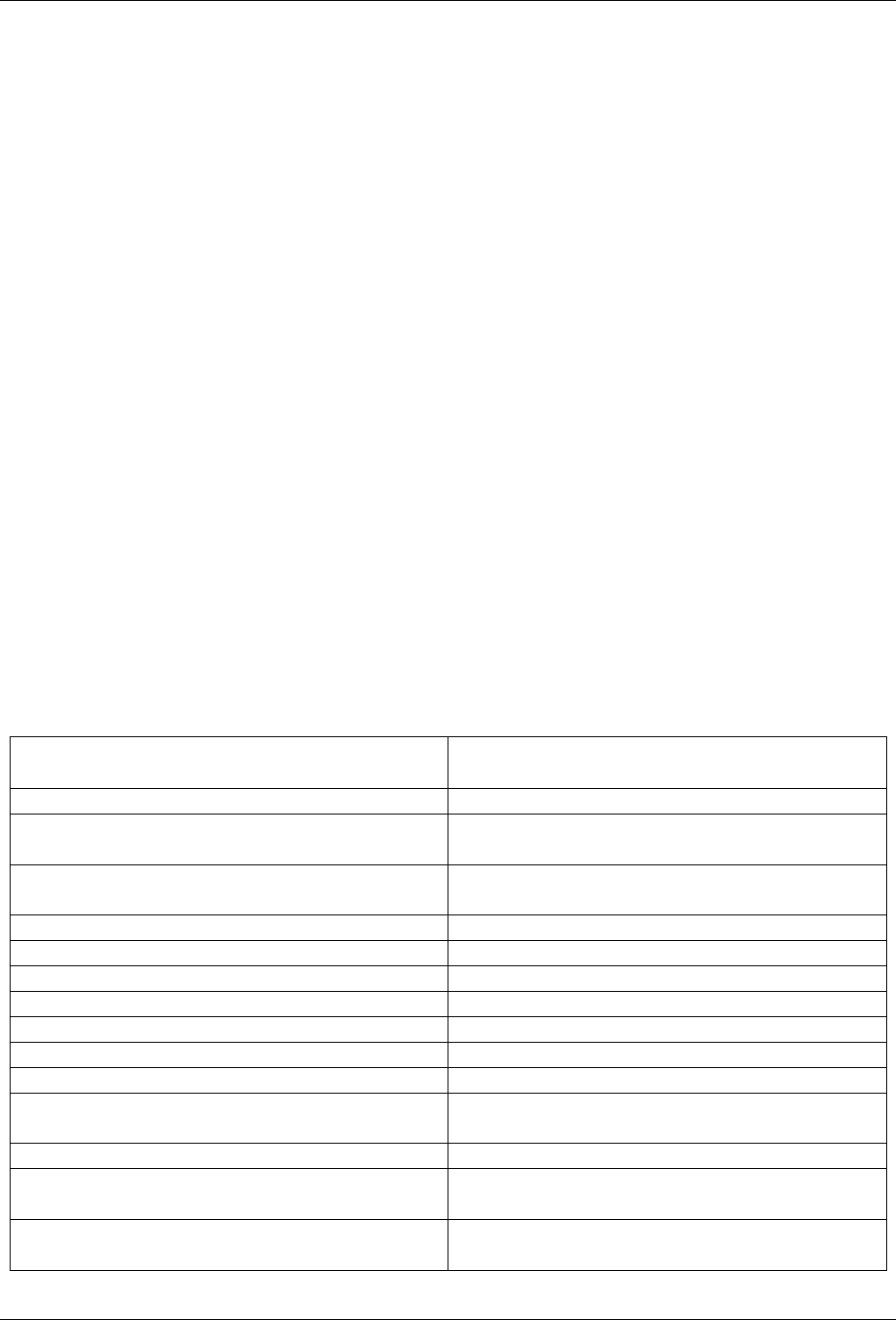

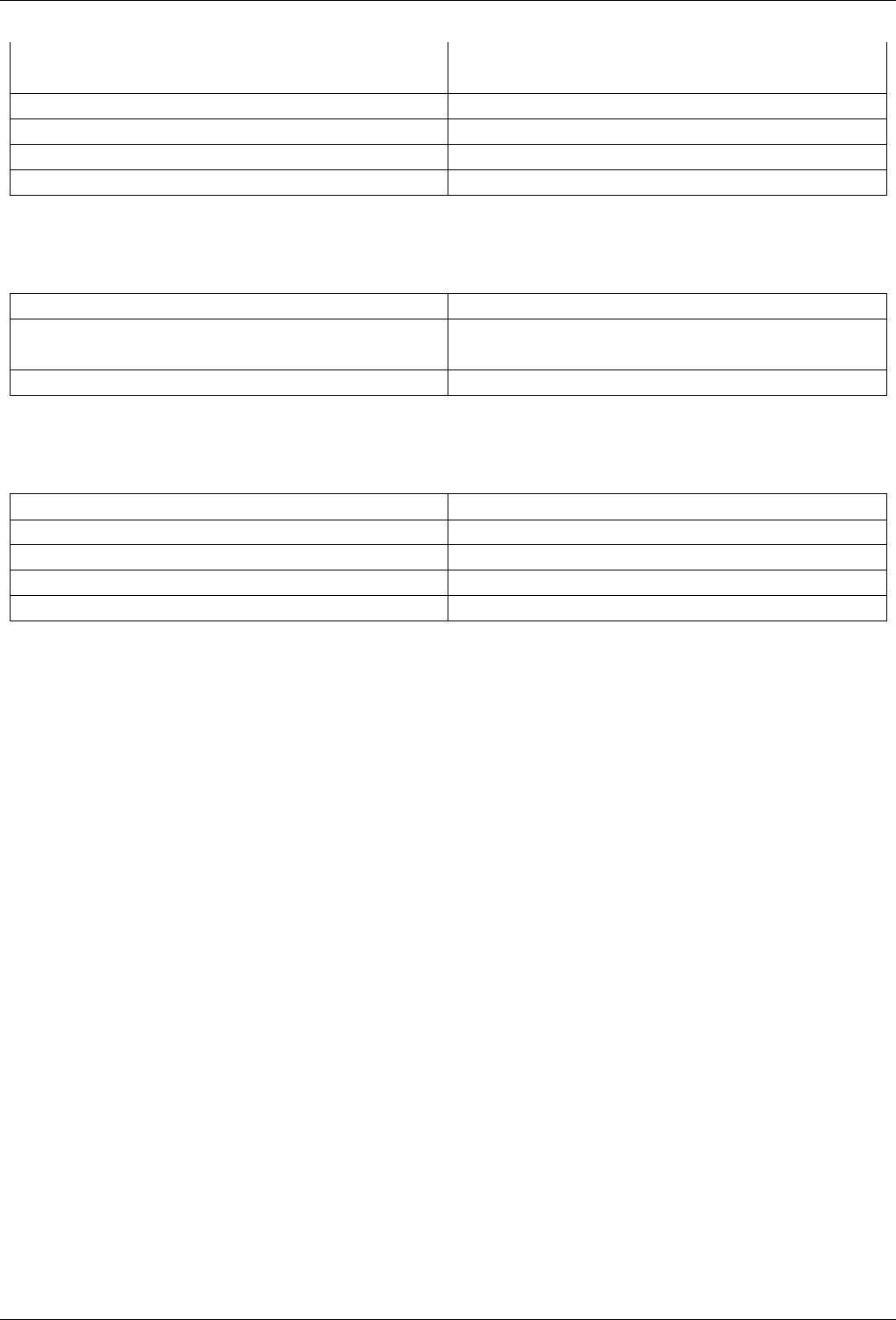

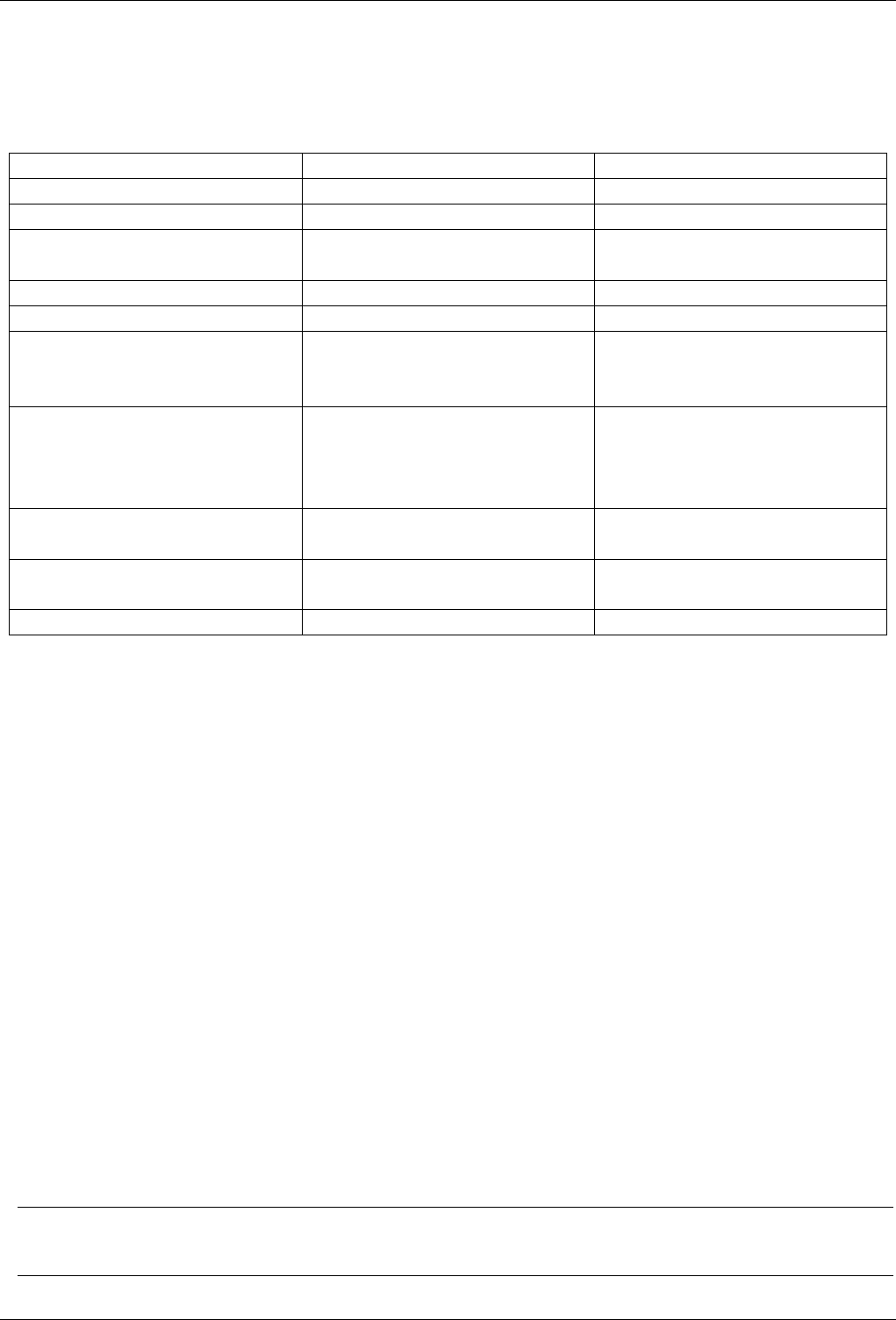

Contents

1 Introduction

1.1 Central Management

1.2 Flexible Storage

1.3 Integrated Backup and Restore

1.4 High Availability Cluster

1.5 Flexible Networking

1.6 Integrated Firewall

1.7 Why Open Source

1.8 Your benefit with Proxmox VE

1.9 Getting Help

1.9.1 Proxmox VE Wiki

1.9.2 Community Support Forum

1.9.3 Mailing Lists

1.9.4 Commercial Support

1.9.5 Bug Tracker

1.10 Project History

1.11 Improving the Proxmox VE Documentation

2 Installing Proxmox VE

2.1 System Requirements

2.1.1 Minimum Requirements, for Evaluation

2.1.2 Recommended System Requirements

2.1.3 Simple Performance Overview

2.1.4 Supported web browsers for accessing the web interface

2.2 Using the Proxmox VE Installation CD-ROM

2.2.1 Advanced LVM Configuration Options

2.2.2 ZFS Performance Tips

2.3 Install Proxmox VE on Debian

2.4 Install from USB Stick

2.4.1 Prepare a USB flash drive as install medium

2.4.2 Instructions for GNU/Linux

2.4.3 Instructions for OSX

2.4.4 Instructions for Windows

2.4.5 Boot your server from USB media

3 Host System Administration

3.1 Package Repositories

3.1.1 Proxmox VE Enterprise Repository

3.1.2 Proxmox VE No-Subscription Repository

3.1.3 Proxmox VE Test Repository

3.1.4 SecureApt

3.2 System Software Updates

3.3 Network Configuration

3.3.1 Naming Conventions

3.3.2 Choosing a network configuration

3.3.3 Default Configuration using a Bridge

3.3.4 Routed Configuration

3.3.5 Masquerading (NAT) with iptables

3.3.6 Linux Bond

3.3.7 VLAN 802.1Q

3.4 Time Synchronization

3.4.1 Using Custom NTP Servers

3.5 External Metric Server

3.5.1 Graphite server configuration

3.5.2 Influxdb plugin configuration

3.6 Disk Health Monitoring

3.7 Logical Volume Manager (LVM)

3.7.1 Hardware

3.7.2 Bootloader

3.7.3 Creating a Volume Group

3.7.4 Creating an extra LV for /var/lib/vz

3.7.5 Resizing the thin pool

3.7.6 Create a LVM-thin pool

3.8 ZFS on Linux

3.8.1 Hardware

3.8.2 Installation as Root File System

3.8.3 Bootloader

3.8.4 ZFS Administration

3.8.5 Activate E-Mail Notification

3.8.6 Limit ZFS Memory Usage

3.9 Certificate Management

3.9.1 Certificates for communication within the cluster

3.9.2 Certificates for API and web GUI

4 Hyper-converged Infrastructure

4.1 Benefits of a Hyper-Converged Infrastructure (HCI) with Proxmox VE

4.2 Manage Ceph Services on Proxmox VE Nodes

4.2.1 Precondition

4.2.2 Installation of Ceph Packages

4.2.3 Creating initial Ceph configuration

4.2.4 Creating Ceph Monitors

4.2.5 Creating Ceph Manager

4.2.6 Creating Ceph OSDs

4.2.7 Creating Ceph Pools

4.2.8 Ceph CRUSH & device classes

4.2.9 Ceph Client

5 Graphical User Interface

5.1 Features

5.2 Login

5.3 GUI Overview

5.3.1 Header

5.3.2 Resource Tree

5.3.3 Log Panel

5.4 Content Panels

5.4.1 Datacenter

5.4.2 Nodes

5.4.3 Guests

5.4.4 Storage

5.4.5 Pools

6 Cluster Manager

6.1 Requirements

6.2 Preparing Nodes

6.3 Create the Cluster

6.3.1 Multiple Clusters In Same Network

6.4 Adding Nodes to the Cluster

6.4.1 Adding Nodes With Separated Cluster Network

6.5 Remove a Cluster Node

6.5.1 Separate A Node Without Reinstalling

6.6 Quorum

6.7 Cluster Network

6.7.1 Network Requirements

6.7.2 Separate Cluster Network

6.7.3 Redundant Ring Protocol

6.7.4 RRP On Cluster Creation

6.7.5 RRP On Existing Clusters

6.8 Corosync Configuration

6.8.1 Edit corosync.conf

6.8.2 Troubleshooting

6.8.3 Corosync Configuration Glossary

6.9 Cluster Cold Start

6.10 Guest Migration

6.10.1 Migration Type

6.10.2 Migration Network

7 Proxmox Cluster File System (pmxcfs)

7.1 POSIX Compatibility

7.2 File Access Rights

7.3 Technology

7.4 File System Layout

7.4.1 Files

7.4.2 Symbolic links

7.4.3 Special status files for debugging (JSON)

7.4.4 Enable/Disable debugging

7.5 Recovery

7.5.1 Remove Cluster configuration

7.5.2 Recovering/Moving Guests from Failed Nodes

8 Proxmox VE Storage

8.1 Storage Types

8.1.1 Thin Provisioning

8.2 Storage Configuration

8.2.1 Storage Pools

8.2.2 Common Storage Properties

8.3 Volumes

8.3.1 Volume Ownership

8.4 Using the Command Line Interface

8.4.1 Examples

8.5 Directory Backend

8.5.1 Configuration

8.5.2 File naming conventions

8.5.3 Storage Features

8.5.4 Examples

8.6 NFS Backend

8.6.1 Configuration

8.6.2 Storage Features

8.6.3 Examples

8.7 CIFS Backend

8.7.1 Configuration

8.7.2 Storage Features

8.7.3 Examples

8.8 GlusterFS Backend

8.8.1 Configuration

8.8.2 File naming conventions

8.8.3 Storage Features

8.9 Local ZFS Pool Backend

8.9.1 Configuration

8.9.2 File naming conventions

8.9.3 Storage Features

8.9.4 Examples

8.10 LVM Backend

8.10.1 Configuration

8.10.2 File naming conventions

8.10.3 Storage Features

8.10.4 Examples

8.11 LVM thin Backend

8.11.1 Configuration

8.11.2 File naming conventions

8.11.3 Storage Features

8.11.4 Examples

8.12 Open-iSCSI initiator

8.12.1 Configuration

8.12.2 File naming conventions

8.12.3 Storage Features

8.12.4 Examples

8.13 User Mode iSCSI Backend

8.13.1 Configuration

8.13.2 Storage Features

8.14 Ceph RADOS Block Devices (RBD)

8.14.1 Configuration

8.14.2 Authentication

8.14.3 Storage Features

8.15 Ceph Filesystem (CephFS)

8.15.1 Configuration

8.15.2 Authentication

8.15.3 Storage Features

9 Storage Replication

9.1 Supported Storage Types

9.2 Schedule Format

9.2.1 Detailed Specification

9.2.2 Examples:

9.3 Error Handling

9.3.1 Possible issues

9.3.2 Migrating a guest in case of Error

9.3.3 Example

9.4 Managing Jobs

9.5 Command Line Interface Examples

10 Qemu/KVM Virtual Machines 112

10.1 Emulated devices and paravirtualized devices ……………………..112

10.2 Virtual Machines Settings ………………………………113

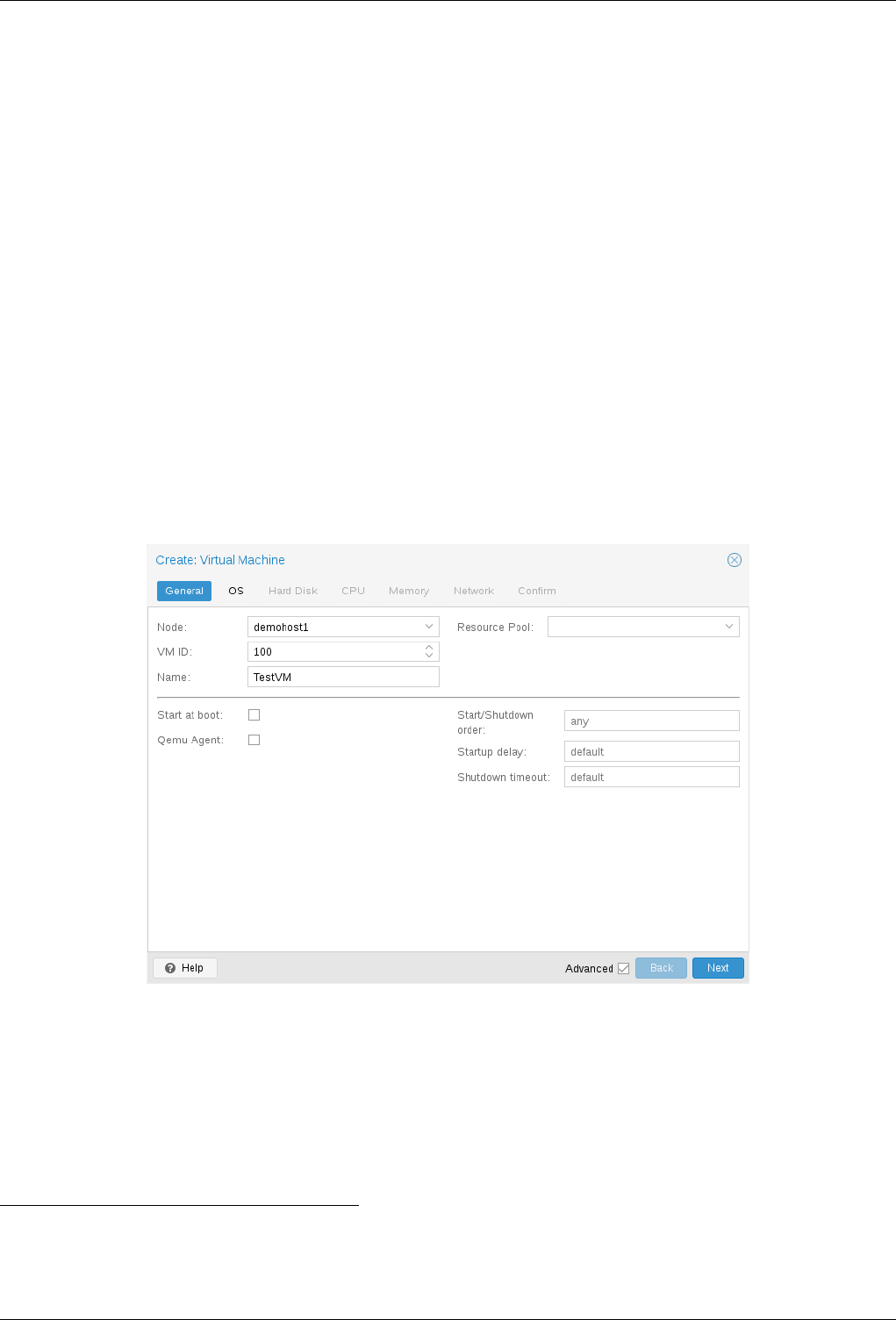

10.2.1 General Settings ……………………………….113

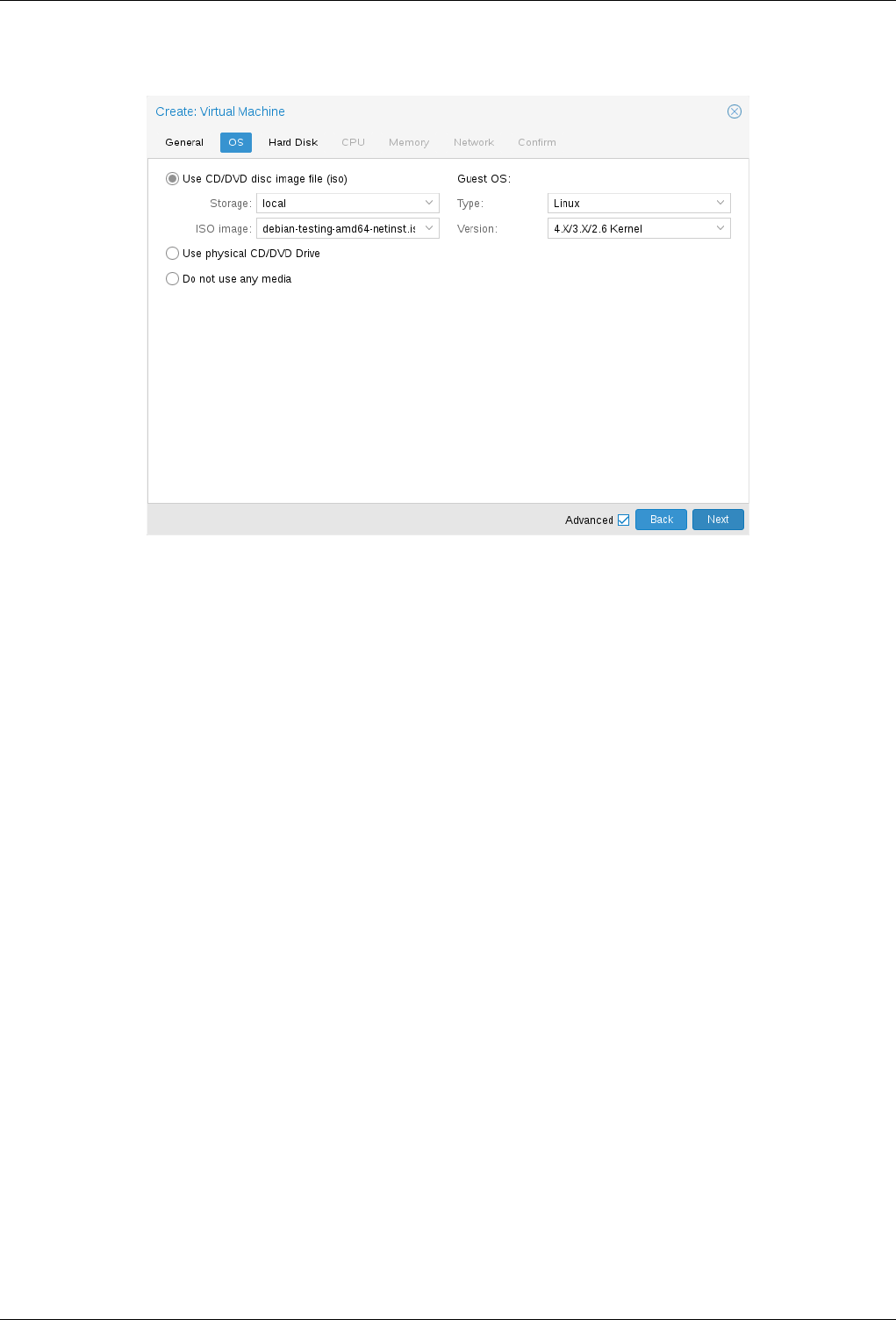

10.2.2 OS Settings ………………………………….114

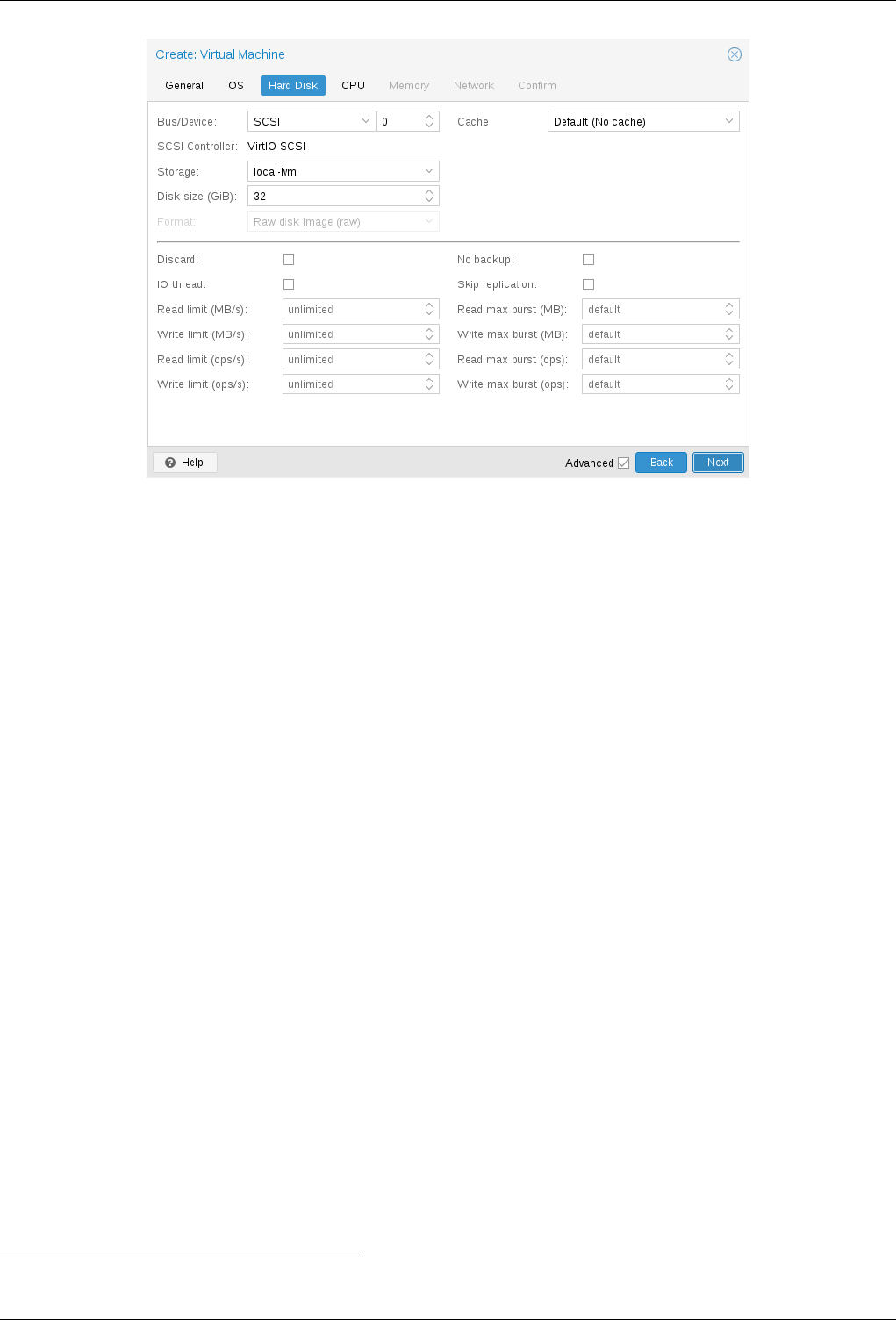

10.2.3 Hard Disk …………………………………..114

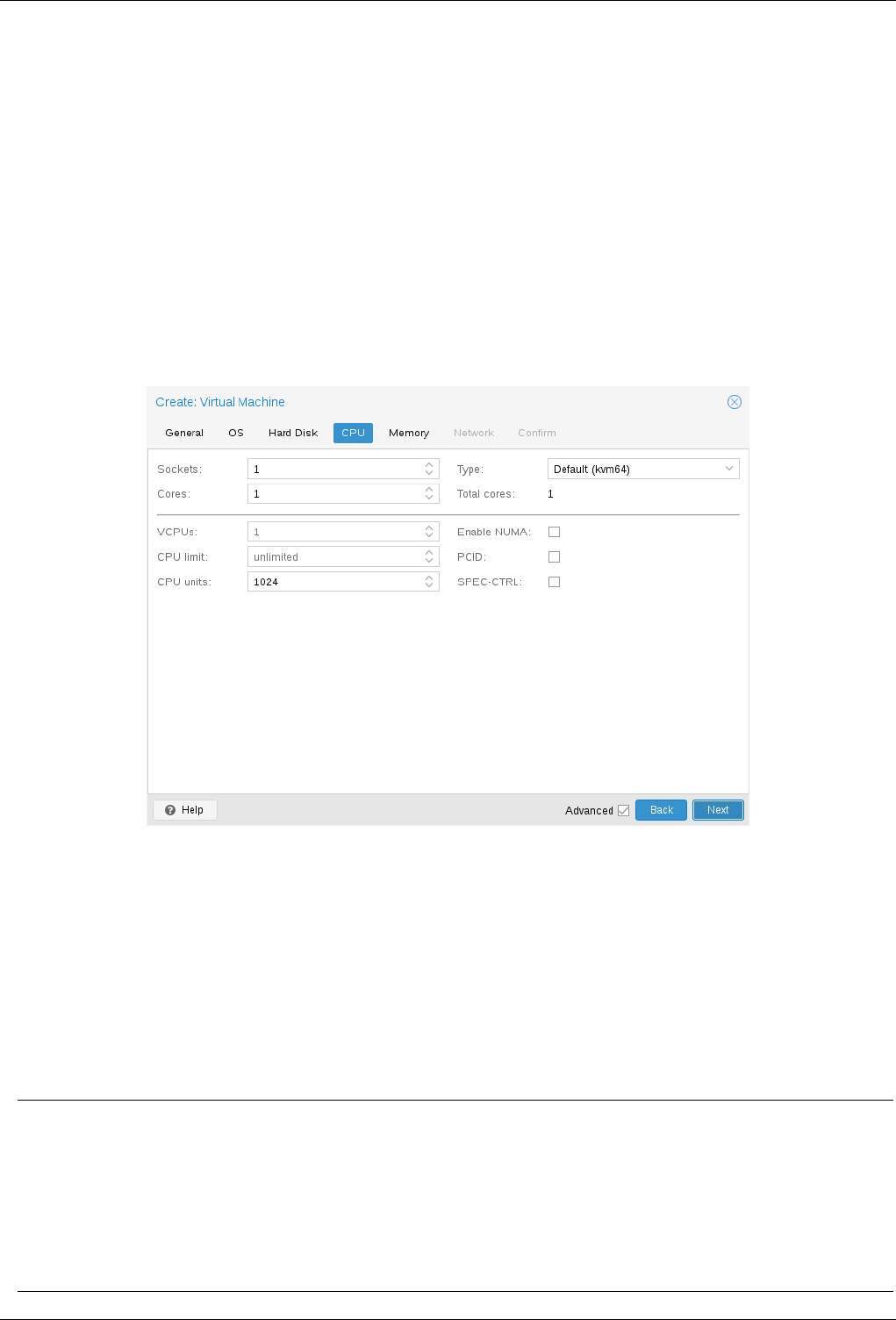

10.2.4 CPU ……………………………………..116

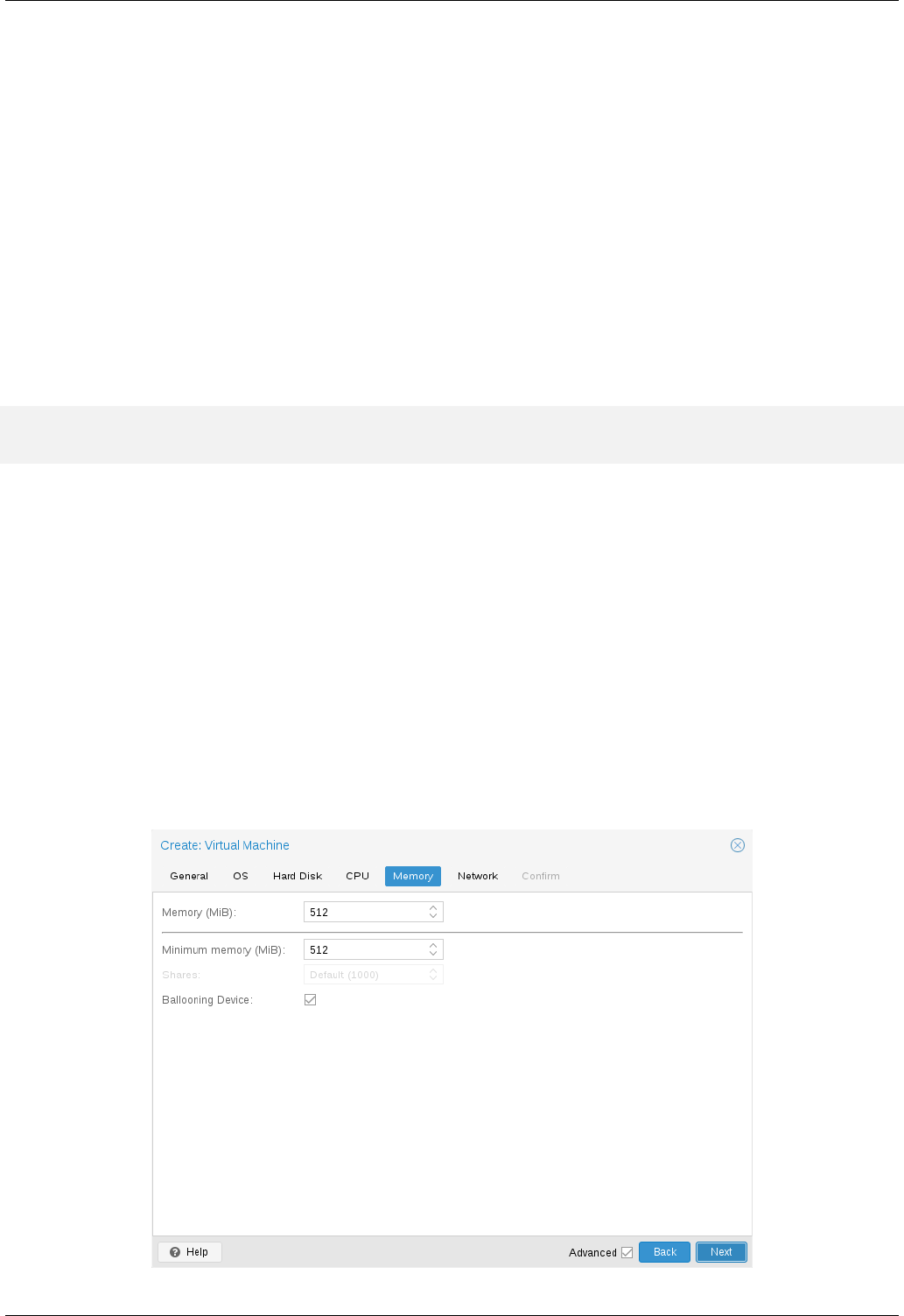

10.2.5 Memory ……………………………………119

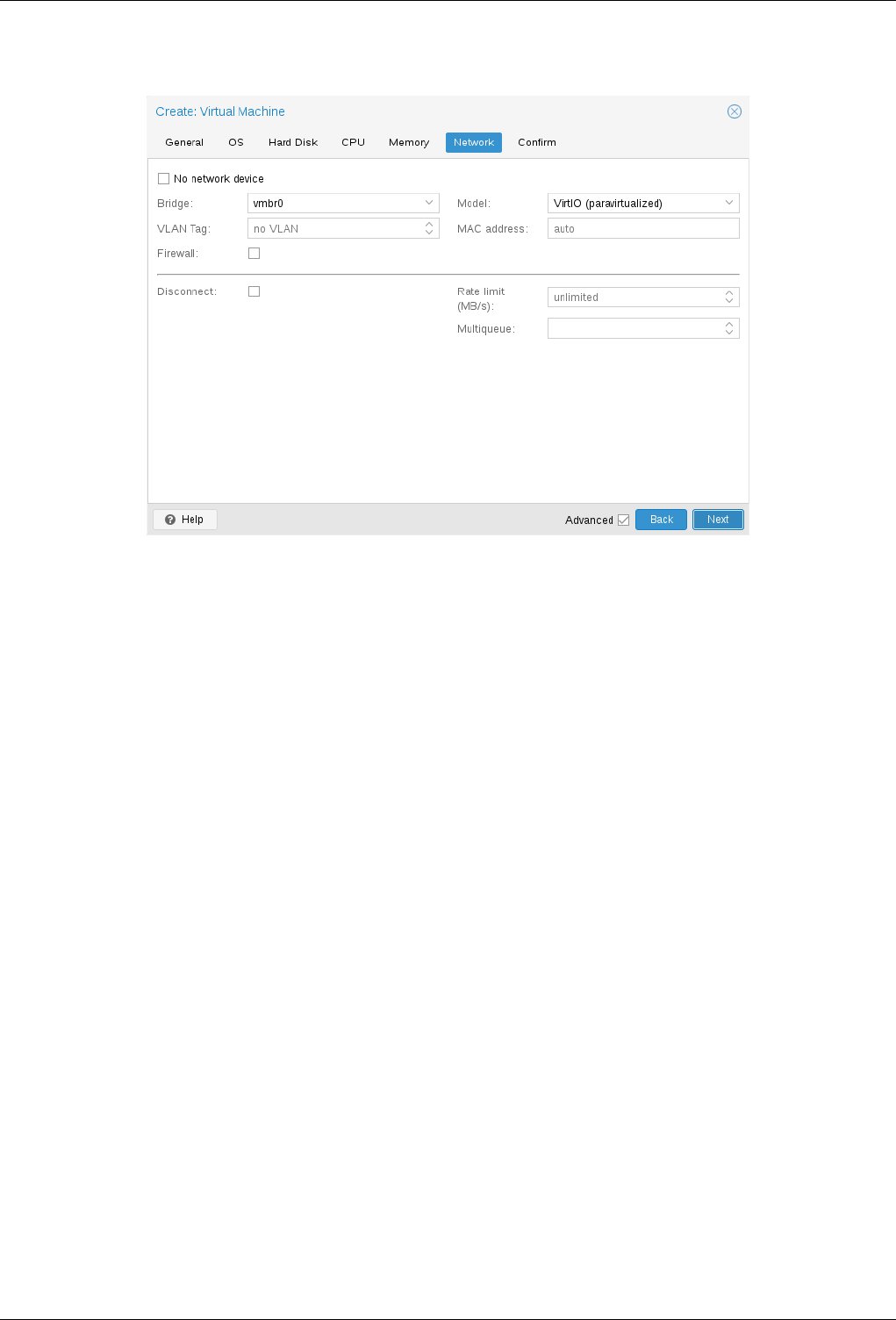

10.2.6 Network Device ………………………………..121

10.2.7 USB Passthrough ……………………………….122

10.2.8 BIOS and UEFI ………………………………..123

10.2.9 Automatic Start and Shutdown of Virtual Machines ……………….123

10.3 Migration ………………………………………124

10.3.1 Online Migration ……………………………….124

10.3.2 Offline Migration ……………………………….125

10.4 Copies and Clones ………………………………….125

10.5 Virtual Machine Templates ………………………………126

10.6 Importing Virtual Machines and disk images ………………………127

10.6.1 Step-by-step example of a Windows OVF import ………..……….127

10.6.2 Adding an external disk image to a Virtual Machine ……………….128

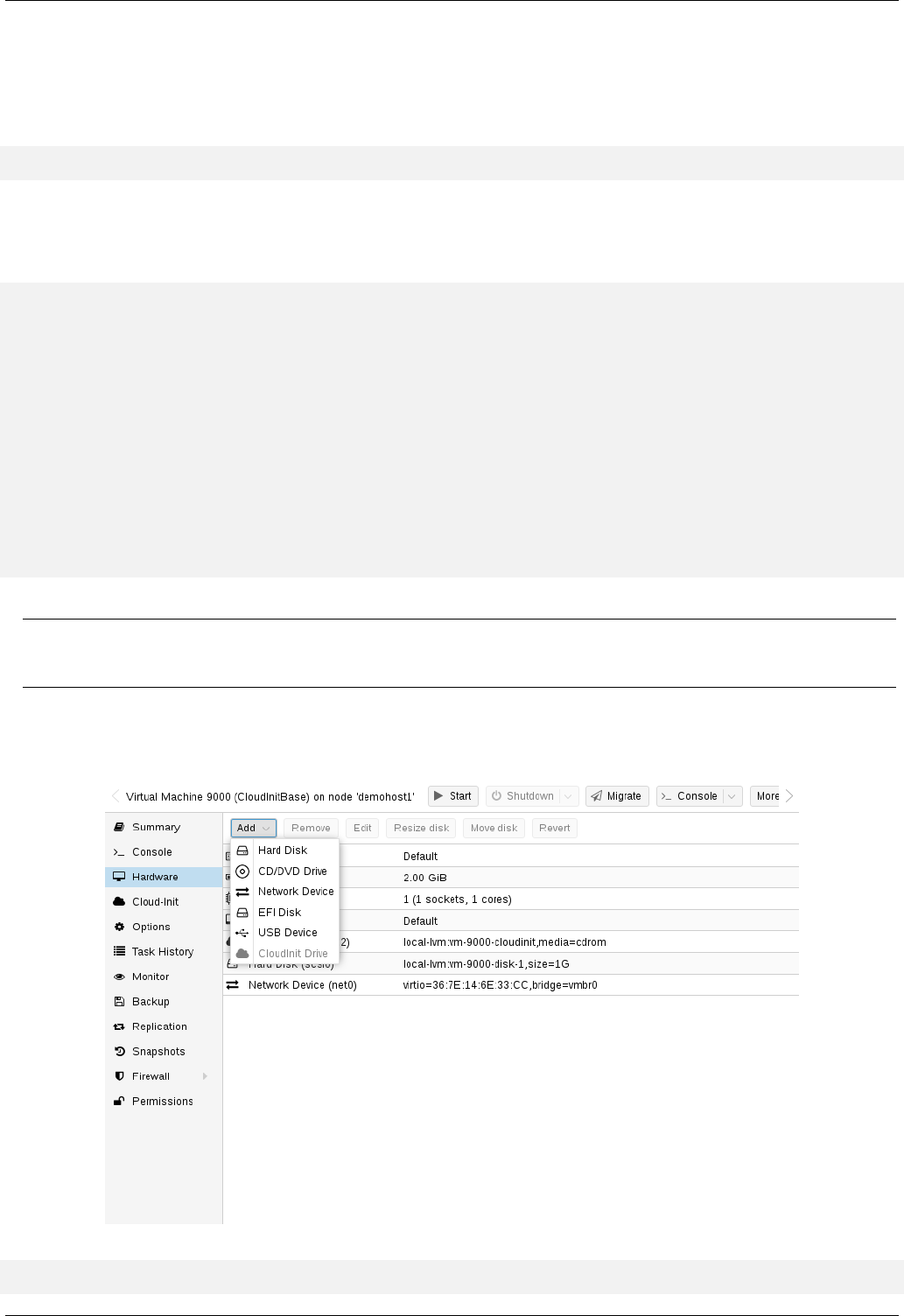

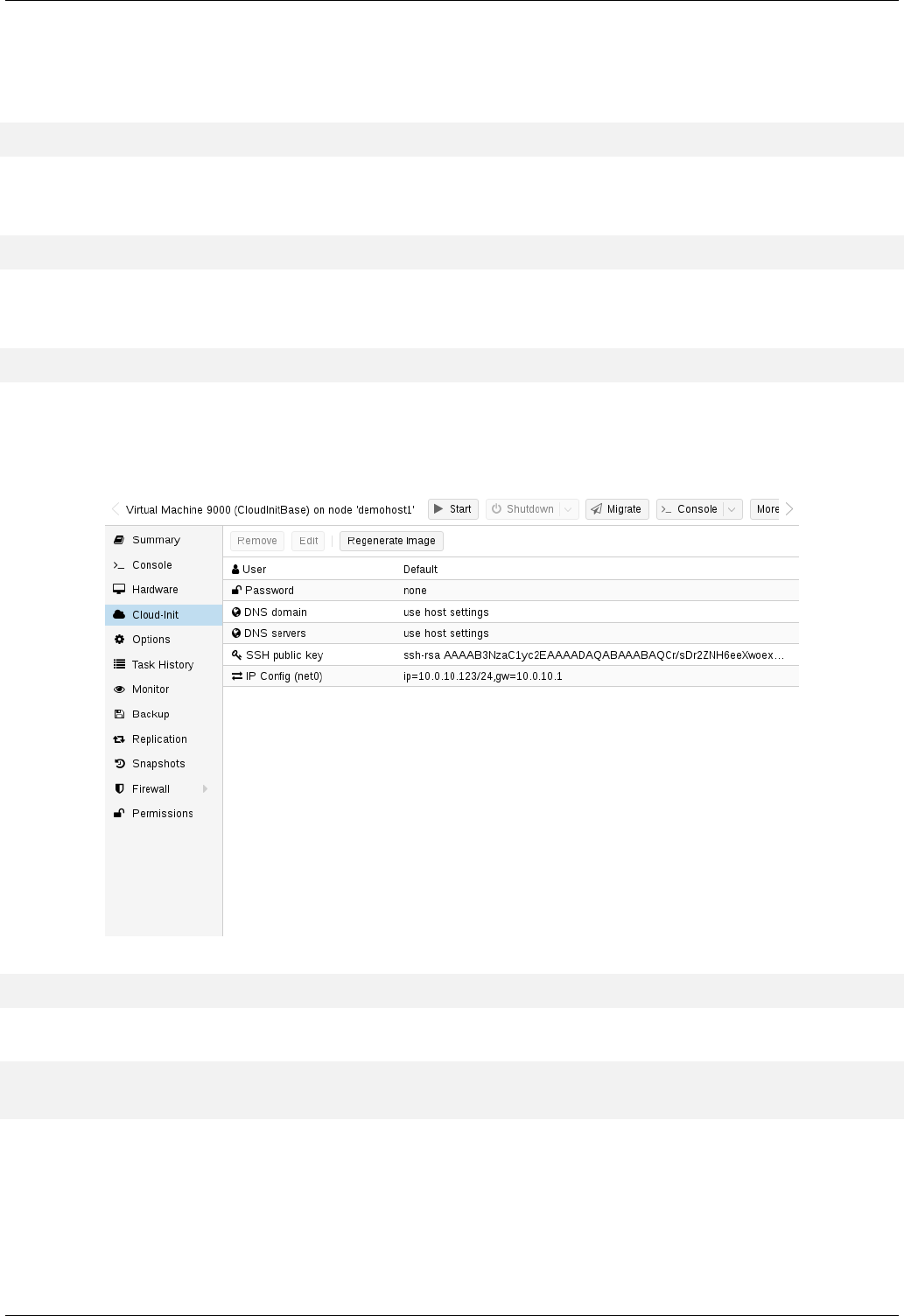

10.7 Cloud-Init Support ………………………………….128

10.7.1 Preparing Cloud-Init Templates …………………………129

10.7.2 Deploying Cloud-Init Templates …………………………130

10.7.3 Cloud-Init specific Options …………………………..131

10.8 Managing Virtual Machines with qm ………………………….132

10.8.1 CLI Usage Examples ……………………………..132

10.9 Configuration …………………………………….132

10.9.1 File Format ………………………………….133

10.9.2 Snapshots ………………………………….133

10.9.3 Options ……………………………………134

10.10Locks ………….…………………………….154

Proxmox VE Administration Guide x

11 Proxmox Container Toolkit 155

11.1 Technology Overview …………………………………155

11.2 Security Considerations ……………………………….156

11.3 Guest Operating System Configuration ………………………..156

11.4 Container Images ………………………………….158

11.5 Container Storage ………………………………….159

11.5.1 FUSE Mounts …………………………………159

11.5.2 Using Quotas Inside Containers ………………………..159

11.5.3 Using ACLs Inside Containers …………………………160

11.5.4 Backup of Containers mount points ………………………160

11.5.5 Replication of Containers mount points …………………….160

11.6 Container Settings ………………………………….160

11.6.1 General Settings ……………………………….160

11.6.2 CPU ……………………………………..162

11.6.3 Memory ……………………………………163

11.6.4 Mount Points …………………………………163

11.6.5 Network ……………………………………166

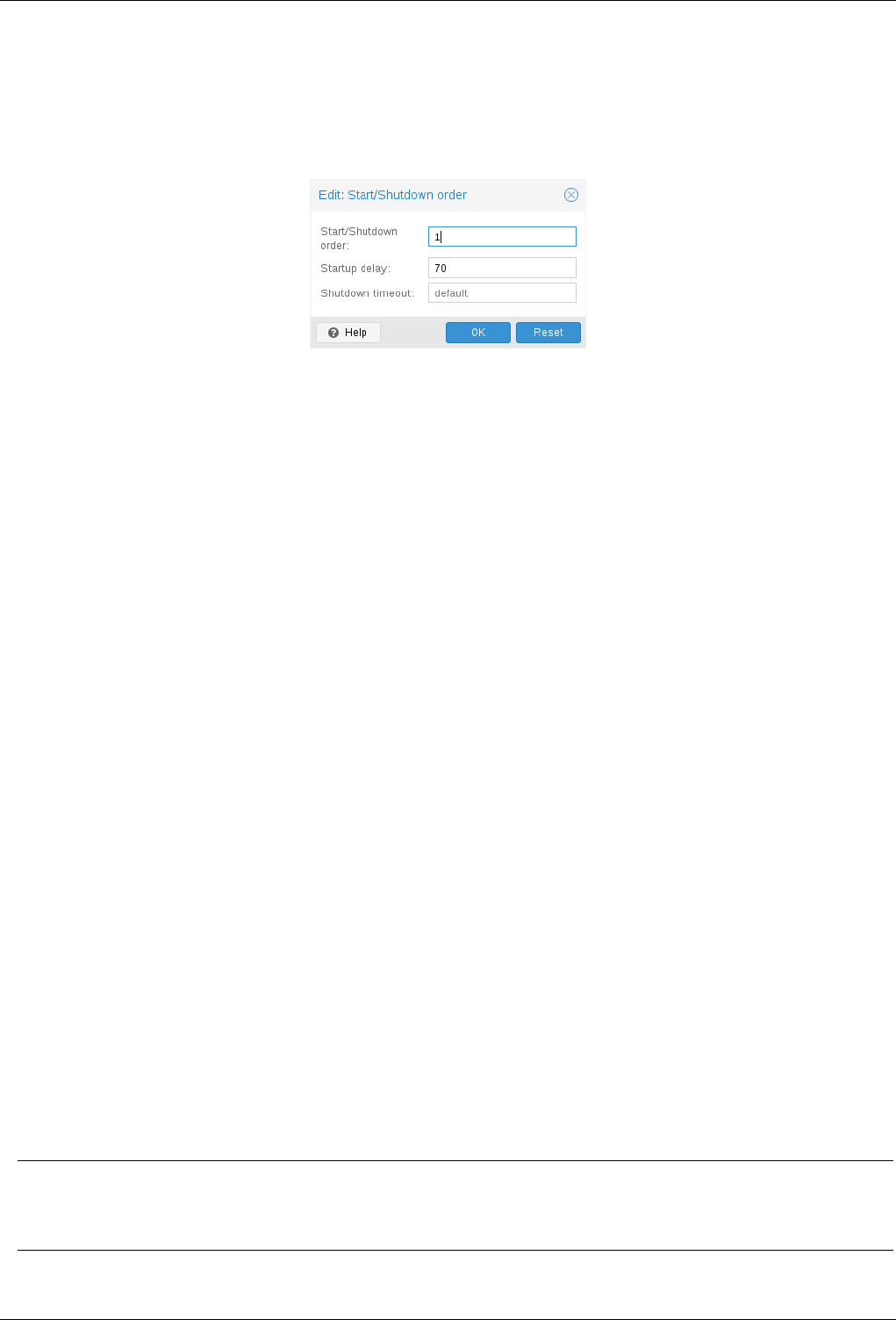

11.6.6 Automatic Start and Shutdown of Containers . . . . . . . . . . . . . . . . . . . . . . 167

11.7 Backup and Restore …………………………………168

11.7.1 Container Backup ……………………………….168

11.7.2 Restoring Container Backups ………………………….168

11.8 Managing Containers with pct ……………………………169

11.8.1 CLI Usage Examples ……………………………..170

11.8.2 Obtaining Debugging Logs …………………………..170

11.9 Migration ………………………………………171

11.10Configuration ……………………………..……..171

11.10.1File Format ………………………………….171

11.10.2Snapshots ………………………………….172

11.10.3Options ……………………………………172

11.11Locks ………….…………………………….177

12 Proxmox VE Firewall

12.1 Zones

12.2 Configuration Files

12.2.1 Cluster Wide Setup

12.2.2 Host Specific Configuration

12.2.3 VM/Container Configuration

12.3 Firewall Rules

12.4 Security Groups

12.5 IP Aliases

12.5.1 Standard IP Alias local_network

12.6 IP Sets

12.6.1 Standard IP set management

12.6.2 Standard IP set blacklist

12.6.3 Standard IP set ipfilter-net*

12.7 Services and Commands

12.8 Tips and Tricks

12.8.1 How to allow FTP

12.8.2 Suricata IPS integration

12.9 Notes on IPv6

12.10 Ports used by Proxmox VE

13 User Management 188

13.1 Users ………………………………………..188

13.1.1 System administrator ……………………………..188

13.1.2 Groups ……………………………………189

13.2 Authentication Realms ………………………………..189

13.3 Two factor authentication ……………………………….190

13.4 Permission Management ……………………………….190

13.4.1 Roles …………………………………….191

13.4.2 Privileges …………………………………..191

13.4.3 Objects and Paths ………………………………192

13.4.4 Pools …………………………………….193

13.4.5 What permission do I need? ………………………….193

13.5 Command Line Tool …………………………………194

13.6 Real World Examples ………………………………..195

13.6.1 Administrator Group ……………………………..195

13.6.2 Auditors ……………………………………195

13.6.3 Delegate User Management ………………………….196

13.6.4 Pools …………………………………….196

Proxmox VE Administration Guide xii

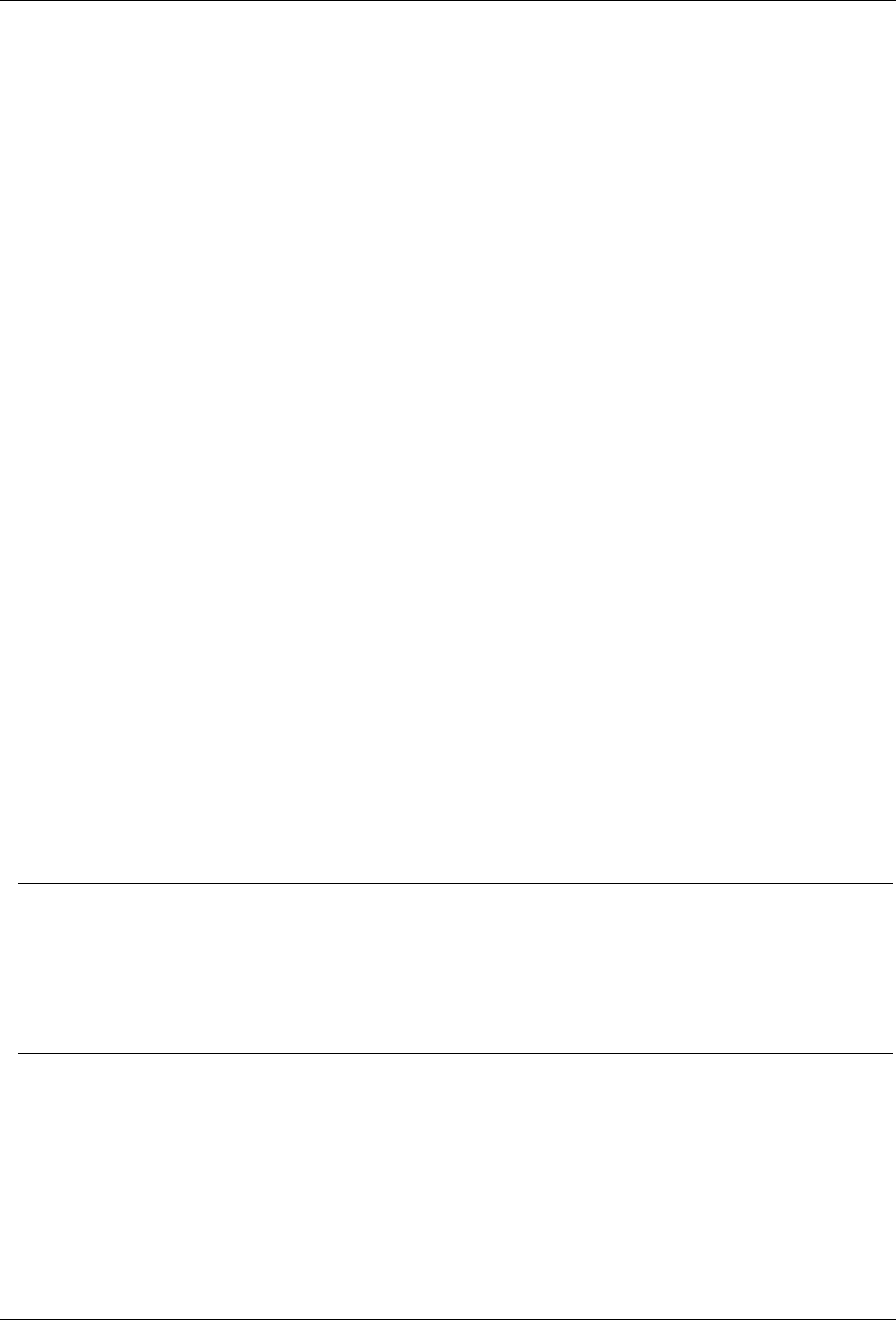

14 High Availability 197

14.1 Requirements ……………………………………198

14.2 Resources ……………………………………..199

14.3 Management Tasks ………………………………….199

14.4 How It Works …………………………………….200

14.4.1 Service States ………………………………..201

14.4.2 Local Resource Manager ……………………………202

14.4.3 Cluster Resource Manager …………………………..203

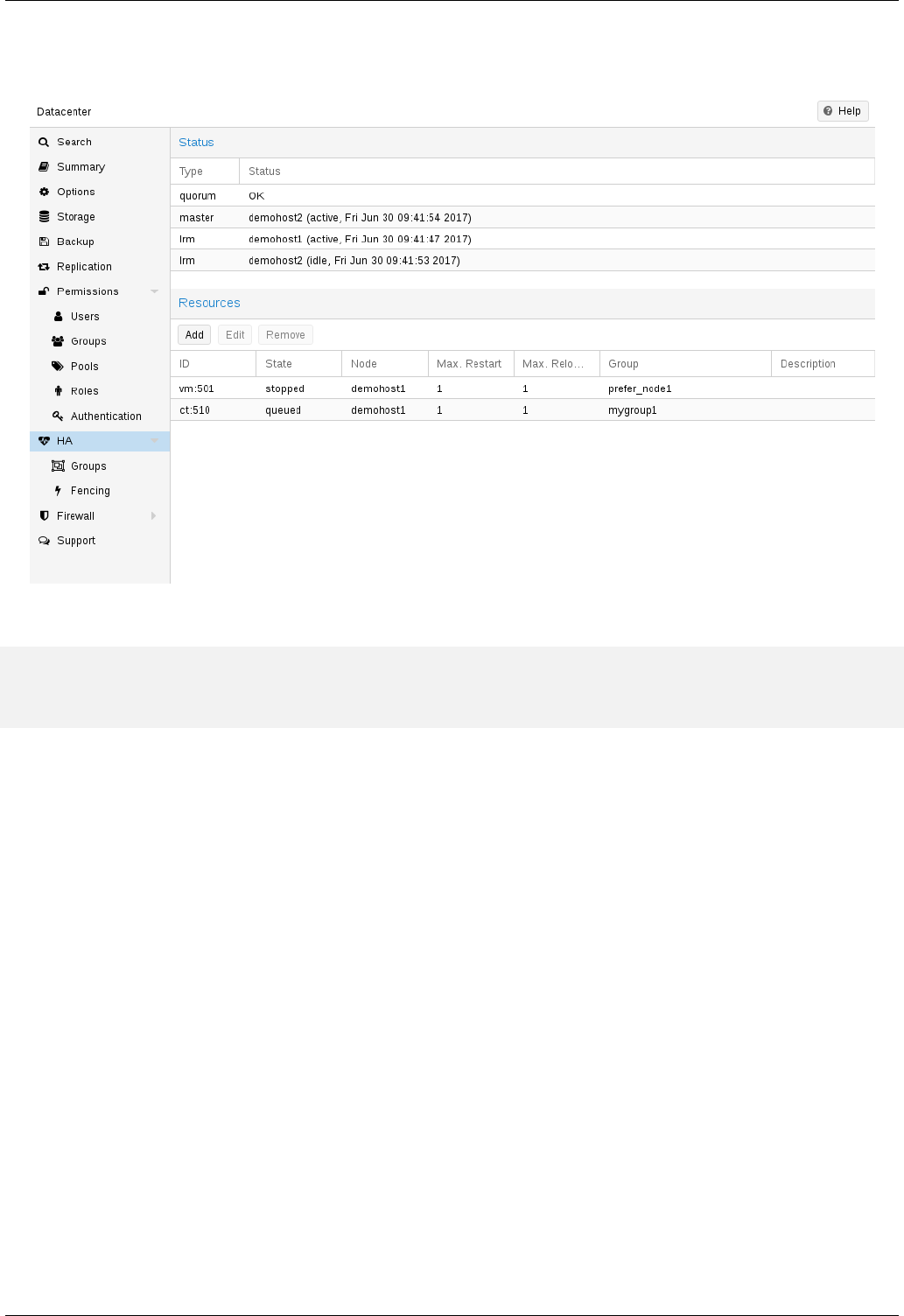

14.5 Configuration …………………………………….203

14.5.1 Resources ………………………………….204

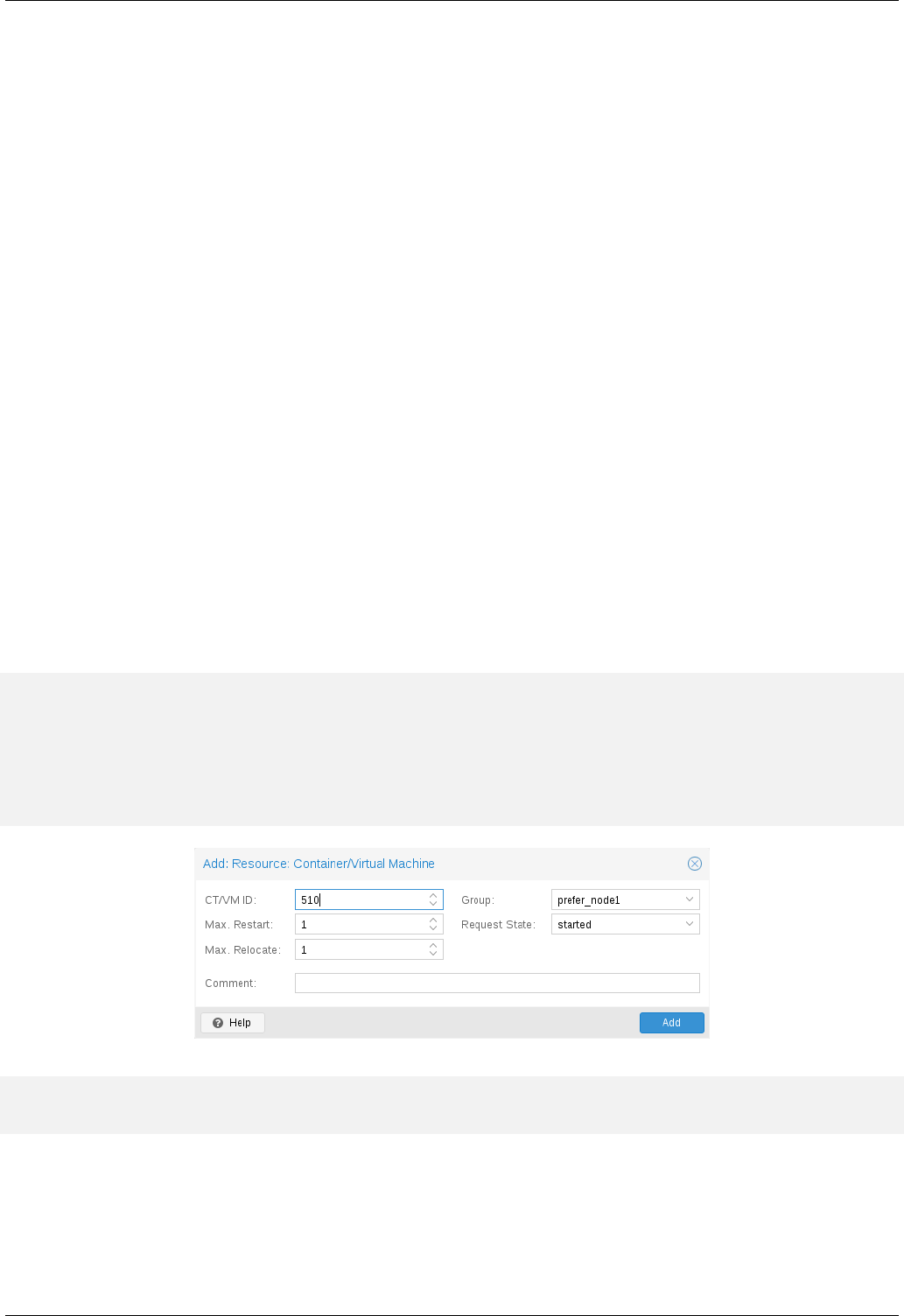

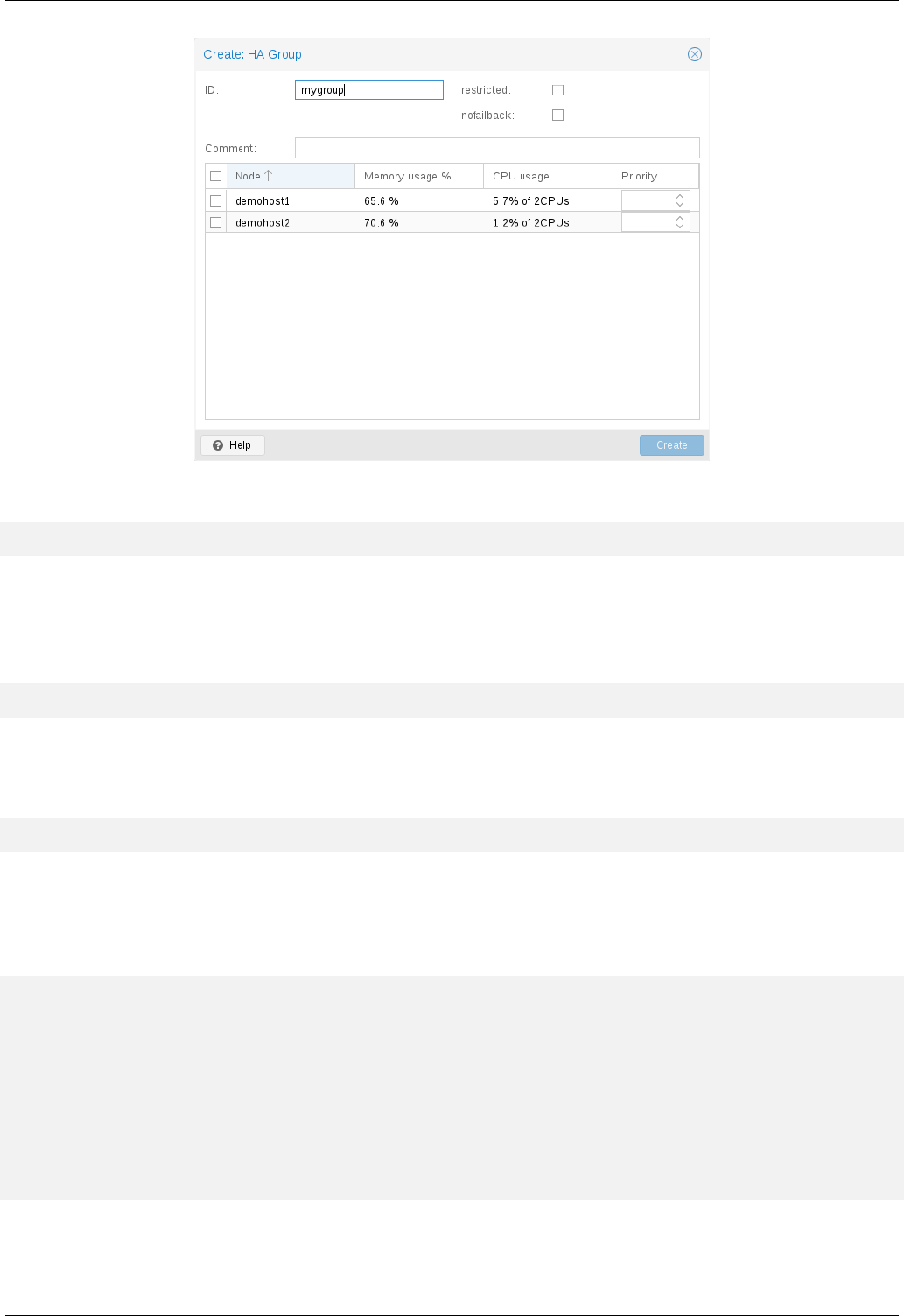

14.5.2 Groups ……………………………………206

14.6 Fencing ……………………………………….208

14.6.1 How Proxmox VE Fences ……………………………208

14.6.2 Configure Hardware Watchdog ……………………..….209

14.6.3 Recover Fenced Services …………………………..209

14.7 Start Failure Policy ………………………………….209

14.8 Error Recovery ……………………………………210

14.9 Package Updates …………………………………..210

14.10Node Maintenance .…………………………………210

14.10.1Shutdown ………………………..…………210

14.10.2Reboot ……………………………………211

14.10.3Manual Resource Movement ………………………….211

15 Backup and Restore

15.1 Backup modes

15.2 Backup File Names

15.3 Restore

15.3.1 Bandwidth Limit

15.4 Configuration

15.5 Hook Scripts

15.6 File Exclusions

15.7 Examples

16 Important Service Daemons

16.1 pvedaemon - Proxmox VE API Daemon

16.2 pveproxy - Proxmox VE API Proxy Daemon

16.2.1 Host based Access Control

16.2.2 SSL Cipher Suite

16.2.3 Diffie-Hellman Parameters

16.2.4 Alternative HTTPS certificate

16.3 pvestatd - Proxmox VE Status Daemon

16.4 spiceproxy - SPICE Proxy Service

16.4.1 Host based Access Control

17 Useful Command Line Tools

17.1 pvesubscription - Subscription Management

17.2 pveperf - Proxmox VE Benchmark Script

17.3 Shell interface for the Proxmox VE API

17.3.1 EXAMPLES

17.4 Proxmox Node Management

17.4.1 EXAMPLES

18 Frequently Asked Questions

19 Bibliography

19.1 Books about Proxmox VE

19.2 Books about related technology

19.3 Books about related topics

A Command Line Interface

A.1 Output format options [FORMAT_OPTIONS]

A.2 pvesm - Proxmox VE Storage Manager

A.3 pvesubscription - Proxmox VE Subscription Manager

A.4 pveperf - Proxmox VE Benchmark Script

A.5 pveceph - Manage CEPH Services on Proxmox VE Nodes

A.6 pvenode - Proxmox VE Node Management

A.7 pvesh - Shell interface for the Proxmox VE API

A.8 qm - Qemu/KVM Virtual Machine Manager

A.9 qmrestore - Restore QemuServer vzdump Backups

A.10 pct - Proxmox Container Toolkit

A.11 pveam - Proxmox VE Appliance Manager

A.12 pvecm - Proxmox VE Cluster Manager

A.13 pvesr - Proxmox VE Storage Replication

A.14 pveum - Proxmox VE User Manager

A.15 vzdump - Backup Utility for VMs and Containers

A.16 ha-manager - Proxmox VE HA Manager

B Service Daemons

B.1 pve-firewall - Proxmox VE Firewall Daemon

B.2 pvedaemon - Proxmox VE API Daemon

B.3 pveproxy - Proxmox VE API Proxy Daemon

B.4 pvestatd - Proxmox VE Status Daemon

B.5 spiceproxy - SPICE Proxy Service

B.6 pmxcfs - Proxmox Cluster File System

B.7 pve-ha-crm - Cluster Resource Manager Daemon

B.8 pve-ha-lrm - Local Resource Manager Daemon

C Configuration Files

C.1 Datacenter Configuration

C.1.1 File Format

C.1.2 Options

D Firewall Macro Definitions

E GNU Free Documentation License


Chapter 1

Introduction

Proxmox VE is a platform to run virtual machines and containers. It is based on Debian Linux, and completely open source. For maximum flexibility, we implemented two virtualization technologies: Kernel-based Virtual Machine (KVM) and container-based virtualization (LXC).

One main design goal was to make administration as easy as possible. You can use Proxmox VE on a single node, or assemble a cluster of many nodes. All management tasks can be done using our web-based management interface, and even a novice user can set up and install Proxmox VE within minutes.

[Figure: Proxmox VE architecture. User tools (qm, pvesm, pveum, ha-manager, pct, pvecm, pveceph, pve-firewall) and services (pveproxy, pvedaemon, pvestatd, pve-ha-lrm, pve-cluster) sit on top of the Linux kernel, which runs KVM/Qemu virtual machines (each with a guest OS and apps) and containers (apps).]


1.1 Central Management

While many people start with a single node, Proxmox VE can scale out to a large set of clustered nodes. The cluster stack is fully integrated and ships with the default installation.

Unique Multi-Master Design

The integrated web-based management interface gives you a clean overview of all your KVM guests and Linux containers and even of your whole cluster. You can easily manage your VMs and containers, storage or cluster from the GUI. There is no need to install a separate, complex, and pricey management server.

Proxmox Cluster File System (pmxcfs)

Proxmox VE uses the unique Proxmox Cluster file system (pmxcfs), a database-driven file system for storing configuration files. This enables you to store the configuration of thousands of virtual machines. By using corosync, these files are replicated in real time on all cluster nodes. The file system stores all data inside a persistent database on disk; nonetheless, a copy of the data resides in RAM, which limits the maximum storage size to 30MB, more than enough for thousands of VMs.

Proxmox VE is the only virtualization platform using this unique cluster file system.

Web-based Management Interface

Proxmox VE is simple to use. Management tasks can be done via the included web-based management interface; there is no need to install a separate management tool or any additional management node with huge databases. The multi-master tool allows you to manage your whole cluster from any node of your cluster. The central web-based management, based on the JavaScript Framework (ExtJS), empowers you to control all functionalities from the GUI and to review the history and syslogs of each single node. This includes running backup or restore jobs, live-migration or HA triggered activities.

Command Line

For advanced users who are used to the comfort of the Unix shell or Windows Powershell, Proxmox VE provides a command line interface to manage all the components of your virtual environment. This command line interface has intelligent tab completion and full documentation in the form of UNIX man pages.

REST API

Proxmox VE uses a RESTful API. We chose JSON as the primary data format, and the whole API is formally defined using JSON Schema. This enables fast and easy integration for third-party management tools like custom hosting environments.

Role-based Administration

You can define granular access for all objects (like VMs, storages, nodes, etc.) by using the role-based user and permission management. This allows you to define privileges and helps you to control access to objects. This concept is also known as access control lists: each permission specifies a subject (a user or group) and a role (set of privileges) on a specific path.

Authentication Realms

Proxmox VE supports multiple authentication sources like Microsoft Active Directory, LDAP, Linux PAM standard authentication or the built-in Proxmox VE authentication server.
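To make the REST API item above more concrete, here is a minimal sketch of how an API path maps to a URL. It assumes the default HTTPS port 8006 and the /api2/json prefix; the helper name pve_api_url and the host name pve.example.com are hypothetical, and authentication is left out entirely.

```shell
# pve_api_url HOST PATH
# Print the JSON API URL for an API path such as "nodes" or "/nodes".
# Hypothetical helper; assumes the default port 8006 and the /api2/json prefix.
pve_api_url() {
    # ${2#/} strips one leading slash so both "nodes" and "/nodes" work.
    echo "https://$1:8006/api2/json/${2#/}"
}

# Example (not executed here): an authenticated client would request
#   "$(pve_api_url pve.example.com /nodes)"
# while on the node itself the same path can be read with: pvesh get /nodes
```

Building the URL in one place keeps scripts readable when several API paths are queried in a row.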


1.2 Flexible Storage

The Proxmox VE storage model is very flexible. Virtual machine images can either be stored on one or several local storages or on shared storage like NFS and on SAN. There are no limits; you may configure as many storage definitions as you like. You can use all storage technologies available for Debian Linux.

One major benefit of storing VMs on shared storage is the ability to live-migrate running machines without any downtime, as all nodes in the cluster have direct access to VM disk images.

We currently support the following Network storage types:

• LVM Group (network backing with iSCSI targets)

• iSCSI target

• NFS Share

• CIFS Share

• Ceph RBD

• Directly use iSCSI LUNs

• GlusterFS

Local storage types supported are:

• LVM Group (local backing devices like block devices, FC devices, DRBD, etc.)

• Directory (storage on existing filesystem)

• ZFS

1.3 Integrated Backup and Restore

The integrated backup tool (vzdump) creates consistent snapshots of running Containers and KVM guests. It basically creates an archive of the VM or CT data which includes the VM/CT configuration files.

KVM live backup works for all storage types including VM images on NFS, CIFS, iSCSI LUN, Ceph RBD or Sheepdog. The new backup format is optimized for storing VM backups quickly and efficiently (sparse files, out of order data, minimized I/O).
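As an illustration of how a backup might be requested from the command line, the sketch below assembles a vzdump command for a guest ID, a backup mode and a target storage, and prints it instead of running it. The wrapper name vzdump_cmd, the guest ID 100 and the storage name local are assumptions for the example.

```shell
# vzdump_cmd VMID MODE STORAGE
# Print (do not run) a vzdump command line for the given guest.
# Hypothetical dry-run wrapper; MODE must be snapshot, suspend or stop.
vzdump_cmd() {
    case "$2" in
        snapshot|suspend|stop) ;;
        *) echo "unknown backup mode: $2" >&2; return 1 ;;
    esac
    echo "vzdump $1 --mode $2 --storage $3"
}
```

Printing the command first makes it easy to review before running it on a real node, for example with `vzdump_cmd 100 snapshot local`.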

1.4 High Availability Cluster

A multi-node Proxmox VE HA Cluster enables the definition of highly available virtual servers. The Proxmox VE HA Cluster is based on proven Linux HA technologies, providing stable and reliable HA services.


1.5 Flexible Networking

Proxmox VE uses a bridged networking model. All VMs can share one bridge as if virtual network cables from each guest were all plugged into the same switch. For connecting VMs to the outside world, bridges are attached to physical network cards assigned a TCP/IP configuration.

For further flexibility, VLANs (IEEE 802.1q) and network bonding/aggregation are possible. In this way it is possible to build complex, flexible virtual networks for the Proxmox VE hosts, leveraging the full power of the Linux network stack.

1.6 Integrated Firewall

The integrated firewall allows you to filter network packets on any VM or Container interface. Common sets of firewall rules can be grouped into “security groups”.

1.7 Why Open Source

Proxmox VE uses a Linux kernel and is based on the Debian GNU/Linux Distribution. The source code of Proxmox VE is released under the GNU Affero General Public License, version 3. This means that you are free to inspect the source code at any time or contribute to the project yourself.

At Proxmox we are committed to using open source software whenever possible. Using open source software guarantees full access to all functionalities, as well as high security and reliability. We think that everybody should have the right to access the source code of a software to run it, build on it, or submit changes back to the project. Everybody is encouraged to contribute while Proxmox ensures the product always meets professional quality criteria.

Open source software also helps to keep your costs low and makes your core infrastructure independent from a single vendor.

1.8 Your benefit with Proxmox VE

• Open source software

• No vendor lock-in

• Linux kernel

• Fast installation and easy-to-use

• Web-based management interface

• REST API

• Huge active community

• Low administration costs and simple deployment


1.9 Getting Help

1.9.1 Proxmox VE Wiki

The primary source of information is the Proxmox VE Wiki. It combines the reference documentation with user contributed content.

1.9.2 Community Support Forum

Proxmox VE itself is fully open source, so we always encourage our users to discuss and share their knowledge using the Proxmox VE Community Forum. The forum is fully moderated by the Proxmox support team, and has a quite large user base around the whole world. Needless to say, such a large forum is a great place to get information.

1.9.3 Mailing Lists

This is a fast way to communicate via email with the Proxmox VE community.

• Mailing list for users: PVE User List

The primary communication channel for developers is:

• Mailing list for developers: PVE development discussion

1.9.4 Commercial Support

Proxmox Server Solutions GmbH also offers commercial Proxmox VE Subscription Service Plans. System Administrators with a standard subscription plan can access a dedicated support portal with guaranteed response time, where Proxmox VE developers help them should an issue appear. Please contact the Proxmox sales team for more information or volume discounts.

1.9.5 Bug Tracker

We also run a public bug tracker at https://bugzilla.proxmox.com. If you ever detect an issue, you can file a bug report there. This makes it easy to track its status, and you will get notified as soon as the problem is fixed.

1.10 Project History

The project started in 2007, followed by a first stable version in 2008. At the time we used OpenVZ for containers, and KVM for virtual machines. The clustering features were limited, and the user interface was simple (server generated web page).

But we quickly developed new features using the Corosync cluster stack, and the introduction of the new Proxmox cluster file system (pmxcfs) was a big step forward, because it completely hides the cluster complexity from the user. Managing a cluster of 16 nodes is as simple as managing a single node.


We also introduced a new REST API, with a complete declarative specification written in JSON-Schema. This enabled other people to integrate Proxmox VE into their infrastructure, and made it easy to provide additional services.

Also, the new REST API made it possible to replace the original user interface with a modern HTML5 application using JavaScript. We also replaced the old Java based VNC console code with noVNC. So you only need a web browser to manage your VMs.

The support for various storage types is another big task. Notably, Proxmox VE was the first distribution to ship ZFS on Linux by default in 2014. Another milestone was the ability to run and manage Ceph storage on the hypervisor nodes. Such setups are extremely cost effective.

When we started we were among the first companies providing commercial support for KVM. The KVM project itself continuously evolved, and is now a widely used hypervisor. New features arrive with each release. We developed the KVM live backup feature, which makes it possible to create snapshot backups on any storage type.

The most notable change with version 4.0 was the move from OpenVZ to LXC. Containers are now deeply integrated, and they can use the same storage and network features as virtual machines.

1.11 Improving the Proxmox VE Documentation

Depending on which issue you want to improve, you can use a variety of communication mediums to reach the developers.

If you notice an error in the current documentation, use the Proxmox bug tracker and propose an alternate text/wording.

If you want to propose new content, it depends on what you want to document:

• If the content is specific to your setup, a wiki article is the best option. For instance, if you want to document specific options for guest systems, like which combination of Qemu drivers work best with a less popular OS, this is a perfect fit for a wiki article.

• If you think the content is generic enough to be of interest for all users, then you should try to get it into the reference documentation. The reference documentation is written in the easy to use asciidoc document format. Editing the official documentation requires cloning the git repository at git://git.proxmox.com/git/pve-docs.git and then following the README.adoc document.

Improving the documentation is just as easy as editing a Wikipedia article and is an interesting foray into the development of a large open source project.

Note

If you are interested in working on the Proxmox VE codebase, the Developer Documentation wiki article will show you where to start.


Chapter 2

Installing Proxmox VE

Proxmox VE is based on Debian and comes with an installation CD-ROM which includes a complete Debian system ("stretch" for version 5.x) as well as all necessary Proxmox VE packages.

The installer just asks you a few questions, then partitions the local disk(s), installs all required packages, and configures the system including a basic network setup. You can get a fully functional system within a few minutes. This is the preferred and recommended installation method.

Alternatively, Proxmox VE can be installed on top of an existing Debian system. This option is only

recommended for advanced users, since detailed knowledge of Proxmox VE is necessary.

2.1 System Requirements

For production servers, high-quality server equipment is needed. Keep in mind that if you run 10 virtual servers

on one machine and then experience a hardware failure, 10 services are lost. Proxmox VE supports

clustering, which means that multiple Proxmox VE installations can be centrally managed thanks to the included

cluster functionality.

Proxmox VE can use local storage (DAS), SAN, NAS and also distributed storage (Ceph RBD). For details,

see the storage chapter (Chapter 8).

2.1.1 Minimum Requirements, for Evaluation

• CPU: 64bit (Intel EM64T or AMD64)

• Intel VT/AMD-V capable CPU/Mainboard for KVM Full Virtualization support

• RAM: 1 GB RAM, plus additional RAM used for guests

• Hard drive

• One NIC
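
The CPU requirements above can be checked from any Linux system booted on the target hardware. The following is a minimal sketch, assuming an x86 machine where /proc/cpuinfo lists the usual flag names (lm for 64-bit long mode, vmx for Intel VT, svm for AMD-V). The function name check_cpu_flags is hypothetical and not part of Proxmox VE.

```shell
#!/bin/sh
# check_cpu_flags [FILE]
# Hypothetical helper: reads CPU flags from FILE (default /proc/cpuinfo)
# and reports whether 64-bit and hardware virtualization flags are present.
check_cpu_flags() {
    cpuinfo="${1:-/proc/cpuinfo}"
    # The first "flags" line is enough; all cores normally report the same set.
    flags=$(grep -m1 '^flags' "$cpuinfo")
    missing=""
    # -w matches whole words only, so "lahf_lm" does not count as "lm".
    echo "$flags" | grep -qw 'lm' || missing="$missing 64bit"
    echo "$flags" | grep -qwE 'vmx|svm' || missing="$missing virtualization"
    if [ -z "$missing" ]; then
        echo "ok"
    else
        echo "missing:$missing"
        return 1
    fi
}
```

Called without an argument, the function reads /proc/cpuinfo and prints either ok or the names of the missing features. Even when the flag is present, virtualization support may still need to be enabled in the BIOS/UEFI setup.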

2.1.2 Recommended System Requirements

• CPU: 64bit (Intel EM64T or AMD64), multi-core CPU recommended

• Intel VT/AMD-V capable CPU/Mainboard for KVM Full Virtualization support

• RAM: 8 GB RAM, plus additional RAM used for guests

• Hardware RAID with battery-protected write cache (“BBU”) or flash-based protection

• Fast hard drives, best results with 15k rpm SAS, RAID10

• At least two NICs; depending on the storage technology used, you may need more

2.1.3 Simple Performance Overview

On an installed Proxmox VE system, you can run the included pveperf script to obtain an overview of the

CPU and hard disk performance.

Note

This is just a very quick and general benchmark. More detailed tests are recommended, especially

regarding the I/O performance of your system.

2.1.4 Supported web browsers for accessing the web interface

To use the web interface you need a modern browser. This includes:

• Firefox, a release from the current year, or the latest Extended Support Release

• Chrome, a release from the current year

• the versions of Internet Explorer currently supported by Microsoft (as of 2016, this means IE 11 or Edge)

• the versions of Safari currently supported by Apple (as of 2016, this means Safari 9)

If Proxmox VE detects you’re connecting from a mobile device, you will be redirected to a lightweight

touch-based UI.

2.2 Using the Proxmox VE Installation CD-ROM

You can download the ISO from http://www.proxmox.com. It includes the following:

• Complete operating system (Debian Linux, 64-bit)

• The Proxmox VE installer, which partitions the hard drive(s) with ext4, ext3, xfs or ZFS and installs the

operating system.

• Proxmox VE kernel (Linux) with LXC and KVM support

• Complete toolset for administering virtual machines, containers and all necessary resources

• Web based management interface for using the toolset

Note

By default, the complete server is used and all existing data is removed.

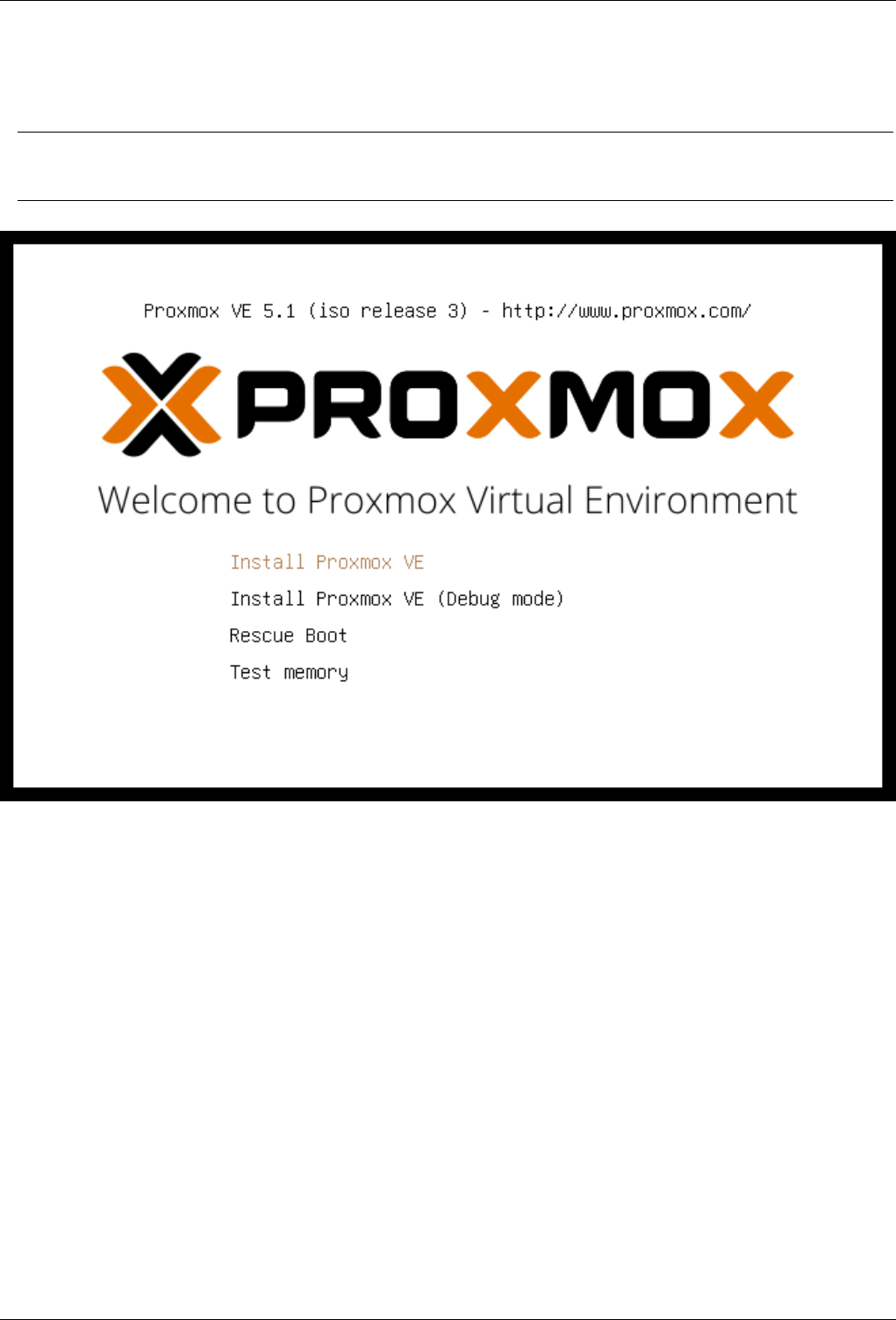

Please insert the installation CD-ROM, then boot from that drive. Immediately afterwards you can choose

the following menu options:

Install Proxmox VE

Start normal installation.

Install Proxmox VE (Debug mode)

Start installation in debug mode. It opens a shell console at several installation steps, so that you

can debug things if something goes wrong. Please press CTRL-D to exit those debug consoles and

continue installation. This option is mostly for developers and not meant for general use.

Rescue Boot

This option allows you to boot an existing installation. It searches all attached hard disks and, if it finds

an existing installation, boots directly into that disk using the existing Linux kernel. This can be useful

if there are problems with the boot block (grub), or the BIOS is unable to read the boot block from the

disk.

Test Memory

Runs memtest86+. This is useful to check if your memory is functional and error free.

You normally select Install Proxmox VE to start the installation. After that you get prompted to select the

target hard disk(s). The Options button lets you select the target file system, which defaults to ext4. The

installer uses LVM if you select ext3, ext4 or xfs as the file system, and offers additional options to restrict

LVM space (see below).

If you have more than one disk, you can also use ZFS as file system. ZFS supports several software RAID

levels, so this is especially useful if you do not have a hardware RAID controller. The Options button lets

you select the ZFS RAID level, and you can choose disks there.

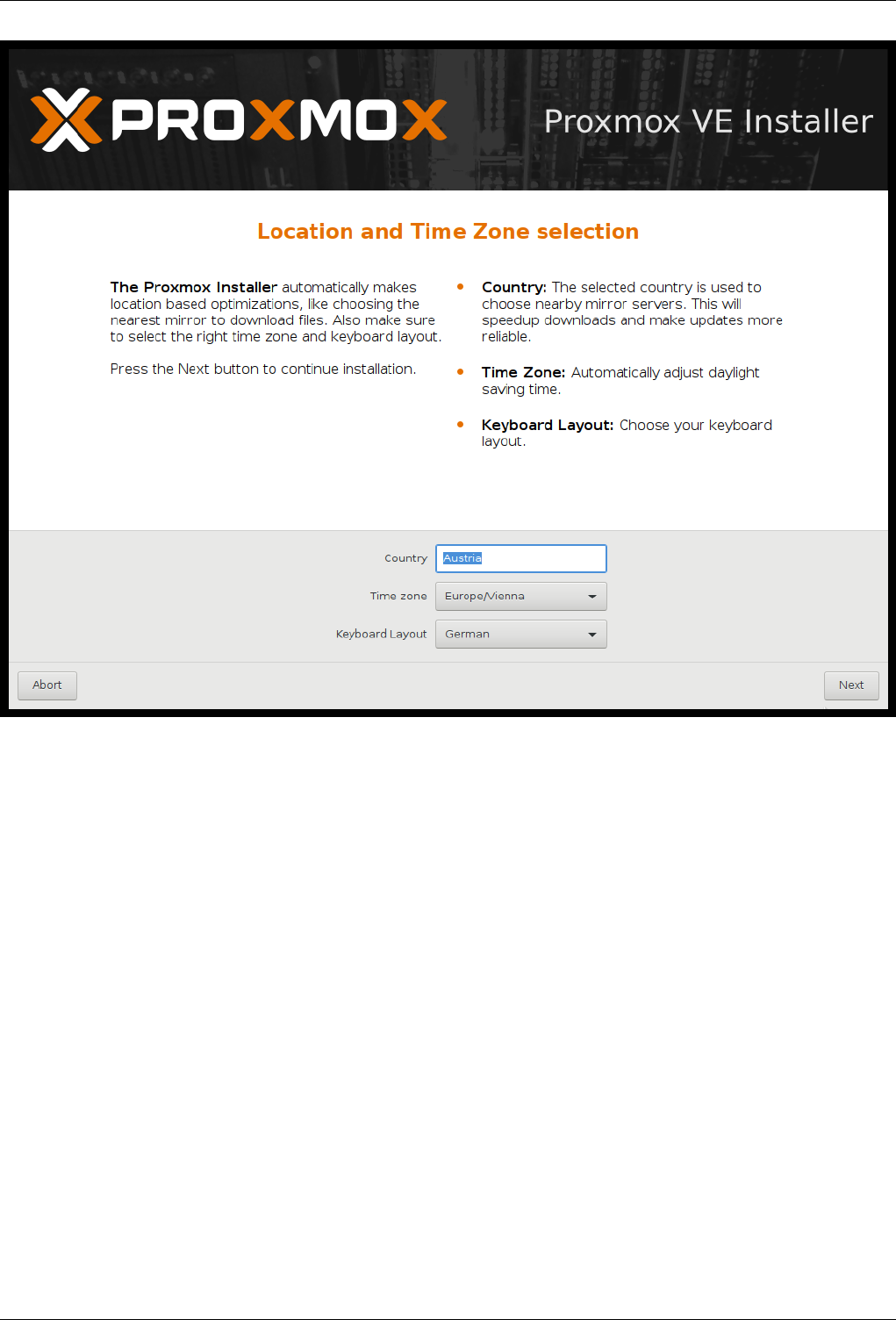

The next page just asks for basic configuration options like your location, the time zone and keyboard layout.

The location is used to select a download server near you to speed up updates. The installer is usually able

to auto-detect these settings, so you only need to change them in the rare situations when auto-detection fails, or

when you want to use a special keyboard layout not commonly used in your country.

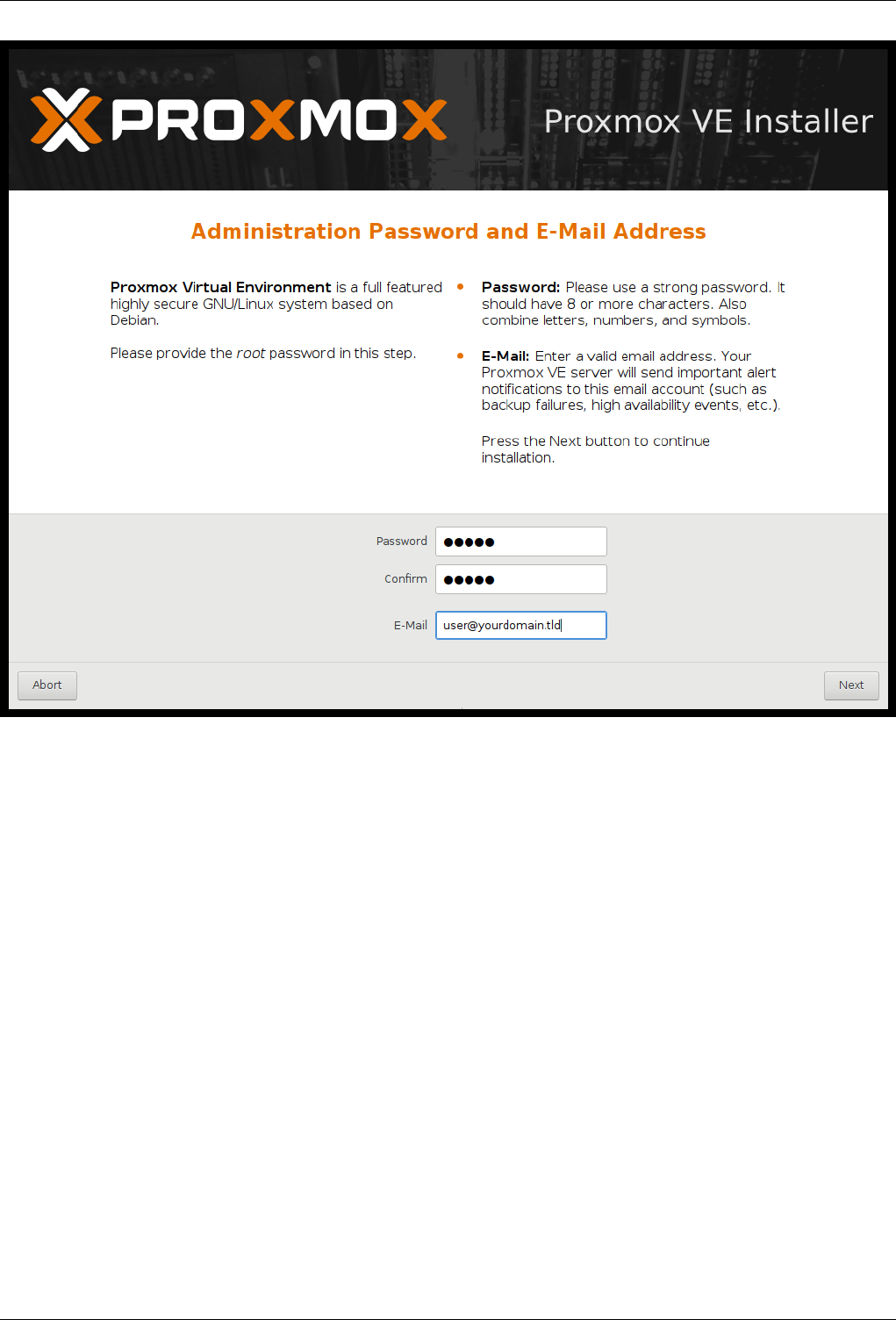

You then need to specify an email address and the superuser (root) password. The password must have at

least 5 characters, but we highly recommend using a stronger password. Here are some guidelines:

• Use a minimum password length of 12 to 14 characters.

• Include lowercase and uppercase alphabetic characters, numbers and symbols.

• Avoid character repetition, keyboard patterns, dictionary words, letter or number sequences, usernames,

relative or pet names, romantic links (current or past) and biographical information (e.g., ID numbers,

ancestors’ names or dates).
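
The first two guidelines above can be checked mechanically. This is only a sketch: check_password is a hypothetical helper, not something the installer runs. It tests the length and the character classes, and cannot detect dictionary words, keyboard patterns or personal information.

```shell
#!/bin/sh
# check_password PASSWORD
# Hypothetical helper: prints "ok" or the first guideline the password fails.
check_password() {
    pw="$1"
    # Guideline: minimum length of 12 characters.
    if [ "${#pw}" -lt 12 ]; then
        echo "too short"; return 1
    fi
    # Guideline: lowercase, uppercase, numbers and symbols must all occur.
    case "$pw" in *[[:lower:]]*) ;; *) echo "no lowercase"; return 1 ;; esac
    case "$pw" in *[[:upper:]]*) ;; *) echo "no uppercase"; return 1 ;; esac
    case "$pw" in *[[:digit:]]*) ;; *) echo "no digit"; return 1 ;; esac
    case "$pw" in *[![:alnum:]]*) ;; *) echo "no symbol"; return 1 ;; esac
    echo "ok"
}
```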

It is sometimes necessary to send notifications to the system administrator, for example:

• Information about available package updates.

• Error messages from periodic CRON jobs.

All those notification mails will be sent to the specified email address.

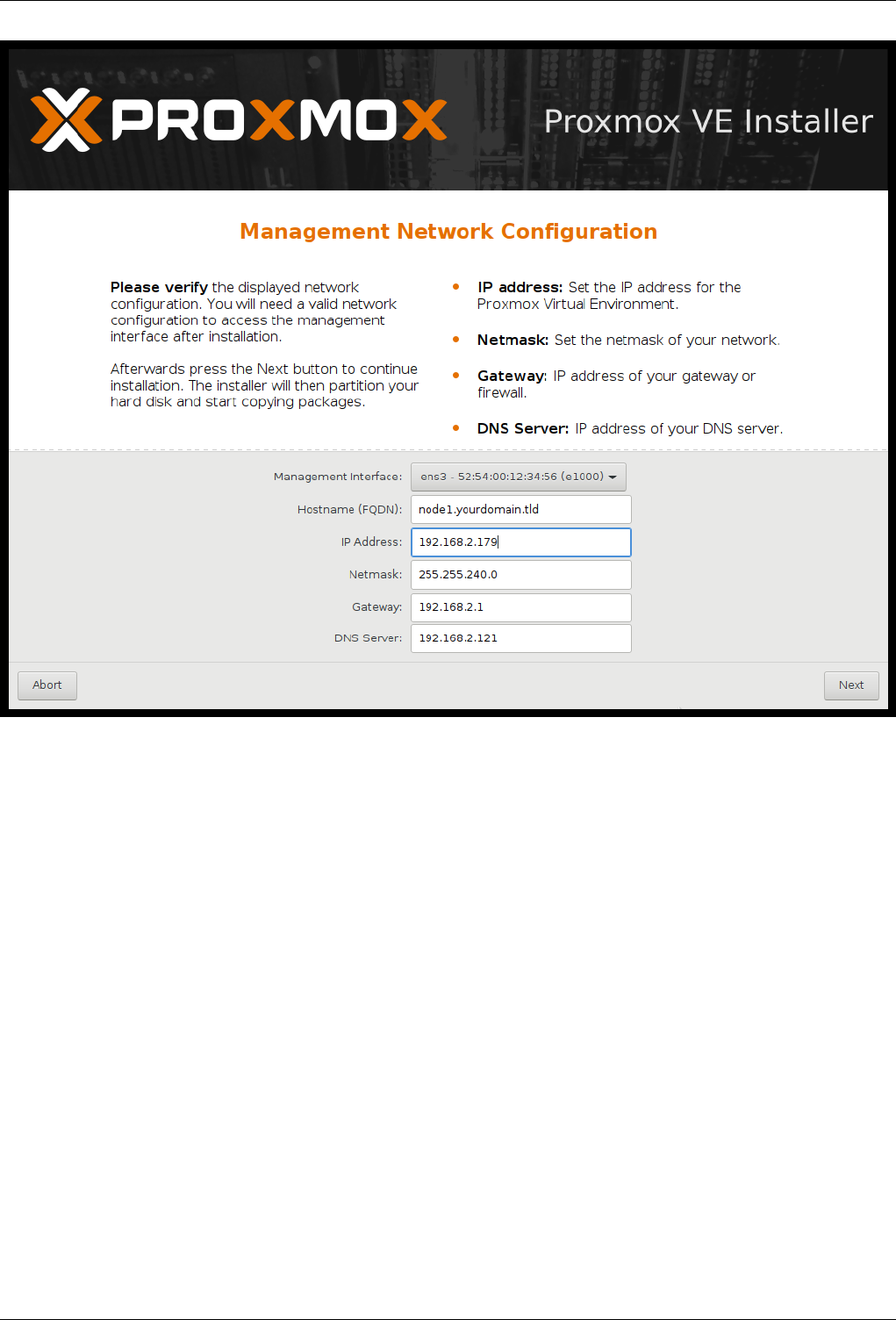

The last step is the network configuration. Please note that you can use either IPv4 or IPv6 here, but not

both. If you want to configure a dual stack node, you can easily do that after installation.

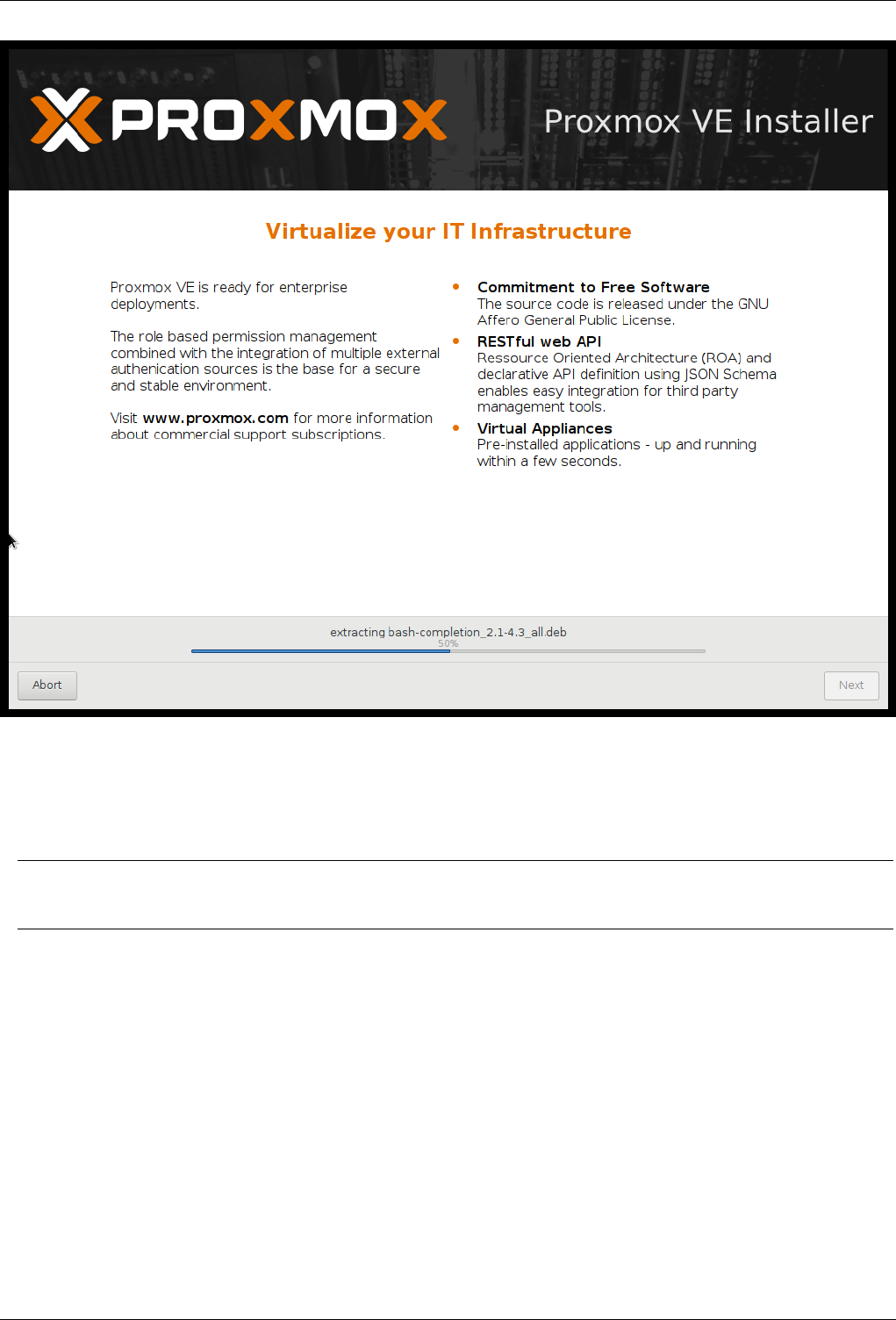

If you press Next now, the installer formats the disks and copies packages to the target. Please wait

until that is finished, then reboot the server.

Further configuration is done via the Proxmox web interface. Just point your browser to the IP address given

during installation (https://youripaddress:8006).

Note

Default login is "root" (realm PAM); the root password is the one defined during the installation process.

2.2.1 Advanced LVM Configuration Options

The installer creates a Volume Group (VG) called pve, and additional Logical Volumes (LVs) called root,

data and swap. The size of those volumes can be controlled with:

hdsize

Defines the total HD size to be used. This way you can save free space on the HD for further

partitioning (i.e. for an additional PV and VG on the same hard disk that can be used for LVM storage).

swapsize

Defines the size of the swap volume. The default is the size of the installed memory, minimum 4 GB

and maximum 8 GB. The resulting value cannot be greater than hdsize/8.

Note

If set to 0, no swap volume will be created.

maxroot

Defines the maximum size of the root volume, which stores the operating system. The maximum

limit of the root volume size is hdsize/4.

maxvz

Defines the maximum size of the data volume. The actual size of the data volume is:

datasize = hdsize - rootsize - swapsize - minfree

Where datasize cannot be bigger than maxvz.

Note

In case of LVM thin, the data pool will only be created if datasize is bigger than 4GB.

Note

If set to 0, no data volume will be created and the storage configuration will be adapted accordingly.

minfree

Defines the amount of free space left in LVM volume group pve. With more than 128GB storage

available the default is 16GB, else hdsize/8 will be used.

Note

LVM requires free space in the VG for snapshot creation (not required for lvmthin snapshots).
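
To see how these options interact, the rules above can be worked through with whole-GB arithmetic. This is a rough sketch, not the installer's actual code. The function name lvm_layout is hypothetical, and rootsize is assumed to sit at its upper limit of hdsize/4 unless a smaller maxroot is passed. The real installer may pick a different root size.

```shell
#!/bin/sh
# lvm_layout HDSIZE MEMORY [MAXROOT] [MAXVZ]   (all values in whole GB)
# Hypothetical helper that applies the sizing rules described above.
lvm_layout() {
    hdsize=$1; mem=$2; maxroot=${3:-}; maxvz=${4:-}

    # swapsize: installed memory, clamped to 4..8 GB, never above hdsize/8.
    swap=$mem
    if [ "$swap" -lt 4 ]; then swap=4; fi
    if [ "$swap" -gt 8 ]; then swap=8; fi
    if [ "$swap" -gt $((hdsize / 8)) ]; then swap=$((hdsize / 8)); fi

    # rootsize: ASSUMPTION - use the upper limit hdsize/4 unless maxroot is smaller.
    root=$((hdsize / 4))
    if [ -n "$maxroot" ] && [ "$maxroot" -lt "$root" ]; then root=$maxroot; fi

    # minfree: 16 GB with more than 128 GB of storage, else hdsize/8.
    if [ "$hdsize" -gt 128 ]; then minfree=16; else minfree=$((hdsize / 8)); fi

    # datasize = hdsize - rootsize - swapsize - minfree, capped at maxvz.
    data=$((hdsize - root - swap - minfree))
    if [ -n "$maxvz" ] && [ "$data" -gt "$maxvz" ]; then data=$maxvz; fi

    echo "root=$root swap=$swap minfree=$minfree data=$data"
}
```

For a 32 GB disk and 4 GB of memory, lvm_layout 32 4 prints root=8 swap=4 minfree=4 data=16.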

2.2.2 ZFS Performance Tips

ZFS uses a lot of memory, so it is best to add additional RAM if you want to use ZFS. A good rule of thumb is

4 GB plus 1 GB of RAM for each TB of raw disk space.
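
As a worked example of the sizing rule above, the sketch below adds up the raw capacity of all disks in the pool, regardless of RAID level. The function name zfs_ram_gb is hypothetical, and disk sizes are given in whole TB.

```shell
#!/bin/sh
# zfs_ram_gb DISK_TB...
# Hypothetical helper: suggested RAM in GB = 4 GB + 1 GB per TB of raw disk space.
zfs_ram_gb() {
    raw_tb=0
    # Sum the raw size of every disk passed on the command line.
    for disk_tb in "$@"; do
        raw_tb=$((raw_tb + disk_tb))
    done
    echo $((4 + raw_tb))
}
```

For four 4 TB disks, zfs_ram_gb 4 4 4 4 prints 20.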

ZFS also provides the feature to use a fast SSD drive as write cache. The write cache is called the ZFS

Intent Log (ZIL). You can add that after installation using the following command:

zpool add <pool-name> log </dev/path_to_fast_ssd>

2.3 Install Proxmox VE on Debian

Proxmox VE ships as a set of Debian packages, so you can install it on top of a normal Debian installation.

After configuring the repositories, you need to run:

apt-get update

apt-get install proxmox-ve

Installing on top of an existing Debian installation looks easy, but it presumes that you have correctly installed

the base system, and you know how you want to configure and use the local storage. Network configuration

is also completely up to you.

In general, this is not trivial, especially when you use LVM or ZFS.

You can find a detailed step by step howto on the wiki.

2.4 Install from USB Stick

The Proxmox VE installation media is now a hybrid ISO image, working in two ways:

• An ISO image file ready to burn on CD

• A raw sector (IMG) image file ready to directly copy to flash media (USB Stick)

Using USB sticks is faster and more environmentally friendly and therefore the recommended way to install

Proxmox VE.

2.4.1 Prepare a USB flash drive as install medium

In order to boot the installation media, copy the ISO image to USB media.

First download the ISO image from https://www.proxmox.com/en/downloads/category/iso-images-pve

You need USB media with a capacity of at least 1 GB.

Note

Using UNetbootin or Rufus does not work.

Important

Make sure that the USB media is not mounted and does not contain any important data.

2.4.2 Instructions for GNU/Linux

You can simply use dd on UNIX-like systems. First download the ISO image, then plug in the USB stick. You

need to find out what device name gets assigned to the USB stick (see below). Then run:

dd if=proxmox-ve_*.iso of=/dev/XYZ bs=1M

Note

Be sure to replace /dev/XYZ with the correct device name.

Caution

Be very careful, and do not overwrite the hard disk!

Find Correct USB Device Name

You can compare the last lines of the dmesg command output before and after the insertion, or use the lsblk command.

Open a terminal and run:

lsblk

Then plug in your USB media and run the command again:

lsblk

A new device will appear, and this is the USB device you want to use.
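
Instead of comparing the two lsblk listings by eye, you can save them to files and let the shell print the difference. This is a minimal sketch: new_devices is a hypothetical helper, and the file names in the usage comment are arbitrary.

```shell
#!/bin/sh
# new_devices BEFORE_FILE AFTER_FILE
# Hypothetical helper: prints the lines (device names) that appear in
# AFTER_FILE but not in BEFORE_FILE.
new_devices() {
    before=$1; after=$2
    # -F fixed strings, -x whole-line match, -f patterns from file,
    # -v invert: keep only lines of $after not listed in $before.
    grep -vxFf "$before" "$after"
}

# Usage (run the first lsblk before plugging in the stick, the second after):
#   lsblk -dn -o NAME > /tmp/before.txt
#   lsblk -dn -o NAME > /tmp/after.txt
#   new_devices /tmp/before.txt /tmp/after.txt
```

The lsblk options limit the listing to whole disks (-d), without a header line (-n), showing only the device name (-o NAME). Prefix the printed name with /dev/ to get the path for dd, and double-check it before writing.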

2.4.3 Instructions for OSX

Open the terminal (query Terminal in Spotlight).

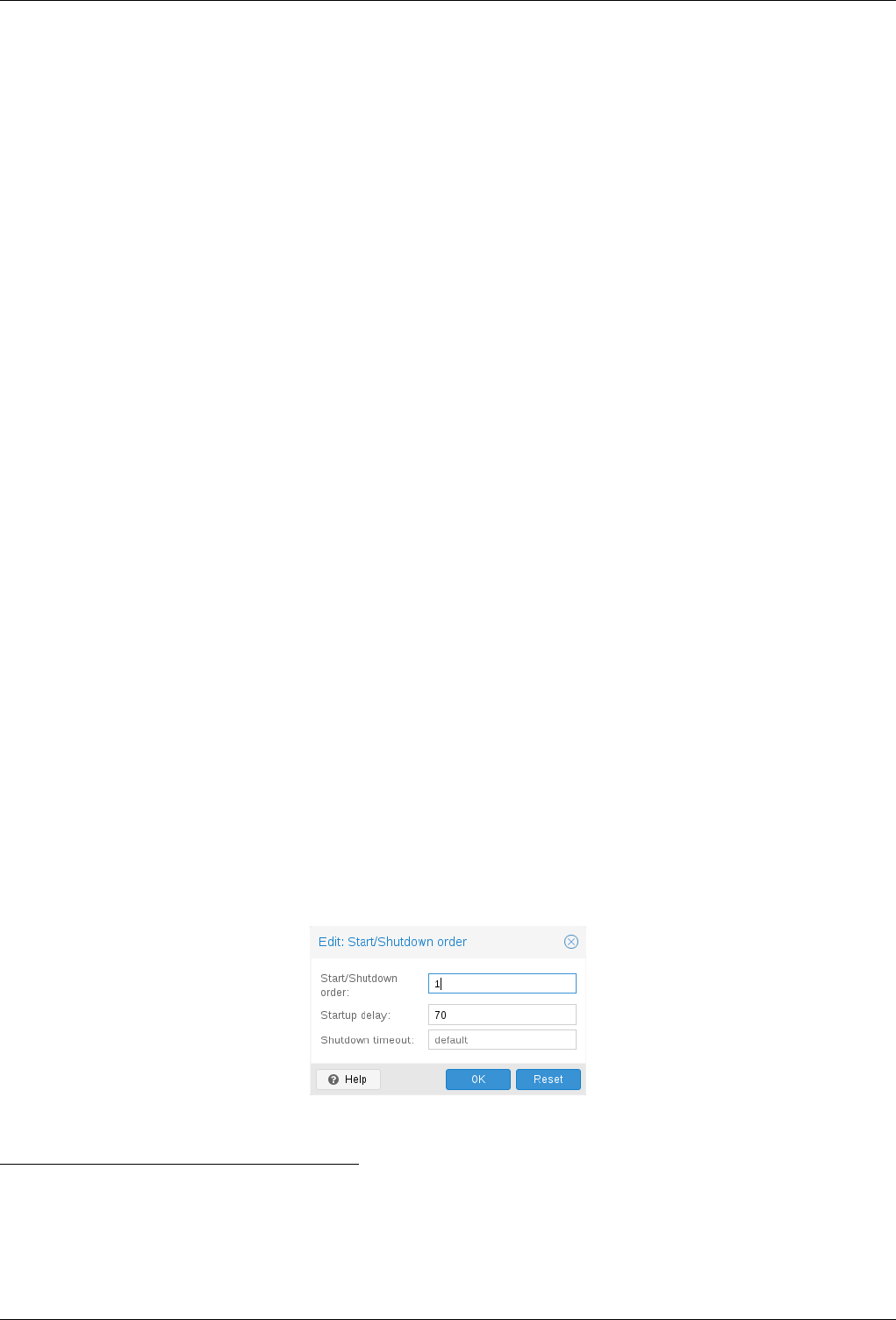

Convert the .iso file to .img using the convert option of hdiutil for example.