A quick way to determine how much memory MySQL thinks it could allocate is as follows:

wget mysqltuner.pl

perl mysqltuner.pl

When you run this script, it will tell you what percentage of the installed RAM MySQL thinks it can safely allocate. If the answer given is over 100%, you definitely need to lower your buffer sizes. The main one to focus on are:

sort_buffer_size

read_buffer_size

read_rnd_buffer_size

join_buffer_size

max_connections

key_buffer_size (not really effective past 4G)

@DTest already set the direction for you in his answer, so +1 for his anwser. The perl script will tell you what happens if you don’t set it or if you change any value. Here is an example:

A client of mine has

read_buffer_size=128K

read_rnd_buffer_size=256K

sort_buffer_size=2M

join_buffer_size=128K

max_connections=1050

Here is the output from mysqltuner.pl:

MySQLTuner 1.2.0 — Major Hayden

Bug reports, feature requests, and downloads at http://mysqltuner.com/

Run with ‘—help’ for additional options and output filtering

Please enter your MySQL administrative login: lwdba

Please enter your MySQL administrative password:

——— General Statistics —————————————————

[—] Skipped version check for MySQLTuner script

[OK] Currently running supported MySQL version 5.0.51a-community-log

[!!] Switch to 64-bit OS — MySQL cannot currently use all of your RAM

——— Storage Engine Statistics ——————————————-

[—] Status: +Archive -BDB +Federated +InnoDB -ISAM -NDBCluster

[—] Data in MyISAM tables: 319M (Tables: 108)

[—] Data in InnoDB tables: 2M (Tables: 5)

[!!] Total fragmented tables: 22

——— Performance Metrics ————————————————-

[—] Up for: 52d 23h 15m 57s (72M q [15.875 qps], 241K conn, TX: 2B, RX: 1B)

[—] Reads / Writes: 59% / 41%

[—] Total buffers: 34.0M global + 2.7M per thread (1050 max threads)

[!!] Allocating > 2GB RAM on 32-bit systems can cause system instability

[!!] Maximum possible memory usage: 2.8G (72% of installed RAM)

[OK] Slow queries: 0% (54/72M)

[OK] Highest usage of available connections: 6% (65/1050)

[OK] Key buffer size / total MyISAM indexes: 8.0M/82.1M

[OK] Key buffer hit rate: 100.0% (4B cached / 1M reads)

[!!] Query cache is disabled

[OK] Sorts requiring temporary tables: 0% (0 temp sorts / 948K sorts)

[OK] Temporary tables created on disk: 3% (11K on disk / 380K total)

[!!] Thread cache is disabled

[!!] Table cache hit rate: 0% (64 open / 32K opened)

[OK] Open file limit used: 2% (125/5K)

[OK] Table locks acquired immediately: 99% (30M immediate / 30M locks)

[OK] InnoDB data size / buffer pool: 2.7M/8.0M

——— Recommendations ——————————————————

General recommendations:

Run OPTIMIZE TABLE to defragment tables for better performance

Enable the slow query log to troubleshoot bad queries

Set thread_cache_size to 4 as a starting value

Increase table_cache gradually to avoid file descriptor limits

Variables to adjust:

query_cache_size (>= 8M)

thread_cache_size (start at 4)

table_cache (> 64)

Please notice under performance metrics

[—] Total buffers: 34.0M global + 2.7M per thread (1050 max threads)

that MySQL can allocate up to 72% of installed RAM based on the settings in /etc/my.cnf.

The 34M is based on innodb_buffer_pool_size and key_buffer_size combined

The 2.7M per thread was based on read_buffer_size + read_rnd_buffer_size + sort_buffer_size + join_buffer_size.

Multiples of the 2.7M are based on max_connections.

Therefore, you must tweek these parameters until the Performance metrics report says you have under 100% (preferrably under 80%) of installed RAM.

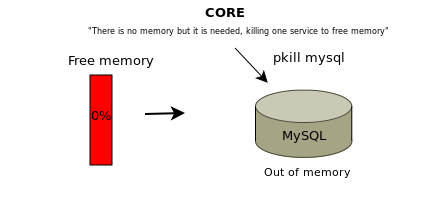

Часто при анализе неполадок на сервере в выводе утилиты dmesg приходится встречать сообщения о OOM и прекращении ядром процессов MySQL. Ошибка Out of memory MySQL — ситуация при которой системе не хватает оперативной памяти для нормального функционирования, при возникновении подобной ситуации ядро принудительно завершает процесс MySQL. Процесс MySQL будет завершен если система сочтет его самым малозначительный процессом.

В случае если сервер используется для размещения веб-сайтов ресурсы при этом становятся недоступны поскольку пропадает соединение с сервером баз данных. Вместо контента сайтов может отображаться ошибка 500 или ошибка о невозможности подключиться к базе данных.

Чаще всего вопрос решается запуском MySQL

service mysql start

Однако, часто при «убийстве» процесса ядром происходит повреждение таблиц баз данных. Обычно их можно починить используя утилиту myisamchk, но случаются и ситуации когда MySQL не запускается и после починки таблиц.

Работы по устранению неисправностей в этом случае могут занять определенное время, сайты на сервере при этом будут продолжать оставаться недоступны.

В Linux существует возможность дать ядру указание на то какие процессу убивать не следует. При необходимости можно задать в качестве такого процесса процесс, выполняемый от имени сервера баз данных.

Делается это при помощи cgroups или cgroup (начиная с версии ядра 4.5).

В рамках статьи вдаваться в особенности работы cgroups не будем, приведем лишь способ решения поставленной задачи — ограждение процесса MySQL от убийства ядром.

Добавим в tasks идентификатор процесса MySQL

ps aux | grep mysql

В выводе видим нужный PID, помещаем его в tasks

echo PID > /sys/fs/cgroup/cpuset/group0/tasks

где PID — идентификатор процесса

cgroups позволяет управлять памятью, в том числе создавать ограничения для механизма срабатывающего при нехватке RAM:

echo 1 > /sys/fs/cgroup/memory/group0/memory.oom_control

cat /sys/fs/cgroup/memory/group0/memory.oom_control

oom_killdisable 1

under_oom 0

При преувеличении потребления памяти сейчас процесс убит не будет, ядро выберет другую жертву и принудительно завершит работу другой службы, серверу баз данных и таблицам БД угрожать при выполнении описанных действий ничего не будет.

Используя cgroups мы поместили процесс в группу, группу затем добавили в подсистему.

Самым лучшим решением если подобные ситуации появляются регулярно является увеличение количества RAM.

Читайте про то как исправить таблицы после сбоя если они имеют тип InnoDB (упомянутая утилита myisamchk в этом случае не поможет).

Do the operating systems kill your MySQL instances from time to time? Are some database servers swapping constantly? These are relatively common problems. Why? How to prevent them?

Vim /var/log/messages

Memory allocation

When a running program needs some additional memory, it can typically allocate it dynamically with malloc() function. It finds an unused continuous block that is at least as large as the requested size, reserves as much as it needs, and returns a pointer to that space. No initialization of the memory contents is performed at the time. When malloc() returns NULL instead of a valid address, it is an information to the calling program that there wasn’t enough memory available and the call has failed to allocate anything. In such cases applications typically take appropriate actions to notify users about the problem and terminate some of their activity or completely shut down.

In Linux it can be a little bit more complicated. Calling malloc() may succeed even if there should be no more memory available. It is because Linux implements optimistic memory allocation, which is an element of the legacy system architecture. Optimistic memory allocation allows applications to ask for and receive more than can be possibly offered. The system hopes and assumes that all running applications won’t ever require all of the allocated memory all at once. It is a bit like with airlines, which like to overbook their flights assuming they can get away with it as in most cases there are a few no-shows or last minute cancellations. That way all applications may think they have the memory they allocated, just the same way you think you have a seat on the flight you booked, but eventually the system may not be able to provide it.

What then?

There are a few scenarios. At first the system can start swapping some of the memory contents to disk, because the whole reason the swap space is there is to extend the physical memory. But as applications keep using it more and more, there is a physical limit they cannot cross. At that point either some of them crash miserably or, more typically, system decides to kill one of them and this way quickly free some resources.

The optimistic memory allocation is also implemented in FreeBSD, while on the other hand Solaris never overcommits memory.

OOM Killer

The Linux kernel has a functionality called Out Of Memory Killer (or OOM Killer) responsible for dealing with memory exhaustion. If system reaches a point where it may soon run out of all memory, OOM Killer looks for a process it could killand ends its life.

Jun 11 21:04:48 prod-odsmadb kernel: Killed process 2138, UID 27, (mysqld).

How does it work and why does it often kill MySQL?

OOM Killer uses a heuristic system to choose a processes for termination. It is based on a score associated with each running application, which is calculated by oom_badness() call, formerly named badness(), inside Linux kernel. Those interested in more details can check the source code in mm/oom_kill.c.

The algorithm is relatively simple and usually the more memory a process uses, the higher score it receives, which makes it more likely to be killed. But there are actually more factors that are considered:

- memory consumption

- process ownership

- process age (older kenerls only)

- CPU time used (older kernels only)

- process nice value (older kernels only)

- process flags

- oom_adj/oom_score_adj setting

The complete list can be different for different Linux versions as the algorithm was mostly re-written in kernel 2.6.29.

In the past, the modifiers used to impact the score significantly and for example if a task had niceness above zero, its score was doubled. If it was owned by a privileged user, the score was divided by eight. In new kernels it is no longer the case. For instance, a process belonging to root now only receive a very small bonus of 30 points out of the possible 1000. With these changes the developers wanted a more predictable algorithm and apparently this was the way to achieve that.

So why does it kill MySQL so often? The answer is simple — because MySQL typically uses the most memory out of all processes running in a system.

The experiment

To demonstrate the problem mentioned above, here’s a little experiment. I wrote a tiny program that does nothing except it allocates some memory and then fills it with values in 50MB blocks every now and then. On a machine with 1.5GB of memory and 128MB swap space and running a Linux distribution, I started two instances of the program:

USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND root 3142 3.0 3.3 1052552 51816 pts/0 S 18:42 0:00 ./test-malloc root 3143 3.0 3.4 1052552 52684 pts/0 S 18:42 0:00 ./test-malloc

Each process was granted 1GB of memory as shown in VSZ, which together was more than RAM and swap size combined. However, at the time each process actually only used 50MB as reported in RSS, while the rest of it was never even initialized. The system could easily offer 2GB, and it did, when most of it was not used at all.

USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND root 3143 10.1 49.2 1052552 757232 pts/0 R 18:43 0:01 ./test-malloc root 3142 6.3 35.3 1052552 542756 pts/0 D 18:43 0:01 ./test-malloc

As both programs kept writing into the memory they allocated, their respective resident set sizes grew larger and larger. At that point the system ran out of physical RAM and started swapping out. Eventually, a few moments later, it decided it couldn’t let both growing programs live and decided to kill one of them:

test-malloc invoked oom-killer: gfp_mask=0x280da, order=0, oom_adj=0, oom_score_adj=0 test-malloc cpuset=/ mems_allowed=0 [..] Out of memory: Kill process 3143 (test-malloc) score 465 or sacrifice child Killed process 3143, UID 0, (test-malloc) total-vm:1052552kB, anon-rss:862564kB, file-rss:8kB

Only then the other instance managed to use its full allocation of 1GB, even though in the beginning both were told they could have 1GB each.

From MySQL DBA perspective

A similar problem may appear when running a MySQL database. It allocates a lot of different buffers for various purposes. Some last only for a short time, others live for as long as the database instance itself. These buffers start empty and only fill up over time, which means it may be very easy to run into these problems as in the beginning, right after a database instance is started, there may not be any signs that anything bad could be going on.

The server

Running into memory problems related to MySQL server usually implies bad database configuration. Whether you run a dedicated database server or share resources between a few larger applications, the memory configuration has to be set correctly to reflect the specific circumstances.

A few common causes of overrunning the available memory:

- database main buffers were set too large, e.g.: key_buffer_size, innodb_buffer_pool

- per-session buffers were set too large given the number of concurrently connected clients or concurrently running queries, e.g.: read_buffer_size or sort_buffer_size

- temporary tables configuration allows very large in-memory tables and some queries take advantage of that, e.g.: max_heap_table_size, tmp_table_size

- database carries very large number of InnoDB tables (e.g. tens of thousands), but data dictionary size limit was not set in innodb_dict_size_limit

In other cases it is also possible that something other than MySQL uses a lot of memory. It could be a web server running in the same system, or a cron job script. Their activity can also eventually cause problems with MySQL.

Example: InnoDB buffer pool size was set too larger

mysql> show global variables like 'innodb_buffer_pool_size';

+-------------------------+------------+

| Variable_name | Value |

+-------------------------+------------+

| innodb_buffer_pool_size | 5368709120 |

+-------------------------+------------+

mysql> ! free -g

total used free shared buffers cached

Mem: 3 3 0 0 0 0

-/+ buffers/cache: 2 1

Swap: 3 0 3

MySQL allowed using 5GB for InnoDB buffer pool even though the server only had 3GB of RAM. Why? The system allowed it, while MySQL didn’t check. The example is of course a bit exaggerated and may not even be possible with never MySQL versions, but even if you set it just below the limit, eventually the effect could be similar. If for example many threads connected and started allocating and using their private buffers, the system would likely ran out of memory at some point.

Example: A running cron job

Often cron jobs are written in Perl, PHP, Python or another scripting language that allows implementing a more complex logic. Very frequently I see such jobs running on a database server rather than on a dedicated system for handling internal tasks. Those scripts sometimes load into memory a lot of data pulled out of MySQL tables. They often quickly grow to hundreds of megabytes and beyond, which may significantly affect the global memory usage, causing swapping or even trigger a OOM Killer action.

Prevention

Analyse and fix MySQL configuration

By far the most important element of preventing memory allocation problems on a database server is creating a correct MySQL configuration. Setting any per-thread buffer to a hundred megabytes or more is a frequent bad practice that can very easily backfire. Global buffer sizes have to be sane as well and combined they must never be allowed to allocate more than approximately 80% of memory. In some cases the value that you specify in the configuration does not include any extra buffers or overhead. For instance InnoDB will typically use up to 5-10% more memory than set in innodb_buffer_pool_size.

Adjust OOM score

But taking care of MySQL configuration does not cover every contingency. There can always be some circumstances, such as a nasty cron job running, under which the system may decide to end some task. Fortunately there is a way to control the behaviour of OOM Killer. You can manually adjust the OOM score of each process independently. This is possible through /proc/[pid]/oom_score_adj (or /proc/[pid]/oom_adj on older systems).

mysql-server-01 ~ # cat /proc/$(pidof mysqld)/oom_score_adj 0

The range of values which oom_score_adj accepts is between -1000 and 1000 (or -17 to 15 for the deprecated interface that relies on oom_adj). Once it is set to any non-zero value, the score that oom_badness() calculates will be either reduced or increased by this manual adjustment. Setting it to the minimum possible value does not disable OOM Killer for the particular process, but simply rather reduces its chances for being chose to a minimum.

Here is the example of how it works:

mysql-server-01 ~ # cat /proc/$(pidof mysqld)/oom_score 24 mysql-server-01 ~ # echo '-20' > /proc/$(pidof mysqld)/oom_score_adj mysql-server-01 ~ # cat /proc/$(pidof mysqld)/oom_score 4

It is a pretty good way to tell the system to avoid killing the MySQL instance whenever there is a pressure to free memory. The problem is of course that if not MySQL, the biggest memory consumer, then what? It is why it may be important to adjust the score not only for mysqld process alone, but also for example for sshd, which often is nearly as critical application as database itself.

Tunning overcommit

Starting from kernel 2.5.30 there is possibility to change the system behaviour during memory allocation. It was implemented to help fighting the issues related to the memory overcommitment. It can be controlled through /proc or sysctl:

mysql-server-01 ~ # ls -l /proc/sys/vm/overcommit_* -rw-r--r-- 1 root root 0 May 17 18:12 /proc/sys/vm/overcommit_memory -rw-r--r-- 1 root root 0 May 17 18:12 /proc/sys/vm/overcommit_ratio

The values /proc/sys/vm/overcommit_memory accepts:

- 0 – The default value. Linux kernel uses predefined heuristics when deciding whether to allow memory overcommit or not.

- 1 – Always allow overcommit. Back to the past when Linux was doing it by default.

- 2 – Prevents overcommit when certain limit is exceeded.

The other parameter – /proc/sys/vm/overcommit_ratio – affects the limit mentioned above. The limit is defined as swap size plus the percentage of memory equal to the value set in overcommit_ratio. For example:

mysql-server-01 ~ # free -g

total used free shared buffers cached

Mem: 3 3 0 0 0 0

-/+ buffers/cache: 2 1

Swap: 3 0 3

mysql-server-01 ~ # cat /proc/sys/vm/overcommit_ratio

50

The calculation is: 3GB of swap space + 50% of 3GB of RAM = 4.5GB. The system will not permit allocating more than 4.5GB and that’s the global limit (not per process). The limit and the actual usage can be checked in /proc/meminfo by looking at the CommitLimit and Committed_AS values.

Disabling memory overcommit is not really a common practice, because you have to be a lot more careful, while usually simply proper database configuration is enough to address all these problems. It should never be used on servers where MySQL shares the system resources with other applications such as web server software, because the configuration does not play well with applications that strongly rely on fork() call such as Apache or even Java applications.

Summary

So why is this important? When systems starts swapping it is usually bad for MySQL performance, but it is a condition that can be addressed relatively quickly and painlessly, with a database restart for example. A database crash caused by OOM Killer or a memory outage, on the other hand, is something that can turn into a nasty problem. First, a crash is not a controlled restart, so you do not have any time to prepare for it. Second, restart after a crash may take a lot longer than after a clean shutdown, because database needs to perform recovery. And finally, a crash can actually cause physical damage to data files, so your database may not be able recover at all.

Building a stable, reliable and highly performing MySQL server requires a lot of attention. You cannot just set the buffer sizes arbitrarily and assume it will do as it often leads to nasty problems. Do not ever trust tools that claim they can configure MySQL for you as they can only work on a very limited input and can only make very trivial assumptions.

Source : https://www.psce.com/en/blog/2012/05/31/mysql-oom-killer-and-everything-related/

I have a large DB. When I try to retrieve the table using MySQL workbench I get the following error:

Error code: 2008 MySQL client ran out of memory

One provided solution by MySQL is to use --quick option but I work with the workbench. How can I solve this problem ?

asked Aug 1, 2012 at 16:56

2

You are using more memory than has been provided to you… try slicing down your memory in a number of chunks. I also faced this problem in python connection so I used generators and sliced my query in number of chunks … this will not take your memory.

Try using limit and offset.

answered Sep 15, 2012 at 14:38

Причина ошибки «Out of memory» на VPS

Каждому виртуальному серверу назначается фиксированный объём оперативной памяти, в зависимости от выбранного тарифного плана: от 1ГБ до 32ГБ.

Когда запущенные процессы и приложения хотят получить больше памяти, операционная система обычно начинает случайным образом выбирать некоторые процессы и принудительно завершать их в аварийном режиме.

В результате некоторые службы на сервере перестают работать, сервер часто перегружается и «зависает» в целом, выдавая ошибку «out of memory» (OOM) — нехватка операционной памяти.

Если процесс завершается в целях экономии памяти, она регистрируется в системных журналах и хранится в /var/log/, где вы можете найти подобное сообщения о нехватке памяти:

Jav 11 17:12:34 ovzhost114 kernel: [63353551.892881] Out of memory in UB 33222955: OOM

killed process 110919 (mysqld) score 0 vm:404635kB, rss:06665kB, swap:0kB

В зависимости от дистрибутива Linux и конфигурации системы, вид этой записи может меняться.

Как решить ошибку «Out of memory» на VPS

Узнайте какие процессы запущены

Вам нужно узнать обо всех запущенных процессах и сколько оперативной памяти они потребляют.

- Использование памяти можно отслеживать, например, с помощью команды:

free -h,

которая предоставляет текущую статистику памяти. К примеру, результаты, предоставленные системой в 1ГБ, будут выглядеть примерно так:

| total | used | free | shared | buffers |

cached |

|

|

Mem |

993M | 738M | 255M | 5.7M | 64M |

439M |

|

-/+ buffers/cache: |

234M | 759M | ||||

|

Swap: |

0B | 0B | 0B |

- Ещё одним полезным инструментом для мониторинга памяти является «top», который отображает полезную, постоянно обновляемую информацию об использовании памяти и ЦП, времени выполнения процессов и другой статистике. Это особенно полезно для определения ресурсоёмких задач.

Программа будет работать до тех пор, пока вы не выйдете их неё нажатием «q». Использование ресурсов отображается в процентах и предоставляет лаконичный обзор нагрузки на вашу систему.

Снизьте потребление оперативной памяти

Ограничьте работу ресурсоёмких плагинов и удалите лишние расширения и плагины сайта.

Оптимизируйте настройки MySQL с помощью Mysqltuner

Mysqltuner – это высокопроизводительный сценарий для настройки MySQL, который предоставляет подробную информацию о состоянии и работоспособности сервера MySQL, и даёт конкретные рекомендации по улучшению и повышению производительности.

- Чтобы установить mysqltuner, запустите следующие команды, в зависимости от ОС:

Debian/Ubuntu:

sudo apt-get -y install mysqltuner

CentOS:

sudo yum -y install mysqltuner

- Теперь можно запустить Mysqltuner: mysqltuner

В результате анализа вы получите крайне полезную информацию. Обратите внимание на раздел Recommendations.

- После строки Variables to adjust указаны параметры, которые следует изменить или дописать в случае их отсутствия в файле my.cnf.

Вы сможете найти этот файл в директории:

Debian/Ubuntu:

/etc/mysql/my.cnf

CentOS:

/etc/my.cnf

- Перезагрузите MySQL с помощью команд:

Debian/Ubuntu:

sudo /etc/init.d/mysql restart

CentOS:

sudo systemctl restart mariadb

Оптимизируйте настройки Apache

Неправильная настройка параметров Apache может стать огромной головной болью и привести к нехватке оперативной памяти VPS.

Вы можете выполнить следующие действия:

- Измените файл httpd.conf следующим образом. Такие параметры подходят для сервера с оперативной памятью в 1 ГБ.

|

KeepAlive Off MaxKeepAliveRequests |

100 |

|

KeepAliveTimeout |

15 |

|

StartServers |

2 |

|

ServerLimit |

2 |

|

MinSpareThreads |

50 |

|

MaxSpareThreads |

100 |

|

ThreadLimit |

100 |

|

ThreadsPerChild |

50 |

|

MaxClients |

100 |

|

MaxRequestsPerChild |

10000 |

- Кроме того, этот код можно внести в отдельный файл с расширением .conf и сохранить его в директории /etc/httpd/conf.d.

- Перезагрузите Apache: apachectl restart

Установите Nginx

Nginx служит хорошим балансировщиком нагрузки в сочетании с Apache и потребляет значительно меньше памяти. Больше о нём вы сможете узнать из нашей статьи Что такое nginx и как правильно его настроить.

Включите кэширование контента

Включение кэша вашего сайта уменьшит возникающие ошибки. Этот способ будет особенно полезен, если ваш сайт получает огромный объём трафика.

Перейдите на более высокий тарифный план

При нехватке оперативной памяти рекомендуется выбрать другой тариф VPS. Вы можете ознакомиться с тарифами VDS/VPS на нашем сайте.

Закажите услугу «Администрирование по запросу»

Услуга «Администрирование по запросу» может существенно облегчить вам решение этой проблемы. Просто доверьтесь специалистам RU-CENTER, и они сделают всю сложную работу за вас.

(3).png)

(3).png)